NVIDIA Project Logan Processor Assimilates Kepler Mobile

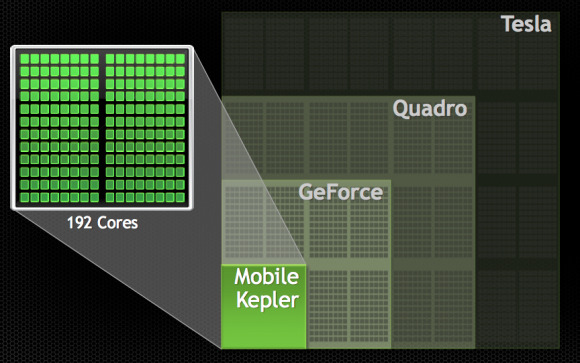

This week NVIDIA is once again blurring the lines between desktop and mobile graphics with a note on the introduction of Kepler technology into their next-generation mobile processor. NVIDIA suggests that, "from a graphics perspective, this is as big a milestone for mobile as the first GPU, GeForce 256, was for the PC when it was introduced 14 years ago." This is the first set of details we're getting on Project Logan, the next processor architecture in the Tegra chipset family.

You'll remember the comic book character collection of code-names for the processors that've become the Tegra 3 and Tegra 4 – and what we must assume will be the Tegra 5 as well. Here with what's still called Project Logan, NVIDIA makes clear their intent to bring graphics processing abilities until now reserved for desktop machines to the mobile realm; for tablets, smartphones, and everything in between.

In addition to deploying Kepler's efficient processing powers to the Logan mobile SoC, NVIDIA intends to offer the technology in a form that the company will be able to license to others. This licensing was outlined earlier this year amid the latest Kepler integrations into GPUs such as the NVIDIA GeForce GTX 760.

NVIDIA suggests that the technology deployed with mobile Kepler is able to use one-third the power of "GPUs in leading tablets, such as the retinal iPad", while it performs identical renderings. They also note that this efficiency is achieved with mobile Kepler without compromising graphics capabilities, working with OpenGL ES 3.0, OpenGL 4.4, and everything else in the OpenGL universe.

Though we're expecting this architecture to hit Google's Android – as NVIDIA's chips have for the past several years – they do mention that the technology also supports DirectX, the latest graphics API from Microsoft. Think Windows RT and Windows 8 – NVIDIA's been there before.

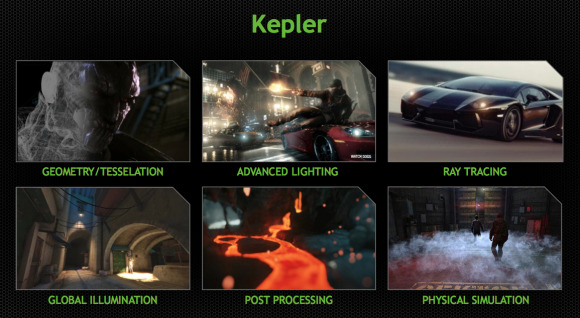

Working with these current and next-generation APIs allows NVIDIA to bring on graphics unlike any seen in the mobile universe, with developers taking hold of these environments through a variety of high-end rendering and simulation techniques. NVIDIA runs down three of the most powerful:

Tessellation – which creates geometry dynamically and efficiently on the GPU from high-level descriptions, sizing triangles optimally based on the user's viewpoint. By comparison, fine detail in a traditional pre-generated approach is inefficient, requiring excess geometry to deal with all possible viewpoints.

Compute-based deferred rendering – which calculates the effect of all lights in the scene in a single deferred rendering pass. This OpenGL 4 capability greatly improves deferred rendering efficiency and scalability compared to current OpenGL ES based implementations, which require an extra pass for each light source in the scene. The scalability of the compute-based approach paves the way to even more advanced lighting models, such as using virtual point lights to approximate global illumination effects.

Advanced anti-aliasing and post-processing – which deliver better image quality, particularly in areas of very sharp color contrast, by making multi-sampling more programmable and allowing applications to implement their own anti-aliasing filters. These also enable more efficient film-quality post-processing effects, such as motion blur and depth of field.
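To make the deferred rendering point above a little more concrete, here is a minimal CPU-side sketch in Python. It is not NVIDIA's implementation and it calls no real graphics API: the names `Light`, `GBufferTexel`, `shade_pass_per_light`, and `shade_single_pass` are all hypothetical, and the lighting model (simple diffuse with inverse-square falloff) is an assumption chosen for brevity. It only illustrates the difference in how often the stored scene data (the "G-buffer") gets read when lights are handled one pass at a time versus all together in a single pass.

```python
from dataclasses import dataclass


@dataclass
class Light:  # hypothetical type, for illustration only
    position: tuple  # (x, y, z)
    intensity: float


@dataclass
class GBufferTexel:  # hypothetical: per-pixel data saved by the geometry pass
    position: tuple  # (x, y, z) of the visible surface
    normal: tuple    # unit-length surface normal
    albedo: float    # greyscale surface reflectance


def diffuse(texel, light):
    """Diffuse contribution of one light at one texel (assumed lighting model)."""
    to_light = tuple(l - p for l, p in zip(light.position, texel.position))
    dist_sq = sum(c * c for c in to_light)
    if dist_sq == 0.0:
        return 0.0
    dist = dist_sq ** 0.5
    n_dot_l = sum(n * c / dist for n, c in zip(texel.normal, to_light))
    return texel.albedo * light.intensity * max(n_dot_l, 0.0) / dist_sq


def shade_pass_per_light(gbuffer, lights):
    """One full-screen pass per light, each blended into the frame."""
    frame = [0.0] * len(gbuffer)
    gbuffer_reads = 0
    for light in lights:                    # one pass per light source
        for i, texel in enumerate(gbuffer):
            gbuffer_reads += 1              # every pass re-reads the G-buffer
            frame[i] += diffuse(texel, light)
    return frame, gbuffer_reads


def shade_single_pass(gbuffer, lights):
    """One pass: each texel is read once and loops over all lights."""
    frame = []
    gbuffer_reads = 0
    for texel in gbuffer:
        gbuffer_reads += 1                  # single read per texel
        frame.append(sum(diffuse(texel, light) for light in lights))
    return frame, gbuffer_reads


if __name__ == "__main__":
    gbuffer = [GBufferTexel((x, 0.0, 0.0), (0.0, 1.0, 0.0), 0.8) for x in range(4)]
    lights = [Light((x, 2.0, 0.0), 10.0) for x in (0.0, 1.5, 3.0)]
    _, reads_multi = shade_pass_per_light(gbuffer, lights)
    _, reads_single = shade_single_pass(gbuffer, lights)
    print("G-buffer reads, pass per light:", reads_multi)
    print("G-buffer reads, single pass:", reads_single)
```

Both functions produce the same image; what changes is that the pass-per-light version touches the G-buffer once per light per pixel, while the single-pass version touches it once per pixel regardless of how many lights are in the scene. On real hardware the saving shows up as memory bandwidth, which is why it matters for a power-constrained mobile chip.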

NVIDIA makes clear that a lovely collection of processing-heavy tasks can be carried out with this next-generation solution, including computer vision, augmented reality, computational imaging, and speech recognition. Shown off this week at SIGGRAPH was a return of the digital head now known as "Ira", aka FaceWorks.

Stick around as we continue to jump deeper into the next big superhero-themed processor, one that'll break barriers beyond what we're only just seeing now with the NVIDIA Tegra 4 – living inside NVIDIA SHIELD and getting pumped up for benchmarks sooner rather than later!

VIA: NVIDIA