5 Features That Make Smart Glasses Not A Total Waste Of Money

It can be easy to see smart glasses as impractical, or even gimmicky, but it's tough to argue with the convenience of wearables, particularly as they become more lightweight and more difficult to distinguish from regular frames. In fact, these qualities could potentially make them a bit too good at what they do. The U.S. Air Force has banned this popular new tech largely because its ability to record audio and video, potentially even without the wearer's knowledge, can make it a dangerous security risk. It's certainly true that there are some places where smart glasses like Meta Ray-Bans really shouldn't be worn, but that doesn't mean that they're necessarily a waste of money.

After all, there are some things that handheld devices like smartphones aren't best equipped to manage. So while this technology may seem superfluous to some, it has a practical appeal that, if implemented well, could see it become mainstream in the years to come. If you're a content creator, for instance, smart glasses could become your new best friend. They may even be doing so already, and we're going to take a look at why. It's important to note that smart glasses and AI glasses are in their relative infancy, so these systems still have some development to go through, but the potential is absolutely there.

Compatibility with your prescription and an increasingly lightweight design

If you've seen 3D movies at the theater, you may well have experienced the classic issue that 3D glasses can be awkward for glasses wearers. Of course, they've been adapted to fit neatly over standard glasses, so you can enjoy the movie, but that's not really an option with smart glasses, which are designed to be worn for long periods. In response to this need, some manufacturers have ensured that they're compatible with your prescription lenses.

Both Oakley and Ray-Ban, for instance, offer this service, with Ray-Ban noting, as reported by Meta, that "you can get your Ray-Ban Meta glasses with prescription by ordering prescription lenses during your purchase at Ray-Ban.com, Ray-Ban Store, or at certified physical retailers." The warranty will only be good, both manufacturers note, if the glasses were purchased directly through these outlets. It's not necessary, of course, to use the advanced features of smart or AI glasses all the time, so they can serve as a replacement for your standard eyeglasses throughout your day.

Smart glasses would also be very impractical if they were too heavy or bulky to wear comfortably. A device like the Apple Vision Pro weighs in at a formidable 650g (with the battery pack adding just over 350g more), making it impractical and uncomfortable to wear for longer periods of time. By contrast, manufacturers of smart glasses and AI glasses are working to make the product indistinguishable from regular eyeglasses. CES 2026, for instance, saw Meta-Bounds unveil its resin diffractive waveguide AI and AR glasses, weighing as little as 25g. This, in turn, makes them more likely to be worn and utilized in different scenarios.

The promising future of waveguide displays

Though it's possible to connect them to various kinds of external displays, smartphone users are mostly confined to the small screen on the device itself. Glasses that place a display right in front of your eyes, meanwhile, are hands-free. This technology is called a waveguide display, and it combines a hidden projector with special thin glass to create a digital image. It's certainly more expensive than models that don't feature it. For example, standard Meta Ray-Bans start at $299.99, which is a full $500 less than the Meta Ray-Ban Display models that feature a waveguide.

For some potential customers, this will be the key to the value of smart glasses. With a waveguide display, these models can do extraordinary things: There's a set of smart glasses that can translate any language being spoken in front of the wearer, for instance, providing a running transcript of the conversation.

Waveguide technology is in its infancy, and that means there's still some development needed. The price of entry compared to counterparts without it is a significant barrier too. So is the bright and rather garish green, which is the only color that the systems can use to display text on the 'screen' for now. None of this means that the waveguide revolution will stop there. It's still one of the most significant "wow factor" features that smart glasses offer, and it's sure to become more advanced. AI technology is always at its best when it's able to fulfill a specific need, and it's been busily serving as a productivity tool for some time already. What this particular technology will be able to do is bring those services to users directly via their lenses.

The world through your own eyes

One thing that many lament is that nobody's "in the moment" anymore. If you look at contemporary footage of a show or gig, you'll often see numerous smartphones pointing in every direction. One important feature that smart and AI glasses offer to help combat this trend is that they provide a true first-person view of what the user is looking at. Simple voice, tap, or gesture controls allow the wearer to capture that view without occupying their hands.

There are, naturally, safety concerns surrounding this kind of discreet photography and video capture, and regulating it is an issue that localities, businesses, and communities continue to grapple with as it becomes more widespread. For some, these concerns are a smart reason not to buy Meta's new AI glasses. Nonetheless, when used where everybody present is aware of and has consented to the recording, it lends an excellent personal, spur-of-the-moment air to a content creator's footage, which is hard to achieve while holding bulky recording equipment or even a smartphone.

It's the ease of use that's the primary factor here, and that's something that can differ a little between models. For instance, using Meta AI glasses, you also have the option of voice prompts, with "Hey Meta, take a video" to begin recording and "Hey Meta, stop" to cut it. "Hey Meta, take a photo" also works for photographs, all of which allows for a more direct and potentially more spontaneous capture. This isn't simply for content creators, but for anybody who wishes they could have captured that fleeting moment more quickly without fumbling for a phone.

Audio without additional headphones

With smart glasses, there's no need to worry about forgetting to toss your headphones in your bag, or forgetting to charge your earbuds and arriving at the gym to find they're dead. This is because smart glasses can play audio for you. The open-ear speakers on the Ray-Ban Meta AI glasses are one innovation that does just that.

Meta says the model offers "discreet, open-ear Bluetooth speakers that deliver rich-quality audio without blocking out ambient noises." This is a critical advantage, as some headphones' noise-canceling qualities can cut wearers off from their surroundings, which is potentially dangerous. In this way, the glasses aim to provide the best of both worlds, with Meta further noting that, when making calls, the recipient will benefit from approximately 90% reduced ambient noise. All of this is without requiring any headphones.

This is very valuable functionality for those who make use of these audio features on a regular basis, and a vital part of the package to take into account when considering whether a pair would be right for you. The idea, here, is again to make the use of a pair of smart glasses or AI glasses feel more natural. If they're a novelty that's only used for very specific moments, we may simply leave them at home when leaving for the gym, work, or wherever we're going, making the purchase a rather wasted investment.

Be My Eyes functionality for the visually impaired

For the developers of any new technology, accessibility is a key concern. One potential game-changer in this department is Be My Eyes, a function implemented in Meta Ray-Ban AI glasses. Those with limited vision may have considered the glasses a wasted purchase, but after engaging with this tool, they may find that it becomes one of the best purchases they've ever made.

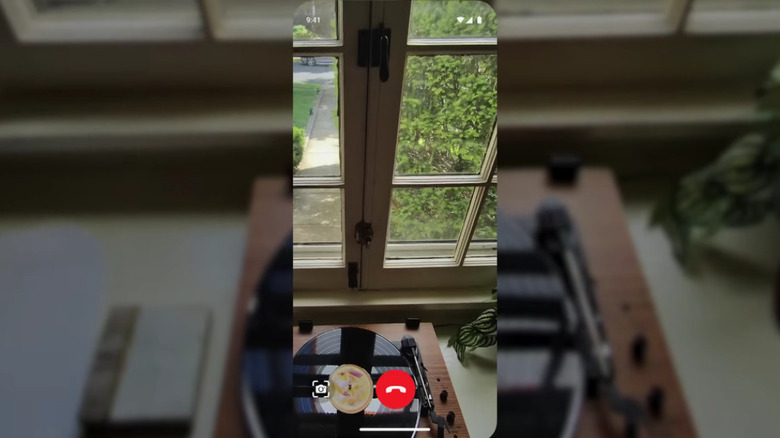

The concept behind this app, as demonstrated in Meta's introductory video above, is that it allows wearers to call a volunteer through the Be My Eyes app in their glasses. That volunteer will then be able to connect with the wearer's glasses and 'see through' them. The wearer can then describe the task they need help performing, with one example presented above being finding a specific piece of mail in a stack. The volunteer can then work with them to solve the problem. Setting up this feature involves syncing with the Be My Eyes app on a smartphone, which then allows the Meta AI glasses user to connect with a volunteer via a simple voice command or a press of the controls on the arms of the glasses.

As another key factor in accessibility, the service is available in 15 languages and in 20 countries. It's also available on smartphones, and it's a supportive breakthrough that's becoming more common around the world. In fact, in March 2026, Be My Eyes announced that its worldwide network of volunteers had grown to 10 million people and that they now serve approximately one million people. The software existed prior to its implementation in the glasses, but with them, it has become more convenient and user-friendly than ever.