This Tiny Supercomputer Fits 'Doctorate-Level' AI Into Your Pocket

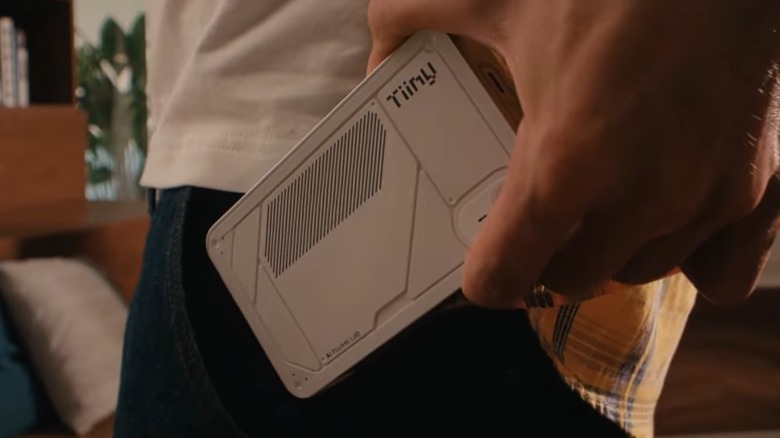

Think supercomputers, and the chances are you're thinking about a massive air-conditioned facility crammed with hardware and adorned with tens of miles of data cables. However, Tiiny AI, a US startup, claims to have created a supercomputer that doesn't need a warehouse-sized facility. Indeed, this is a supercomputer that can fit in your pocket.

Before we look at the device in question, the AI Pocket Lab, let's have a look at how such a computer could be dubbed a "supercomputer." There's no strict definition of a supercomputer, but generally, the term is used to describe the largest and most powerful computers currently operating. In other words, these are machines capable of handling workloads that are beyond the capabilities of conventional consumer hardware.

In this context, the distinction comes down to capability rather than scale. This is a device designed to run large language models (LLMs) locally, a workload that would usually require multiple GPUs at a minimum and often data center infrastructure. If the device really does fit "doctorate-level" AI into your pocket, as claimed, that would take it beyond the capability of typical consumer hardware. So, while the hardware may not look like any supercomputer you've ever imagined, the type of work it can perform would satisfy one metric generally accepted to define a supercomputer.

Okay, supercomputer definition over. Let's take a look at the device that'll allow you to carry doctorate-level AI around with you.

What's inside the AI Pocket Lab?

The trick behind the AI Pocket Lab is an unusual combination of hardware that prioritizes memory over raw processing power. Tiiny AI lists the device as pairing a 12-core ARM processor with a substantial 80GB of LPDDR5X RAM, around 48GB of which is reserved for a dedicated neural processing unit (NPU) designed specifically to handle AI workloads.

It's this configuration that enables the system to run LLMs locally, including those in the 120-billion-parameter range. The company also reports performance of up to 190 trillion operations per second (TOPS) split across the processor and the NPU, and output speeds of between 18 and 40 tokens per second. As a rough rule of thumb with most LLMs, four tokens are equivalent to about three words. At that ratio, even the low end of the claimed range works out to more than a dozen words per second, fast enough for the machine to be practical.
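To put those output speeds in more familiar terms, here is a minimal Python sketch that converts the claimed token rates into approximate words per second. It assumes the rough four-tokens-to-three-words ratio mentioned above, which varies by tokenizer and language, and the 300-word reply is a hypothetical example rather than a vendor benchmark.

```python
# Rule of thumb from the article: about 4 tokens per 3 English words.
# This ratio is an approximation and varies by tokenizer and language.
WORDS_PER_TOKEN = 3 / 4


def words_per_second(tokens_per_second: float) -> float:
    """Convert a token throughput into an approximate word throughput."""
    return tokens_per_second * WORDS_PER_TOKEN


def seconds_for_words(word_count: int, tokens_per_second: float) -> float:
    """Estimate how long a reply of `word_count` words takes to generate."""
    return word_count / words_per_second(tokens_per_second)


if __name__ == "__main__":
    # 18 and 40 tokens/s are the claimed low and high output speeds.
    # The 300-word reply length is a hypothetical chosen for illustration.
    for tps in (18, 40):
        print(
            f"{tps} tokens/s is about {words_per_second(tps):.1f} words/s; "
            f"a 300-word reply takes about {seconds_for_words(300, tps):.0f} s"
        )
```

On these assumptions, the claimed range corresponds to roughly 13.5 to 30 words per second.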

Other specifications include a 1TB PCIe 4.0 SSD, Wi-Fi and Bluetooth, three USB-C ports, and an integrated microphone. It's worth noting that this isn't a standalone computer; instead, it connects directly to a Windows or macOS host system, or it can be accessed over a network. Linux is also supported, though without a dedicated client. The developers claim it supports over fifty open-source LLMs.

While this is remarkable enough, it's worth pausing here and remembering that this is all from a device that fits in your pocket and doesn't need access to power-hungry data centers. Dimensions-wise, it's in the smartphone ballpark: it measures 5.6 inches by 3.15 inches, is just under an inch thick, and weighs 10.5 ounces.

Why local AI could matter

A good question to pose at this point is: just why does this machine exist? After all, we live in a highly interconnected world with an AI assistant now available on any smartphone. You can even run an AI chatbot locally on your iPhone.

One of the most significant aspects of the AI Pocket Lab is that it can run AI models without relying on cloud infrastructure. From a privacy perspective, this matters. Online AI tools have long attracted such concerns, and there are plenty of good reasons not to share personal information with online AI chatbots. Another point of interest is that users can access AI in environments with limited or no connectivity.

The big distinction between the Tiiny device and an AI chatbot running locally on a typical smartphone lies in the size of the AI model each can handle. The AI Pocket Lab can handle models with up to 120 billion parameters, whereas a typical offline chatbot, such as one built on the 8-billion-parameter version of Llama 3.1, works with a model roughly 15 times smaller.
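The gap between those two model sizes is easier to appreciate as memory. The Python sketch below is a back-of-envelope estimate of how much space the weights alone would need at a few common precisions, compared with the 80GB of RAM Tiiny AI lists for the device. The precisions are assumptions for illustration, since the precision the device actually uses isn't stated here, and the estimate ignores everything else that needs memory while a model runs.

```python
def weight_memory_gb(parameters: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


DEVICE_RAM_GB = 80  # total LPDDR5X RAM listed for the AI Pocket Lab

# Parameter counts quoted in the article.
MODELS = {"120B model": 120e9, "8B model": 8e9}

if __name__ == "__main__":
    for name, params in MODELS.items():
        # 16-, 8- and 4-bit weights are assumed precisions, not vendor figures.
        for bits in (16, 8, 4):
            gb = weight_memory_gb(params, bits)
            # Weights-only comparison against total RAM; real usage needs more.
            verdict = "fits" if gb <= DEVICE_RAM_GB else "does not fit"
            print(f"{name} at {bits}-bit: {gb:.0f} GB of weights, "
                  f"{verdict} in {DEVICE_RAM_GB} GB")
```

Under these assumptions, a 120-billion-parameter model only squeezes under 80GB at around 4 bits per weight, while an 8-billion-parameter model needs 16GB or less at any of the three precisions.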

The AI Pocket Lab also reflects a growing push towards edge computing, where data is processed closer to where it's generated rather than in the aforementioned electricity-guzzling data centers. For context, the AI Pocket Lab ships with a 65-watt power supply and has a rated TDP of 30 watts, figures that place it far closer to a typical laptop than to large-scale AI infrastructure.
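As a rough illustration of what that power figure could mean in practice, this short Python sketch combines the 30-watt TDP with the claimed 18 to 40 tokens per second to estimate energy per generated token. It assumes the device draws its full rated TDP while generating, which is a simplification of ours: TDP is a thermal design figure, not a measured power draw.

```python
TDP_WATTS = 30  # rated TDP quoted for the AI Pocket Lab


def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy per generated token, assuming a constant power draw."""
    return power_watts / tokens_per_second


if __name__ == "__main__":
    # 18 and 40 tokens/s are the claimed low and high output speeds.
    # Running at full TDP throughout is an assumption, not a measurement.
    for tps in (18, 40):
        energy = joules_per_token(TDP_WATTS, tps)
        print(f"{tps} tokens/s at {TDP_WATTS} W: {energy:.2f} J per token")
```

On that assumption, each token would cost somewhere between about 0.75 and 1.67 joules.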