The Brains Behind Google's AI Chips Are Starting A Rival Business

A stealth startup of former Google engineers is working on an artificial intelligence chip that could outshine their former employer's much-vaunted Tensor Processing Unit. Dubbed Groq, the clandestine company has shared little of its plans or roadmap, though recent filings suggest more than $10m in funding has already been secured. A claimed eight of the first ten members of the original Google TPU team are now working there.

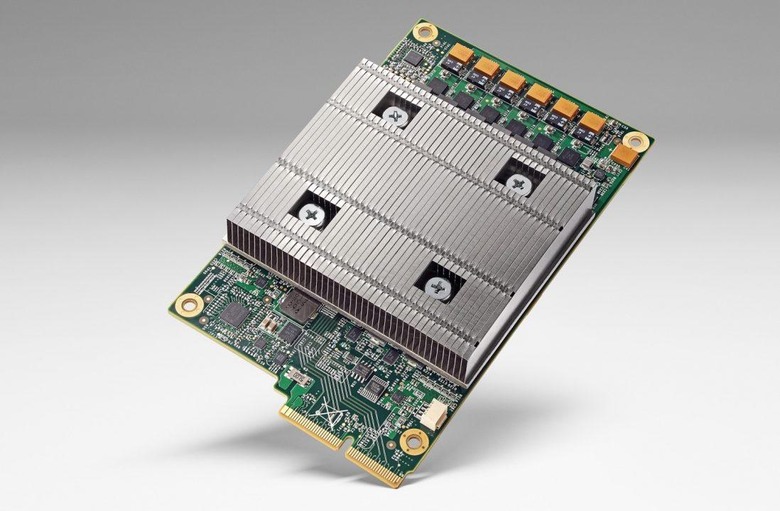

The TPU, or Tensor Processing Unit, is Google's homegrown AI chip. The company began working on it several years ago, in the hope of making neural networking much more practical at scale. While machine learning isn't new – Intel is working on enabling it with CPUs, while NVIDIA is coming at it from the opposite direction, with GPUs, among others – it's computationally very intensive.

That's a problem when you're running an operation on the scale that Google is. Neural networking and artificial intelligence are seen as essential to improving everything from search result accuracy, to computational image processing, through to spam and malware detection. AI also has a home in the Google Assistant, which is at the heart of standalone devices like Google Home, but also features on Android smartphones and more.

The result was the TPU, a chip built to accelerate TensorFlow, Google's open-source machine learning framework that opens neural networking up to everyone. It was developed in part by Jonathan Ross, a former Google engineer who is working with investor Chamath Palihapitiya on the startup now known to be Groq. Also on the roster are a claimed eight of the first ten people from the TPU project.

"It's too early to talk specifics," Palihapitiya told CNBC, "but we think what they're building could become a fundamental building block for the next generation of computing." Two SEC filings from last year confirmed both the name of the company and its initial funding. According to those documents – which name Palihapitiya, Ross, and former Google X engineer Douglas Wightman – Groq raised $10.3m.

While taking on Google – not to mention Intel, AMD, and NVIDIA, among others – in developing a chip is a sizable challenge, the potential upside if Groq's technology proves successful is huge. Earlier this year, in a report on the TPU's performance in real-world trials, Google claimed the chip had proved 10x more efficient at processing than GPUs. That has implications for power consumption, chip cost, and more.


How far we are from seeing the fruits of Groq's labors is unclear, and it seems likely that, if the company's plans succeed, it will be a tempting acquisition target for some of the bigger names in chip development. AI is big business at the moment: only this week, Amazon opened up the artificial intelligence that powers its Alexa assistant to third-party developers and device manufacturers. ARM is positioning its low-power cores as ideal for AI, and Bosch and NVIDIA are doing something similar for the brains of self-driving vehicles.

SOURCE CNBC