My Wallet Is Open, Google, Now Hand Over Project Glass

Project Glass has opened my eyes and my wallet: Google, please, come help yourself to my credit card. The much-rumored wearable augmented reality system has emerged from the Google[x] skunkworks and it's even more than we hoped for. No clunky headset like a bad pair of swollen sunglasses, but a sleek slice of transparent display with just enough Star Trek: TNG hints to keep the geeks happy. With only a concept video and a handful of rumors to go on, though, plenty of questions remain. Google hasn't talked technology regarding Project Glass, focusing instead on the potential user experience, but there's enough here to slot together a few suggestions.

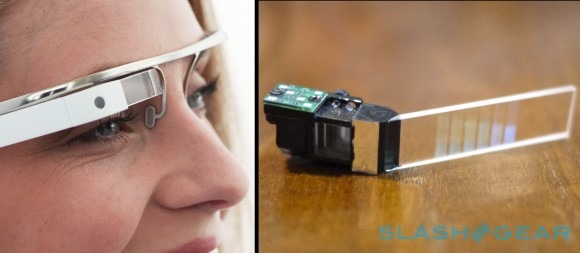

The eyepiece itself is a pretty specialist part: it takes some serious know-how to produce a transparent display that can float computer graphics on top of a real-world view without causing headaches or skewing problems. The obvious candidate is the Lumus OE-31 I played with last month; Google has released no extreme close-ups of the screen, so it's difficult to see if there's any sign of the segmented vertical banding used to refract sections of the image into the eye. Lumus is quiet on the matter, and I've no doubt they'll stick with the same "we can't comment on any OEM partners" line they gave us before.

Still, if it's the OE-31, then that means nHD 640 x 360 resolution. Google seems to be positioning it slightly above the standard line of sight, at least going by its model photos – Lumus' prototypes, in contrast, put the display directly in front of the eye – and the concept video seems to suggest a slight upward-glance movement is used to call down the icons. That implies eye-tracking, though there's no sign of a camera for that as far as I can see.

Exactly how much intelligence is in the eyewear itself is also unclear. From talks with wearable display manufacturers like Lumus and Vuzix, it's clear that there's a considerable compromise involved if you want to make a fully self-contained device: you need to accommodate sufficient processing power, not to mention batteries to keep it going. Lumus' OEMs are supposedly split between preferring tethered – i.e. with a wire going to a separate battery/processing pack – and wireless options; without more comprehensive photos of Project Glass we don't yet know which way Google is leaning.

Still, given the diminutive scale of the headset – at least, the one we've seen so far; Google supposedly has several designs in testing – a tethered approach seems almost certain. The lightweight metal headband and slimline eyepiece section would have little room for anything other than the transparent display itself and the camera, along with maybe a button or two.

More likely, then, is that a separate device – probably an Android phone – is doing the heavy lifting, with either it or a standalone battery pack keeping Project Glass powered up. Data could be transmitted by that same wired connection or, for more flexibility, over a separate wireless link: Bluetooth 4.0 perhaps, for its low power consumption and boosted data rates. That would make it easier for Project Glass to be used as a display for multiple devices, too, rather than demanding the video input be repeatedly unplugged and replugged.

A regular Android device would also give Google the greatest flexibility in software. The obvious approach is a general video output app which can layer augmented reality data on top of information from other apps running on the phone – Google Maps, Latitude, Google+, etc. – with some APIs for third-party services to lace their functionality in too. We've seen a similar approach from Sony recently, with its SmartWatch, using a main hub app to control the wrist-worn microdisplay and then plugins distributed through the Android Market to add capabilities.

On that front, Google has already done much of the software work necessary, at least on the backend. Google Goggles does object recognition, Google Maps has all the navigation data and, with Latitude, handles person tracking. Google+ brings its social networking and Hangouts, while we've already seen voice recognition baked into Android.

Left to do is pull that all together and – most vitally – make it usable while mobile. Wearables demand a new interaction paradigm, even more refined than the glanceable data today's smartphones are beginning to offer with widgets and Live Tiles. Even if you only look at your smartphone screen for a few seconds, that's a few seconds devoted to a single object: when the whole world in front of you has the potential to be a screen, there's a lot more to consider. Avoiding too much distraction, particularly of a potentially dangerous kind, is likely to be uppermost in the minds of Google's UI designers. This is, after all, augmented reality, not dominated reality.

The benefits, if they get it right, could be astounding, however. The internet has already become our go-to encyclopedia, source of entertainment, social hub and workplace, among other things, but delving into it has generally been a process that distracts us from the real world. Augmented reality has always promised to reverse that, injecting information into our lives, but systems to date have been underwhelming: holding up a phone and peering "through" its display is hardly a seamless integration of real and virtual.

With Google I/O 2012 fast approaching, it seems almost certain that Google will use the developer event to talk more about Project Glass. Who knows, maybe there'll be prototypes handed out, just as the search company has distributed Android phones and tablets in previous years. If so, expect those to fetch even larger sums on eBay shortly after than the limited-edition Galaxy Tab 10.1 did.

I'll be watching those auctions closely, if that's the case. In a world saturated with "the next big thing in smartphones" and "the tablet to kill them all" it's tough to find something legitimately exciting and new: Project Glass has the potential to be just that. I'd certainly open up my wallet for it, and I've a suspicion there are plenty of others who will think the same.