Meet The Chip That Wants To Make Your Smartphone An SLR

Mobile chips don't necessarily need to get faster; they just need to get smarter. At least, that's what video processing specialist Movidius believes, and it's launching a highly focused vision processor, Myriad 2, to prove it. The follow-up to the original Myriad 1 co-processor – found inside Google's Project Tango 3D-scanning tablet – Myriad 2 promises a 20x boost in performance at computational photography tasks such as real-time mapping and 360-degree panoramic video, all with the eventual goal of making the cameras we carry as clever as human vision. I caught up with Movidius CEO Remi El-Ouazzane to find out why you might want Myriad 2 inside your next smartphone or wearable.

Both generations of Myriad are examples of very dedicated silicon, intended to focus on a specific task and do it far more efficiently than a regular CPU or GPU might. In Myriad 2's case, that task is vision processing and computational photography, the sort of graphics crunching that a traditional video card would need to be plugged into mains electricity to match.

According to El-Ouazzane, Myriad 2 arrives at the start of a "third imaging revolution." The first was the ability to capture stills and video on film; the second, the switch to digital. Now, we're entering "the next era of cameras" where processing and understanding are as important as – or more important than – just preserving the exact moment.

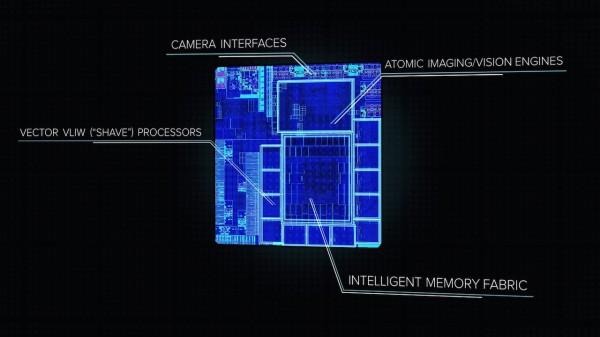

To do that, Movidius splits its chip into two distinct parts. On one side, there are twelve "SHAVE" 128-bit processors that have been optimized for vision processing workloads; each runs at 600MHz – with the potential for overclocking – versus the 180MHz versions in the first-gen chip.

Paired with them is a set of configurable hardware accelerators Movidius calls SIPP filters (Streaming Inline Processing Pipeline filters), which can be used to run preconfigured vision processing tasks like fusing together data from different types of cameras (say, IR and RGB) or stitching multiple video feeds into a 360-degree view.


Combined with a pair of 32-bit RISC processors that handle chip management, Myriad 2's cores offer the best of both worlds, El-Ouazzane explains. The SHAVE side offers raw compute which different OEMs can program in different ways; each has its own way of delivering the same result, the Movidius CEO points out, and so Myriad 2 gives them a scratchpad in which to do that.

Meanwhile, the SIPP filters can handle more common processing tasks in tandem, while the centralized memory architecture means both sides of the chip can work on the same data simultaneously. That cuts down on the delay introduced when data is shuffled around so that different parts of a processor can work on it.


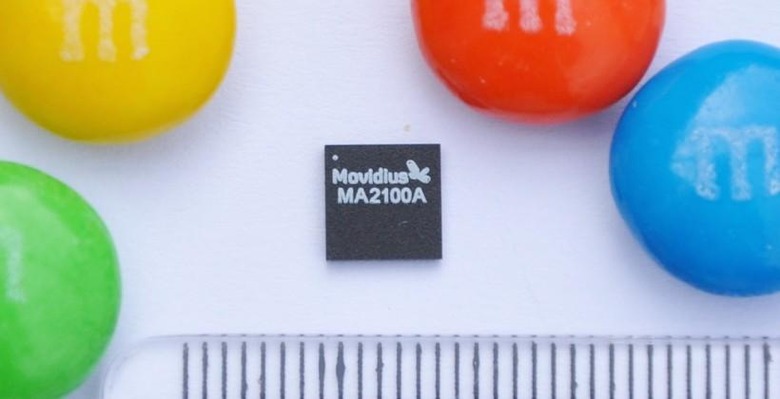

The result is a chip just 6.5mm square and 1mm thick, which can nonetheless handle the input from twelve 13-megapixel cameras at 48fps at the same time, or 4K resolution video at 60fps, while sipping less than 0.5W under load. El-Ouazzane says that's at least 10x less power than a comparable GPU would demand.
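To put those figures in perspective, here is a rough back-of-the-envelope calculation of the raw pixel rates involved. It is only a sketch: it assumes "13-megapixel" means exactly 13 million pixels and that "4K" means 3840x2160, neither of which Movidius specified, and the function name is purely illustrative.

```python
# Back-of-the-envelope pixel rates for the workloads quoted above.
# Assumptions (not from Movidius): 13MP = 13,000,000 pixels; "4K" = 3840x2160.

MEGAPIXEL = 1_000_000

def pixel_rate(pixels_per_frame, fps, streams=1):
    """Raw pixels per second for `streams` identical camera feeds."""
    return pixels_per_frame * fps * streams

multi_camera = pixel_rate(13 * MEGAPIXEL, fps=48, streams=12)
uhd_video = pixel_rate(3840 * 2160, fps=60)

print(f"12 x 13MP @ 48fps: {multi_camera / 1e9:.2f} gigapixels/s")
print(f"4K @ 60fps:        {uhd_video / 1e9:.2f} gigapixels/s")
```

These are raw sensor-side numbers only; they say nothing about how much work each pixel requires once it reaches the chip.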

What can all that power be used for? Movidius has some ideas, and Project Tango is just the start. El-Ouazzane is confident that Myriad 2 is just what smartphones need in order to take their camera quality to SLR levels, including things like autofocus speed and low-light performance.

Meanwhile, standalone cameras could use Myriad 2 to understand the combined data from CMOS arrays – such as those Pelican Imaging is experimenting with – to push past the sensor limitations manufacturers are finding themselves running into. Cameras could offer higher-resolution depth sensing, using techniques like time-of-flight (aka scannerless LIDAR) that have so far been too computationally intensive for consumer devices.
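For a sense of what time-of-flight sensing involves, the core idea is that distance follows from how long light takes to travel to an object and back: d = c x t / 2. The snippet below is a minimal illustration of that relationship only. The function name and the sample timing are hypothetical, and many real sensors infer the delay from phase shifts measured per pixel rather than by timing a single pulse.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre

def tof_distance_m(round_trip_seconds: float) -> float:
    """Distance to a target given the round-trip travel time of light."""
    # The light travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2

# Hypothetical example: a 10-nanosecond round trip is roughly 1.5 metres.
print(f"{tof_distance_m(10e-9):.2f} m")
```

The same formula shows why the technique is demanding: telling apart objects one centimetre closer or farther means resolving round-trip differences of only tens of picoseconds, for every pixel in every frame.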


Not only seeing, but understanding what's going on in front of a camera opens the door for more unusual possibilities, however. Visual sensing is the last frontier in devices, El-Ouazzane suggests, now that they've already tackled the low-hanging fruit of audio and movement.

So, in addition to the 3D modeling and scanning Google is playing with, there's visual searching, as Amazon is doing with Firefly on the Fire Phone, along with indoor navigation without needing GPS or Bluetooth beacons, gesture input, facial recognition, and object detection.

A wearable camera could be aware of location and context, taking advantage of Myriad 2's grunt to shift content processing into real-time rather than waiting until later. Meanwhile, social robots and drones could use the vision chip to deliver true autopilot and user-recognition.

The challenge, of course, is persuading device manufacturers to give space to an extra chip. The addition to the bill of materials is in single digits, El-Ouazzane told me. Movidius is also trying to make integrating Myriad 2 as straightforward as possible, with a flexible MDK (Myriad Development Kit) that includes the regular software tools along with sample code and libraries for ISP, computer vision, depth sensing, and such.

With that, and the modular organization of the chip's structure, OEMs will be able to create a combined pipeline of custom SHAVE routines and preconfigured SIPP filter shortcuts. The MDK handles compiling the pipeline in the most efficient way, and components can be interchanged to test out tweaks and improvements and then recompiled in 10-15 minutes.
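The idea of mixing custom routines with preconfigured filters in one interchangeable pipeline can be sketched in a few lines of Python. This is purely conceptual and is not the MDK's API: `Pipeline`, `custom_denoise`, `preset_threshold`, and the toy list-of-pixels "frame" are all hypothetical stand-ins, with the custom stage playing the role of a SHAVE routine and the preset stage the role of a SIPP filter.

```python
from typing import Callable, List

Frame = List[int]                 # toy "frame": a flat list of 8-bit pixel values
Stage = Callable[[Frame], Frame]  # every stage maps a frame to a frame

def custom_denoise(frame: Frame) -> Frame:
    """Hypothetical custom stage: 3-tap moving average (edge pixels repeated).

    Assumes a non-empty frame.
    """
    padded = [frame[0]] + frame + [frame[-1]]
    return [(padded[i] + padded[i + 1] + padded[i + 2]) // 3
            for i in range(len(frame))]

def preset_threshold(frame: Frame) -> Frame:
    """Hypothetical preconfigured stage: binarize at mid-grey."""
    return [255 if p >= 128 else 0 for p in frame]

class Pipeline:
    """Runs stages in order; any stage can be swapped out by position."""

    def __init__(self, stages: List[Stage]):
        self.stages = list(stages)

    def replace(self, index: int, stage: Stage) -> None:
        self.stages[index] = stage

    def run(self, frame: Frame) -> Frame:
        for stage in self.stages:
            frame = stage(frame)
        return frame

raw = [0, 0, 255, 0, 0, 255, 255, 255]

pipeline = Pipeline([custom_denoise, preset_threshold])
smoothed = pipeline.run(raw)       # the isolated bright pixel is filtered out

pipeline.replace(0, lambda f: f)   # swap the custom stage for a pass-through
unsmoothed = pipeline.run(raw)     # the isolated bright pixel survives
```

Because every stage shares the same frame-in, frame-out shape, trying a tweak means replacing one element of the list and rerunning, which mirrors the interchangeable-component workflow described above.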


On the front-end, Movidius has worked on an OpenCL system, and El-Ouazzane says there are already 20-30 companies developing on Myriad 2. There's a Qualcomm Snapdragon-powered reference board with a 13-megapixel sensor, stereo vision from a pair of Sony CMOS sensors, an IMU for sensor fusion, and optional plug-in boards which can add things like thermal imaging.

More exciting for independent coders wanting to get onboard the computational imaging train, however, is Movidius' eventual plan for an Arduino board. "We want to give the tools to make vision processing the new paradigm," El-Ouazzane says.

When might we see the first fruits of all this Myriad 2 development? El-Ouazzane teased a "big name" partnership which will launch a product within the next 12 months, though he wouldn't be drawn on who that was. Still, LG has already thrown its hat into the ring, confirming it will release a Project Tango device in 2015. At least one OEM has plans for tablets and phones with the same camera performance as DSLRs, El-Ouazzane promises.

Already, we've seen cameras that can restyle humble selfies into the handiwork of master photographers, cameras that can turn back the clock on settings as fundamental as focus, and even chatter of possible collaboration between Canon and PureView.

Back when Google first demonstrated what it had been working on with Tango, there were some who questioned the point of 3D mapping. As the Google ATAP team responsible for the current devices pointed out, a new type of computational vision is going to require a new set of "killer apps" and use-cases, and we're still in the early days of such development.

With that in mind, Movidius isn't quite sure what Myriad 2 will end up doing, but whether your interest extends only as far as Instagram, or you're a professional shutterbug, the possibilities the chip represents should be exciting.