iPhone Camera Upgrade Tipped With iOS 11 SmartCam

The feature-leaking fallout from Apple's inadvertent iOS 11 goof continues, with suggestions that the iPhone camera is about to get a whole lot smarter. Apple surprised – and then delighted – code-diggers last week, when what appeared to be an internal version of iOS 11.0.2 intended for HomePod test units was mistakenly pushed out to external developers. The glee at finding unannounced elements of the upcoming smart speaker was quickly eclipsed by a gush of news about the upcoming iPhone 8.

For instance, the code revealed graphics of the new iPhone, information about its biometric security, and how it will implement infrared cameras. Apple – after an atypical delay in its reaction time – eventually pulled the errantly published firmware, but the genie was well and truly out of the bottle. Since then, developers with access to the original version have been digging deeper, and that has unearthed some interesting updates seemingly in the pipeline for the iPhone's camera.

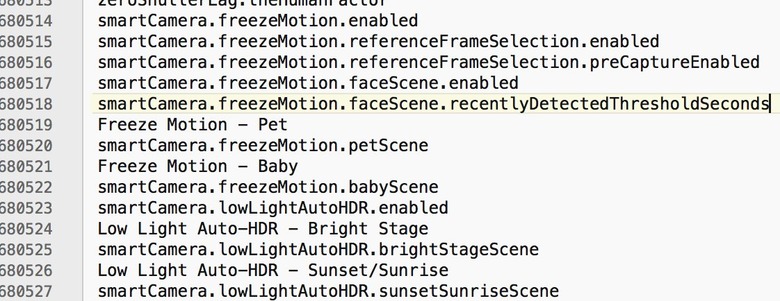

Developer Guilherme Rambo spotted the so-called "SmartCam" feature in the HomePod firmware, though it's clearly related to photography rather than the Siri-equipped streaming speaker. Going by the references, it's a way for the camera to automatically adjust its settings according to the type of scene it's faced with. That ranges from bright light through sunrises and snow scenes, to more specific situations like photos of pets, babies, and even documents.

iOS 11 (or the next iPhone) will have something called SmartCam. It will tune camera settings based on the scene it detects pic.twitter.com/7duyvh5Ecj

— Guilherme Rambo (@_inside) August 2, 2017
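To make the idea concrete, here is a minimal sketch of how scene-based tuning could work in principle: a classifier scores the scene, and the most confident label selects a preset. It is not based on Apple's code. `CameraSettings`, `PRESETS`, `settings_for_scene`, the label strings, the threshold, and every numeric value are assumptions invented for illustration; only the scene types themselves come from the leaked references.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraSettings:
    """Hypothetical settings bundle for illustration; not an Apple API."""
    exposure_bias_ev: float    # exposure compensation, in stops
    prefer_fast_shutter: bool  # favour short exposures for moving subjects
    boost_contrast: bool       # e.g. to keep printed text legible


# Used when no scene is detected with enough confidence.
DEFAULT = CameraSettings(0.0, False, False)

# Presets keyed by the scene types mentioned in the article.
# All values are made up for this example.
PRESETS = {
    "bright_light": CameraSettings(-0.3, False, False),
    "sunrise": CameraSettings(-0.7, False, False),
    "snow": CameraSettings(0.7, False, False),
    "pet": CameraSettings(0.0, True, False),
    "baby": CameraSettings(0.0, True, False),
    "document": CameraSettings(0.3, False, True),
}


def settings_for_scene(scores, threshold=0.6):
    """Pick a preset from classifier scores (label -> confidence in [0, 1]).

    Returns DEFAULT when there are no scores, when the best score is below
    the threshold, or when the best label has no preset.
    """
    if not scores:
        return DEFAULT
    label, confidence = max(scores.items(), key=lambda item: item[1])
    if confidence < threshold:
        return DEFAULT
    return PRESETS.get(label, DEFAULT)
```

In this sketch, a frame scored as `{"snow": 0.9, "pet": 0.1}` would select the snow preset, while an ambiguous frame falls back to the defaults rather than risk applying the wrong adjustments.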

It seems to be Apple's next big implementation of machine learning, though it's not the firm's first time using such technology when it comes to photography. The Photos app, for instance, uses machine learning to identify what's in each photo after it's been taken: that way, you can search not only for people but for things like cats or landmarks. Notably, Apple does all of that processing on-device, rather than uploading everything to the cloud first as some rival systems do.

Scene-type detection isn't new, though it's primarily been used in standalone cameras. The "Auto" mode on most point-and-shoots will try to figure out the best combination of settings for whatever conditions it's faced with, with results that can vary. Given how quickly dedicated cameras have been eclipsed in general use by smartphones, a smarter auto mode on the iPhone is likely to reach far more people.

Still unclear at this stage is whether all camera-equipped devices eligible for iOS 11 will get to benefit from SmartCam. Apple is expected to update the iPhone 7, iPhone 7 Plus, iPhone 6S, and older models back to the iPhone 5s with iOS 11 later this year, but it's possible that only the new iPhones – tentatively, and unofficially, referred to as the iPhone 8, iPhone 7S, and iPhone 7S Plus – will get the cleverer camera.