Confusing Autonomous Cars Might Be Worryingly Easy

Confusing a self-driving car could be as easy as applying some stickers to a road sign, researchers have warned. With driverless projects hunting for ways to cut costs and reduce reliance on expensive sensors such as LIDAR, many have turned to camera-based alternatives to understand the world around a vehicle. Now, researchers at the University of Washington have found that minor changes to road signage might be enough to throw an autonomous car off course.

As the team explains, it's easy to create mayhem by removing signs altogether, but such a heavy-handed approach can be quickly spotted and addressed. Arguably more dangerous is a "robust and subtle" modification that reliably fools a machine-learning system, such as the brain of a self-driving car, yet isn't immediately recognized as dangerous by road crews and police.

"If an attacker can physically and robustly manipulate road signs in a way that, for example, causes a Stop sign to be interpreted as a Speed Limit sign by an ML-based vision system," they write, "then that can lead to severe consequences."

They came up with a set of stickers that could be produced with a regular printer and the supplies you might find at your local Staples, but which, when applied in the right arrangement to a road sign, could be as much as 100 percent effective at fooling a smart car's brain. Importantly, anyone unaware of the possible danger could easily dismiss the applied stickers as everyday graffiti-style vandalism.
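The researchers' sticker-generation algorithm isn't described here, but the underlying idea, that many small, targeted changes can flip a classifier's answer, can be illustrated with a much simpler digital attack. The sketch below uses the fast gradient sign method (FGSM) against a hypothetical two-class linear "sign classifier" with invented weights; it is not the researchers' method, and it ignores the physical robustness their stickers needed.

```python
import numpy as np

# Hypothetical linear "sign classifier" over a flattened 1,000-pixel image.
# A positive score means "Stop", otherwise "Speed Limit". All values are invented.
N_PIXELS = 1000
w = np.where(np.arange(N_PIXELS) % 2 == 0, 0.1, -0.1)  # toy weights
b = 0.0                                                # toy bias


def score(x: np.ndarray) -> float:
    return float(x @ w + b)


def classify(x: np.ndarray) -> str:
    return "Stop" if score(x) > 0 else "Speed Limit"


def fgsm_lower_score(x: np.ndarray, eps: float) -> np.ndarray:
    """One FGSM step that pushes the score down.

    For a linear model the gradient of the score with respect to x is w,
    so the step is -eps * sign(w): every pixel moves by at most eps.
    """
    return x - eps * np.sign(w)


# Toy "Stop sign" image: mid-grey pixels nudged slightly in the direction of w.
x = 0.5 + 0.02 * np.sign(w)
x_adv = fgsm_lower_score(x, eps=0.05)

print(classify(x), "->", classify(x_adv))  # Stop -> Speed Limit
print("largest per-pixel change:", np.max(np.abs(x_adv - x)))
```

Each pixel shifts by only 0.05, yet those small changes add up across all 1,000 pixels to flip the decision. The physical attack described above instead confines its changes to sticker-shaped regions that must also keep working as viewing conditions change.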

However, the exploit could have dangerous applications well before fully autonomous cars reach the roads en masse. Several automakers already use camera-based systems to read road signage, spotting temporary speed limits and other details. That data is usually combined with information from the navigation database.

Few automakers currently rely solely on this camera-based information to control mission-critical elements of the vehicle's behavior. Some adaptive cruise control systems, such as Mercedes-Benz's DISTRONIC, can automatically adjust the set speed down or up as the car moves between roads with different limits, but the camera's reading is generally checked against the onboard database. Still, as vision-based systems become more prevalent, this kind of attack could become a much greater threat.
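One plausible way to check a camera reading against the database is sketched below. The names, the 20 km/h threshold, and the policy itself are hypothetical; this does not describe DISTRONIC or any automaker's actual logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SpeedLimitInputs:
    camera_kph: Optional[int]  # limit read from a roadside sign, if any
    map_kph: Optional[int]     # limit stored in the navigation database, if any


def choose_speed_limit(inputs: SpeedLimitInputs,
                       max_increase_kph: int = 20) -> Optional[int]:
    """Hypothetical fusion rule for a cruise-control speed limit.

    Returns the limit to use, or None to mean "don't adjust automatically".
    """
    cam, mapped = inputs.camera_kph, inputs.map_kph
    if cam is None:
        return mapped            # no sign seen: use the database (may be None)
    if mapped is None:
        return None              # nothing to cross-check against: don't act
    if cam <= mapped:
        return cam               # lower limit (e.g. roadworks): accept it
    if cam - mapped <= max_increase_kph:
        return cam               # small, plausible increase: accept it
    return mapped                # implausible jump: distrust the camera


print(choose_speed_limit(SpeedLimitInputs(camera_kph=80, map_kph=100)))   # 80
print(choose_speed_limit(SpeedLimitInputs(camera_kph=130, map_kph=50)))   # 50
print(choose_speed_limit(SpeedLimitInputs(camera_kph=60, map_kph=None)))  # None
```

Accepting lower limits but rejecting large jumps upward is a conservative choice: a tampered sign can still slow the car down, but it can't talk it into a much higher speed. A check like this only helps where the database is accurate and covers the road in question.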

Adding to the uncertainty is the fact that seemingly no two autonomous car projects agree on exactly what hardware is required to safely reach Level 4 and Level 5. Under SAE International's widely accepted definitions of driving automation, those are the levels at which the car takes over control entirely: within a defined set of operating conditions at Level 4, and in all conditions at Level 5. While the definitions may be shared, the route to reaching such functionality varies widely.

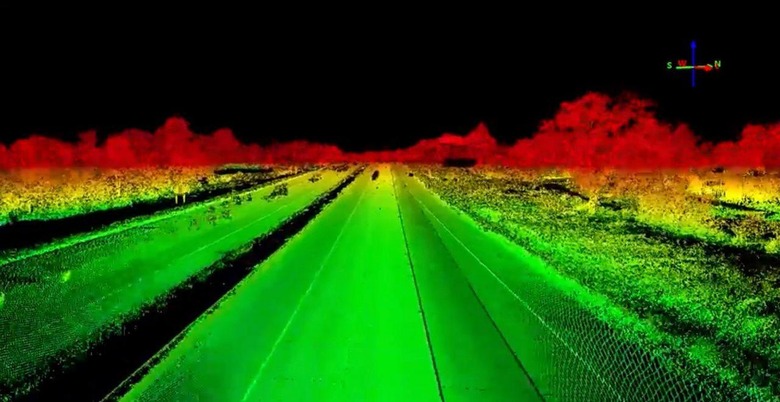

Many developers favor a system that includes LIDAR, effectively a laser rangefinder that can build real-time 3D maps of the environment around the vehicle. Although LIDAR is highly accurate at that mapping, it's also very expensive. Several companies are working on reducing that cost, but LIDAR can still be the single biggest outlay in a self-driving car's hardware.
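The "laser rangefinder" principle can be sketched in a few lines: the round-trip time of a light pulse gives the distance, and the direction the beam was pointing places that distance in 3D. This is a simplified time-of-flight model with a hypothetical function name; real sensors also handle calibration, multiple returns per pulse, and vehicle motion, all of which this sketch ignores.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def lidar_return_to_point(round_trip_s: float, azimuth_rad: float,
                          elevation_rad: float) -> tuple[float, float, float]:
    """Convert one laser return into an (x, y, z) point in metres.

    The pulse travels out and back, so range = c * t / 2; the beam angles
    then place that range in 3D (spherical -> Cartesian coordinates).
    """
    r = SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0
    x = r * math.cos(elevation_rad) * math.cos(azimuth_rad)
    y = r * math.cos(elevation_rad) * math.sin(azimuth_rad)
    z = r * math.sin(elevation_rad)
    return (x, y, z)


# A return arriving 200 ns after the pulse, straight ahead: about 30 m away.
point = lidar_return_to_point(200e-9, azimuth_rad=0.0, elevation_rad=0.0)
print(point)
```

Repeating this for many beams as the sensor sweeps around the car yields the point cloud from which the 3D map is built.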

That has pushed some projects to look for ways around LIDAR. Tesla, notably, has said it believes its current Autopilot gen-2 hardware, which comprises cameras, radar, and other sensors but no LIDAR, is sufficient to one day deliver Level 5 autonomous driving. Other semi-autonomous systems, such as Cadillac's Super Cruise, which will launch later this year, rely on LIDAR-mapped data but don't require individual vehicles to carry such sensors, using upgraded GPS tracking instead.

The researchers conclude that much more attention is needed on how physical infrastructure could have an outsized impact on increasingly machine-driven cars. For companies working on autonomous cars, though, the challenge seems to be delivering the safety of system redundancy while keeping the overall cost within reach of the mass market.