WhatsApp Blasts "Very Concerning" Apple Child Abuse "Surveillance" Tech [Update]

WhatsApp has criticized Apple's new system for scanning photos for child sexual abuse material as "very concerning," following the unexpected announcement that the Cupertino firm would use AI to spot illegal images and more. Revealed yesterday, the new child safety features will mask sensitive photos shared through Messages, in addition to detecting known abuse imagery uploaded to iCloud Photos.

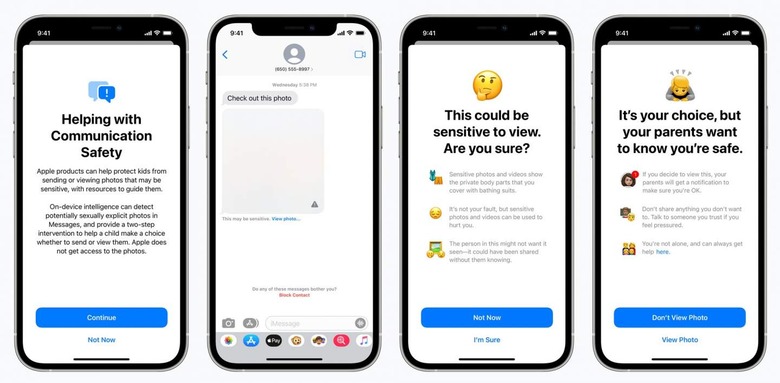

"This program is ambitious, and protecting children is an important responsibility," Apple said of the announcement. For younger users of iPhone and other Apple devices, it'll mean image analysis is used to spot any inappropriate pictures being either sent or received.

Rather than being displayed in the chat interface, such an image will be blanked out by default with a warning that "this may be sensitive." The image analysis takes place on the device itself, Apple pointed out, as a nod to individual privacy. Still, while protecting children is widely acknowledged as a good thing, not everyone is impressed with how Apple is electing to do it.

WhatsApp, for instance, has been outspoken in its disagreement with the system. "This approach introduces something very concerning into the world," Will Cathcart, head of WhatsApp, told the FT. "This is an Apple-built and operated surveillance system that could very easily be used to scan private content for anything they or a government decides it wants to control. It's troubling to see them act without engaging experts."

Despite calls from some for broader application of such a system on other messaging platforms, Cathcart was insistent that the Facebook-owned app would not be following suit. "We will not adopt it at WhatsApp," he confirmed.

Among the concerns is that, while the technology may begin as a child protection system, in time it could be co-opted by law enforcement for other purposes. That might include monitoring dissidents and protestors in countries with authoritarian governments. Currently, Messages – like WhatsApp – is end-to-end encrypted, meaning that even the company itself cannot see the content that's being shared.

This new system won't change that. Instead, Apple will use a database of known Child Sexual Abuse Material (CSAM) to create image hashes, which can be compared against photos on users' devices themselves. The company claims there is less than a one-in-one-trillion chance per year that a given account could be mistakenly flagged as sharing the content.
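To make the idea of matching by hash more concrete, here is a minimal sketch in Python. It is not Apple's implementation: Apple's published design uses its own perceptual hash and cryptographic matching, which this sketch does not attempt to reproduce. The `average_hash` function, the tiny 2x2 "images," and the `max_distance` parameter are all illustrative assumptions, chosen only to show how a device could compare a photo against a list of known hashes without the list containing the images themselves.

```python
from typing import Iterable, List

# Assumption: an "image" is a small grid of grayscale values (0-255),
# already downscaled. Real systems work on actual image files.
Pixels = List[List[int]]


def average_hash(pixels: Pixels) -> int:
    """Toy perceptual hash: one bit per pixel, set if brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def matches_known_hash(
    pixels: Pixels, known_hashes: Iterable[int], max_distance: int = 0
) -> bool:
    """True if the image's hash is within max_distance bits of any known hash."""
    image_hash = average_hash(pixels)
    return any(
        hamming_distance(image_hash, known) <= max_distance
        for known in known_hashes
    )


# Hypothetical data: one "known" image, a slightly altered copy, and an
# unrelated image.
known_image = [[0, 255], [255, 0]]
slightly_altered = [[10, 250], [245, 5]]
unrelated = [[255, 255], [0, 0]]

# The device only needs the hashes, not the known images themselves.
known_hashes = {average_hash(known_image)}

print(matches_known_hash(slightly_altered, known_hashes))  # True
print(matches_known_hash(unrelated, known_hashes))         # False
```

A perceptual hash like this toy one is designed so that small changes to an image leave the hash unchanged or nearly so, which is also why critics ask how often unrelated images might collide.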

Siri and Search on Apple devices are also being updated, and will be able to give more information on how to deal with potentially dangerous situations. The new features will be rolling out as part of iOS 15, iPadOS 15, watchOS 8, and macOS Monterey later in 2021.

Update: In a Twitter thread, Cathcart expanded on his concerns with the Apple system:

"I read the information Apple put out yesterday and I'm concerned. I think this is the wrong approach and a setback for people's privacy all over the world. People have asked if we'll adopt this system for WhatsApp. The answer is no.

Child sexual abuse material and the abusers who traffic in it are repugnant, and everyone wants to see those abusers caught.

We've worked hard to ban and report people who traffic in it based on appropriate measures, like making it easy for people to report when it's shared. We reported more than 400,000 cases to NCMEC last year from WhatsApp, all without breaking encryption.

Apple has long needed to do more to fight CSAM, but the approach they are taking introduces something very concerning into the world. Instead of focusing on making it easy for people to report content that's shared with them, Apple has built software that can scan all the private photos on your phone — even photos you haven't shared with anyone. That's not privacy.

We've had personal computers for decades and there has never been a mandate to scan the private content of all desktops, laptops or phones globally for unlawful content. It's not how technology built in free countries works.

This is an Apple built and operated surveillance system that could very easily be used to scan private content for anything they or a government decides it wants to control. Countries where iPhones are sold will have different definitions on what is acceptable.

Will this system be used in China? What content will they consider illegal there and how will we ever know? How will they manage requests from governments all around the world to add other types of content to the list for scanning?

Can this scanning software running on your phone be error proof? Researchers have not been allowed to find out. Why not? How will we know how often mistakes are violating people's privacy?

What will happen when spyware companies find a way to exploit this software? Recent reporting showed the cost of vulnerabilities in iOS software as is. What happens if someone figures out how to exploit this new system?

There are so many problems with this approach, and it's troubling to see them act without engaging experts that have long documented their technical and broader concerns with this.

Apple once said "We believe it would be in the best interest of everyone to step back and consider the implications ..."

..."it would be wrong for the government to force us to build a backdoor into our products. And ultimately, we fear that this demand would undermine the very freedoms and liberty our government is meant to protect." Those words were wise then, and worth heeding here now."