Volvo Scores An Autonomous Car Soundtrack To Settle Your Stomach

The whirr of electric motors is increasingly familiar as we shift away from internal combustion, but autonomous vehicles may need a soundtrack of their own, a new project by Volvo and partners concludes. Focusing on how passengers in self-driving cars will build trust in autonomous systems, as well as ways to use sound to avoid motion sickness, the two-year project has come up with a recipe for a "sonic soul" that could make future transportation feel less alien.

Right now, sound tech in cars generally falls into one of three categories. Most familiar are the audio systems we use for music; a more recent addition has been active noise cancelation that some vehicles rely on to help oust road and wind noise. Most controversial is the noise enhancement that's sometimes piped into the cabin to emphasize engine sounds, with the assumption that because of it we'll think the car is sportier.

Fully autonomous vehicles (AVs), however, involve a different kind of interaction with the driver. Indeed, that person is no longer the driver at all: with traditional controls unlikely, Level 4 and Level 5 AVs will merely require a destination be set, and then everyone onboard is a passenger.

Most of the technical effort going into self-driving vehicles has been around how they perceive the road and other road-users, navigating urban areas and highways safely and efficiently. However, beyond fanciful concepts about the future of AV cabins – typically positioned as lounge-like spaces rather than two or more rows of seats all facing in the same direction – there's been far less consideration paid to the environment we'll be passengers in.

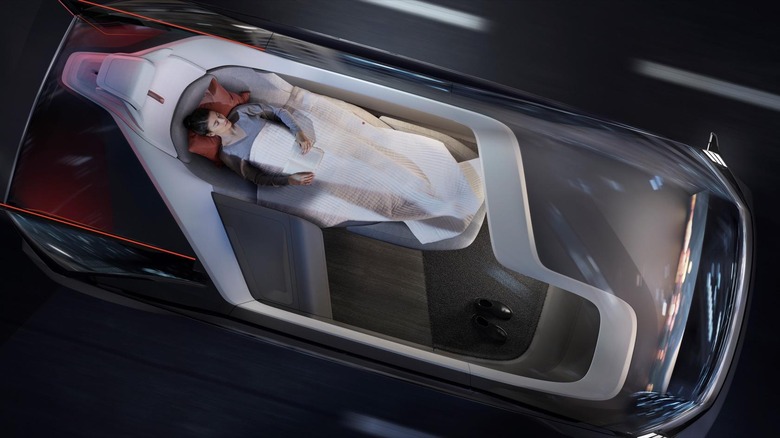

Volvo isn't immune to that cabin concept allure, of course. Back in 2018 it revealed the Volvo 360c, a vision of autonomous driving that it pitched as an alternative to cross-country flight. Rather than boarding a plane, you'd summon a self-driving 360c pod, get into its converting lounge seat/bed, and sleep while the AV drove you overnight to your destination.

The thread-count of the sheets in your autonomous vehicle is one thing, but Volvo's latest project – in collaboration with research institute RISE and audio production specialists Pole Position Production – explored sound within AVs. SIIC, or Sound Interaction in Intelligent Cars, focuses on how audio can be used, both to build trust in the system and to help avoid motion sickness while you ride.

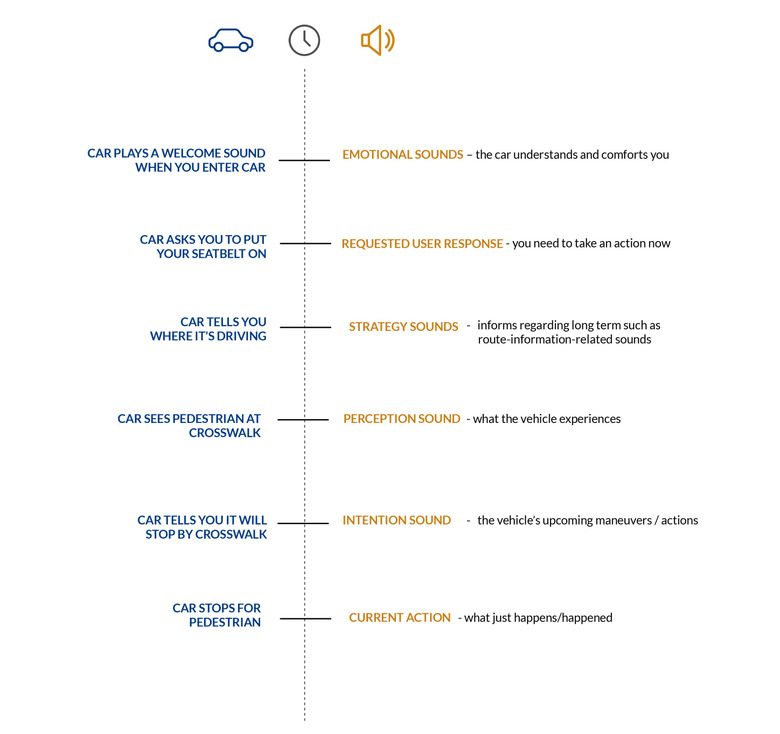

"The main idea behind the SIIC framework is a set of sound types or sound 'layers,'" the researchers explain. "We use the term layers instead of sound types since the sounds that we recommend using in self-driving cars are not the type of traditional sound chimes that you find in your smartphone, but should be smoother and more continuous. Also, multiple sound layers may be active at the same time – hence the name layers."

"In current cars, it's more like reactive sounds, collision warnings," Dr. Pontus Larsson, Interactive Sound Designer at Volvo Cars, explained in an interview. "Our approach is being more proactive, inform in advance in a more subtle way, so you don't get the feeling that I need to do something."

SIIC envisages a set of sounds which can – consciously or subconsciously – shape the passenger's experience of the autonomous vehicle. Emotional sounds, for instance, might be those which play when you enter the AV or power it on, designed to indicate that it recognizes and understands you. Intention sounds, however, would be used to highlight an upcoming maneuver or action, such as pausing at a crossing. Strategy sounds would indicate longer-term planning, such as route optimization, while perception sounds would help flag what the AV is experiencing.
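To make the "layers" idea concrete, here is a minimal Python sketch, not the SIIC implementation. It treats the four layer types named above as sources that can be active simultaneously and are scaled down together if they would exceed the available headroom. The `ActiveLayer` class, the `mix` function, and the gain values are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    # Layer names follow the article; everything else here is an assumption.
    EMOTIONAL = "emotional"
    INTENTION = "intention"
    STRATEGY = "strategy"
    PERCEPTION = "perception"


@dataclass
class ActiveLayer:
    layer: Layer
    gain: float  # hypothetical linear gain: 0.0 is silent, 1.0 is full level


def mix(active, headroom=1.0):
    """Return per-layer gains, scaled down together if their sum exceeds headroom."""
    total = sum(a.gain for a in active)
    scale = 1.0 if total <= headroom else headroom / total
    return {a.layer: a.gain * scale for a in active}


# Two layers sounding at once: an upcoming maneuver plus a detected road user.
levels = mix([
    ActiveLayer(Layer.INTENTION, 0.8),
    ActiveLayer(Layer.PERCEPTION, 0.6),
])
```

Because both layers are scaled by the same factor, the intention cue stays louder than the perception cue even after the mix is brought back within headroom.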

Pole Position Production has plenty of experience with car audio, though until now that's primarily been in the gaming space. "In games we're also used to guiding a player through different scenarios – stories, or missions, or levels – and provide them with the information they need to complete these," Max Lachmann, CEO and Founder, explained. "We need to provide them with only the vital information that they need to have."

Different types of passenger may have different levels of need when it comes to information and reassurance, Lachmann says. Some might insist on as much data as possible, reveling in the experience of seeing the car recognize every pedestrian, cyclist, and road sign. More cautious passengers may be more focused on building trust, simply wanting to know the AV is in control. At the other extreme, workers might want as much of the experience as possible to fall into the background, as they focus on other tasks while being chauffeured around.

"From our perspective," Fredrik Hagman, Interactive Sound Designer at Volvo Cars, says, "we're coming at this from a more interactive side ... can we maximize the usability of these sounds, and by that increasing the sense of trust, and increasing the communication between the car and the driver. That I communicate that I see this, that I will slow down."

Beyond reassurance, though, is nausea – or avoiding it. Getting carsick as the driver is rare, but when an AV is in control there's more chance of it affecting every passenger. "Motion sickness is quite a complex phenomenon, and people experience it very differently," Larsson explains. "But it's been found that apart from the visual ... you look down and get a different visual impression to what you sense with your body ... there is also an anticipatory sense ... If you're not aware what's going to happen that's also going to trigger motion sickness ... We let people know what's going to happen, so even if they look down they're prepared."

Existing research had theorized that simple tones played into the cabin could effectively prime passengers to expect forward or backward movements. Volvo took test vehicles to the track to see if that was true, finding that even a single speaker playing basic sounds could reduce reports of motion sickness.

Then, they added to that by factoring in turns. "In our tests we did have panning sounds, so we had more than one speaker," Lachmann says. By utilizing panning sounds which were perceived as moving across the cabin in the direction of the upcoming turn, passengers consistently reported feeling less carsick.
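The article doesn't say how the team's panning was implemented, but the general technique can be sketched with a standard constant-power pan law across two speakers. In this Python sketch, the `pan_gains` and `turn_cue` functions, the two-speaker layout, and the choice to sweep from the opposite side toward the side of the turn are all assumptions made for illustration.

```python
import math


def pan_gains(position):
    """Constant-power (left, right) gains for a position in [-1.0, 1.0].

    -1.0 is fully left, 0.0 is centered, 1.0 is fully right.
    """
    position = max(-1.0, min(1.0, position))
    angle = (position + 1.0) * math.pi / 4.0  # maps to 0 .. pi/2
    return math.cos(angle), math.sin(angle)


def turn_cue(direction, steps=5):
    """Gain pairs for a cue that sweeps across the cabin toward the turn side.

    direction: "left" or "right" (hypothetical interface).
    steps: number of gain pairs in the sweep; must be at least 2.
    """
    if direction not in ("left", "right"):
        raise ValueError("direction must be 'left' or 'right'")
    if steps < 2:
        raise ValueError("steps must be at least 2")
    end = 1.0 if direction == "right" else -1.0
    start = -end
    positions = [start + (end - start) * i / (steps - 1) for i in range(steps)]
    return [pan_gains(p) for p in positions]


# A right turn: the cue starts in the left speaker and ends in the right one.
right_turn = turn_cue("right")
```

The constant-power law keeps the summed power of the two channels steady during the sweep, so the cue appears to move rather than to fade out and back in.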

"I did maybe not believe fully in the motion sickness reduction to start with," Hagman admitted. "I was very, very surprised by the significant results we got."

While it's not uncommon for AVs to feature some sort of display that shows the path the vehicle plans to take, audio cues don't depend on a passenger actually looking at the instrumentation. That's key if they want to be able to read, work on a laptop, or even doze while they're onboard.

Of course, sound can also present its own problems. Most of the noises current vehicles make are designed to grab the attention, rather than be melodious; few people enjoy listening to the "fasten seatbelt" beep. "Sound can be intrusive, we shouldn't disregard the fact that people can be disturbed by sound," Larsson points out. "We tried to do it in a way that can be both effective and aesthetically pleasing for our users."

The palette of sounds the team came up with was drawn from a mix of analog and digital sound generators, particularly Reaktor, and then further layered and processed. The resulting audio isn't particularly dramatic, even for an "intention sound," which is the AV warning that it's about to do something in 1-2 seconds' time.

What's being referred to as "skeuomorphic sonification," meanwhile, is the basis for perception sounds. One such "perception sound" highlights that the AV has spotted the approach of another vehicle.

Of 28 participants who rode in a virtual vehicle while wearing a VR headset, the team says, 27 preferred it with the audio signals on rather than off. "The results showed that the auditory display had a significant effect on trust in the majority of the use cases. The participants also found the car to be more intelligent with sounds on," the researchers report.

On a test track, with intention sounds indicating upcoming acceleration, deceleration, and turns, 17 out of 20 participants preferred riding in the vehicle which featured the audio cues.

With fully autonomous vehicles still some way out, "we have no plans for implementation right now," Hagman says. However, although Level 4 and above vehicles may be far off on the roadmap, Level 3 – where advanced driver assistance systems take the helm in certain circumstances, passing control back to a human driver outside of those areas – could be closer at hand. Volvo itself, for instance, plans to launch a Level 3 system dubbed Highway Pilot, with the sensor suite at least baked into select new vehicles from 2022.

Highway Pilot – which will take responsibility on sections of highway – will face a challenge common to Level 3 systems: the handover. That's the point where the driver-assistance system needs to pass control back to the driver, and which relies on the human operator being sufficiently tuned-in and ready for that transfer.

Hagman and the team, unsurprisingly, wouldn't be drawn on the potential for SIIC in such a situation. "This project is focused solely on fully self driving vehicles, where you basically get in and take off in full autonomy," the sound designer points out. "But I would say, I believe sound can play a vital role in [Level 3 handovers]."

Findings from the group into motion sickness prevention will be published in an upcoming paper, "Intuitive And Subtle Motion-Anticipatory Auditory Cues Reduce Motion Sickness In Self Driving Cars."