The Big iOS 10 Vs Android N War Is Over Privacy

At first glance, you could think Apple's WWDC 2016 and Google's I/O 2016 saw announcements of broadly the same thing, but the big question is where – not how – your data is being used. Tim Cook & Co. took to the stage at the Bill Graham Civic Auditorium in San Francisco this morning to unveil the latest and greatest features of iOS 10, macOS, and more, but even if they didn't mention Google by name, they nonetheless stuck the dagger in at the end of the show.

Listening to the rundown of iOS 10 features, it wasn't hard to draw parallels with what Google discussed just a few weeks ago at its own developer event. Messages, for instance, gains varying sizes of text along with larger and more flexible emojis; Photos gets facial recognition and smart searching; and there's the ability to parse conversations and fill in the gaps in calendar entries automatically.

They're much-needed features, certainly, if iOS is to remain competitive. Google, after all, has an edge in machine learning, something it has built on aggressively with the Google Assistant that will power chat apps, Google Home, and more.

Google Photos has long recognized and sorted by faces in addition to geotagged images, as well as mixing together related photos and videos – complete with effects and backing music – into highlight reels.

Is Apple playing catch-up, then? Not quite. Though the functionality may, on the face of it, be very similar, what's astonishing is just how much of it is done locally.

Google, to do its processing, relies on your data – whether it be photos, video, or something else – being in the cloud. There the company's powerful servers can do the crunching and spit out grouped photos or your indexed calendar.

In contrast, Apple is doing it all on-device. It's a testament to the power of its own processors, of course, but also a definite stance on just where users should expect their data to be kept.

Without having to upload your selfies to the cloud, there's no worry that a hacker might extract them. "In every feature that we do," Craig Federighi, Apple's senior vice president of Software Engineering, said during the keynote today, "we carefully consider how to protect your privacy."

Some users, undoubtedly, put more emphasis on functionality than on caution about where their data is stored. Apple, too, isn't afraid of the cloud: in updates to its own cloud storage system today, it improved synchronization of files for users with multiple devices, ensuring for instance that works-in-progress you save temporarily to the desktop on your MacBook are also available on your iMac, your iPhone, and your iPad Pro.

The comfort level that Apple has with cloud-first is clearly different, however. It made a point of highlighting not only the "on-device intelligence" that eschews remote processing, but also its reliance on end-to-end encryption and an absence of user profiling, the tracking used to build valuable profiles of people, which some privacy advocates have criticized for storing far more personal data than people might expect.

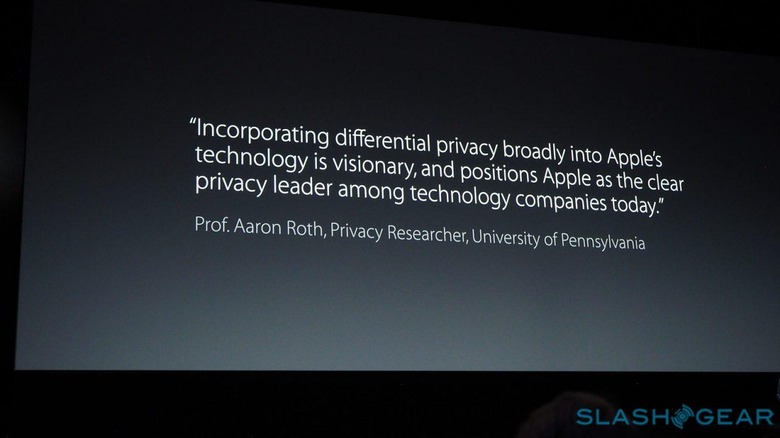

Instead of user profiling there's "differential privacy", a more general way of intuiting common trends in language and more while keeping them divorced from individual identity, and one which Apple says it will be making greater use of.

"Incorporating differential privacy broadly into Apple's technology is visionary," Professor Aaron Roth, privacy researcher at the University of Pennsylvania, said of the approach, "and positions Apple as the clear privacy leader among technology companies today."
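For a rough sense of how differential privacy can surface a trend without exposing any one person, here is a minimal Python sketch of randomized response, a classic textbook mechanism. It is not Apple's implementation, which the keynote did not detail; the function names, the 30 percent "true" rate, and the population size are all assumptions made purely for illustration.

```python
import random


def randomized_response(truth: bool, rng: random.Random) -> bool:
    """Report the real answer half the time; otherwise report a fair coin flip.

    Any single report is deniable: a "yes" may simply be the coin talking.
    """
    if rng.random() < 0.5:
        return truth
    return rng.random() < 0.5


def estimate_true_rate(reports: list[bool]) -> float:
    """Undo the noise in aggregate.

    P(report is yes) = 0.25 + 0.5 * true_rate, so solve for true_rate.
    """
    observed = sum(reports) / len(reports)
    return (observed - 0.25) / 0.5


# Hypothetical population: roughly 30% of users have some trait (assumed figure).
rng = random.Random(10)
population = [rng.random() < 0.30 for _ in range(100_000)]

# Each "device" only ever sends its noisy report, never the raw answer.
reports = [randomized_response(answer, rng) for answer in population]
estimate = estimate_true_rate(reports)
print(f"estimated rate: {estimate:.3f}")
```

With enough reports the estimate lands close to the real rate, even though no individual report can be trusted on its own. That is the trade the technique makes: accuracy about the crowd, uncertainty about the person.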

Apple has recently been criticized for neglecting its cloud services while rivals like Google and Microsoft push ahead with them. Today's WWDC keynote demonstrated that it may actually be an intentional slight more than anything: a way to live in a multi-device world that doesn't rely on storing everything remotely.

In the process, shifting our data from a hub-and-spoke system with the cloud in the center to a device-centric model with encrypted interlinks could well be the biggest differentiator between iOS 10 and Android N.