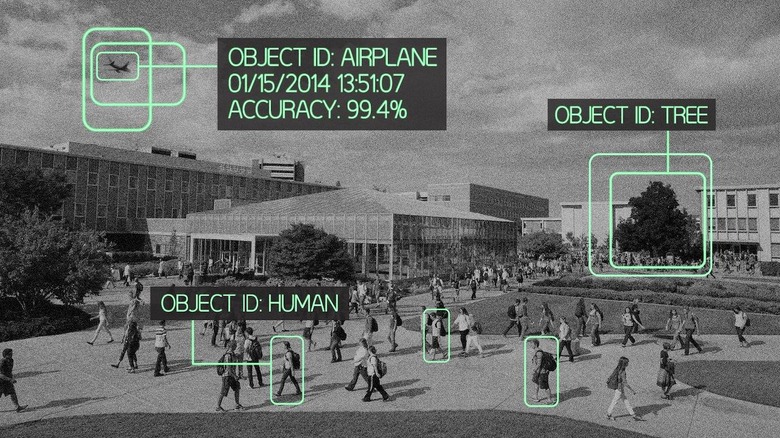

Smart Object-Recognition System Could Spy On Your Milk In The IoT

Computers that can identify objects without requiring any human training are now a possibility, as researchers figure out how to teach AIs to intuit the key features and differences between faces, objects, and more. The new algorithm, developed by engineer Dah-Jye Lee of Brigham Young University, avoids human calibration by giving computers the skills to learn those distinctions themselves: rather than the operator flagging individual differences between, say, a person and a tree, the computer is given the tools to identify the differences on its own, and then use them moving forward.

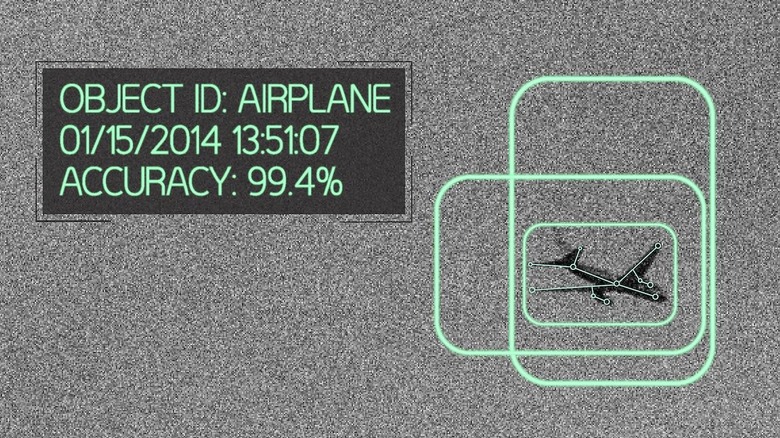

Lee says it's similar in theory to how a child is taught. In his research, a computer was loaded with image sample sets (he picked faces, airplanes, cars, and motorbikes), and the algorithm, known as "ECO features", was then left to calculate its own distinguishing elements.

For instance, the computer might observe the distinctive angles between the fuselage of a plane and its wings, which then allow it to tell a plane from a car. Lee's team found the algorithm was 100-percent accurate at recognizing objects from each of the four datasets.

That high degree of accuracy continued with the more difficult challenge of identifying objects within a category. Asked to classify four different species of fish, Lee's algorithm achieved 99.4-percent success.

In contrast, rival object-recognition systems managed at most 98-percent success at distinguishing between the original four categories, never mind matching the accuracy of ECO features within a category.

Lee's team envisages ECO features being useful for unmanned and manpower-intensive applications like tracking invasive species in habitats and spotting flaws in products on production lines. Of course, there's also huge potential in the "Internet of Things" where systems are left to their own devices but could monitor individual people entering and leaving buildings, track what food you have left in your fridge, monitor individual cars as they navigate smart cities, and more.

VIA Google+