Occipital Bridge Hands-On: The Room-Mapping AR Rival To Google WorldSense

Where are you in the bold new world of virtual and augmented reality? I don't mean that metaphorically, either: the question of where a user in a virtual world is physically, and how they're interacting with the real world around them, is arguably the key to making the next generation of headsets. It's something that not only big names like Google and Apple are working on, but also VR/AR specialists like Occipital.

The San Francisco company isn't new, but you'd be forgiven for not necessarily having heard of it. For the past few years Occipital has been working on sensor technology that can map out a space in real time, without relying on GPS or resorting to adhesive markers or beacons. That resulted in the Structure Sensor, a $379 iPad accessory that turns Apple's tablet into a room-mapping 3D scanner.

Now the technology has advanced again, this time mounted atop a mixed reality headset. Dubbed Bridge, it adopts the same strategy as Google Cardboard, Daydream, and Samsung Gear VR by putting a smartphone at its heart. However, though Bridge does make use of the camera on the iPhone it's designed to accommodate, the really clever stuff comes from the Structure Sensor mounted on the top and plugged in via Lightning.

When I sat down with Occipital, the team was just preparing to ship its second batch of preorders. The so-called Bridge Explorer Edition had shipped in December to early adopters. Now, a new version – complete with 6-degree-of-freedom (6DoF) tracking and a brand new 3DoF controller – is headed out to developers eager to try Occipital's Bridge Engine.

The system is a one-two punch of hardware and software. The former distinguishes itself from other phone-based headsets by virtue of how it integrates the iPhone's own abilities with external sensors. A wide-angle lens atop the phone's camera can feed live video from the room around you into the headset for augmented reality (and, bluntly, so that you don't bump into anything). However, Occipital is also using data from its own depth sensor to create a 3D view from that single wide-angle feed.

The result is a slightly offset view served up to each eye, helping with binocular vision. In the process, Bridge also adjusts for the iPhone's offset camera, so that your perceived view of the room around you is from where your pupils actually are, rather than from the camera's off-center position.
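Occipital hasn't detailed how Bridge Engine does this, but the general idea of building a per-eye view from one camera plus depth can be sketched. The Python below is a minimal illustration, assuming a simple pinhole camera model; the function names, the 63mm pupil spacing, and the 500-pixel focal length are all hypothetical values picked for the example, not Bridge parameters.

```python
def eye_shift_px(depth_m, eye_offset_m, focal_px):
    """Horizontal image shift (in pixels) of a point at depth_m when the
    viewpoint moves sideways by eye_offset_m (pinhole model, assumed).

    Moving the viewpoint to the right makes the point appear to move left,
    hence the negative sign.
    """
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return -focal_px * eye_offset_m / depth_m


def stereo_shifts(depth_m, ipd_m=0.063, focal_px=500.0):
    """Return (left_eye_shift, right_eye_shift) in pixels for one point.

    ipd_m is an assumed distance between the pupils; each eye sits half
    that distance to either side of the reference viewpoint.
    """
    half = ipd_m / 2
    return (
        eye_shift_px(depth_m, -half, focal_px),
        eye_shift_px(depth_m, +half, focal_px),
    )


if __name__ == "__main__":
    for depth in (0.5, 2.0, 5.0):
        left, right = stereo_shifts(depth)
        print(f"{depth} m away: left {left:+.2f} px, right {right:+.2f} px")
```

The takeaway is that nearby objects shift more between the two eyes than distant ones, which is why a per-pixel depth reading is needed to turn a single camera feed into a convincing stereo pair.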

It's important, since Occipital isn't just targeting virtual reality like Gear VR and Daydream. Instead, it's aiming for augmented reality in the manner of Microsoft HoloLens. Unlike Microsoft's visor, it doesn't have fancy transparent displays; however, that keeps the price down – $399 for Bridge, though you'll need to supply the iPhone 6, 6s, or 7 yourself, versus several thousand for HoloLens – and dramatically increases how much of your view AR effects can fill.

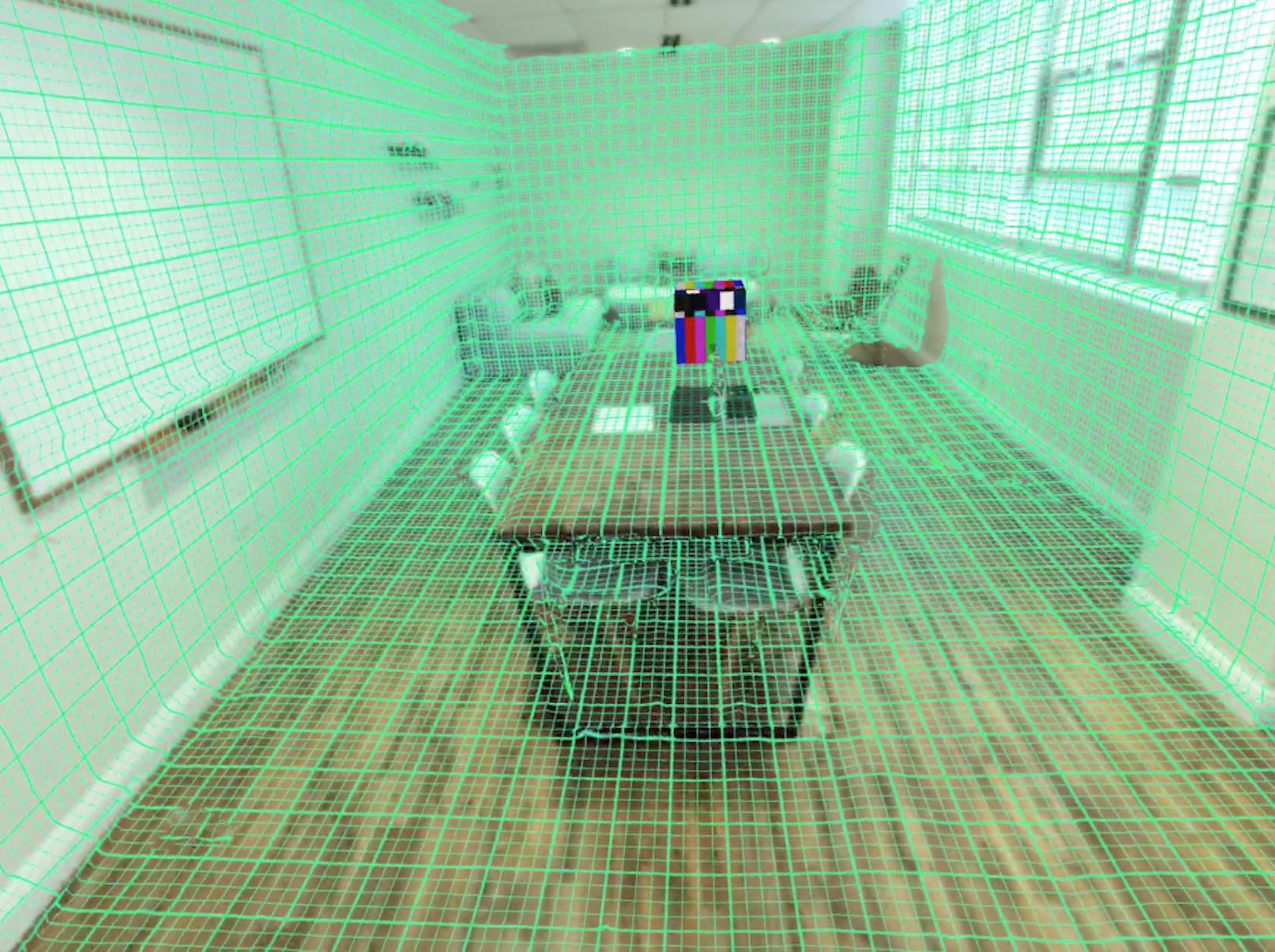

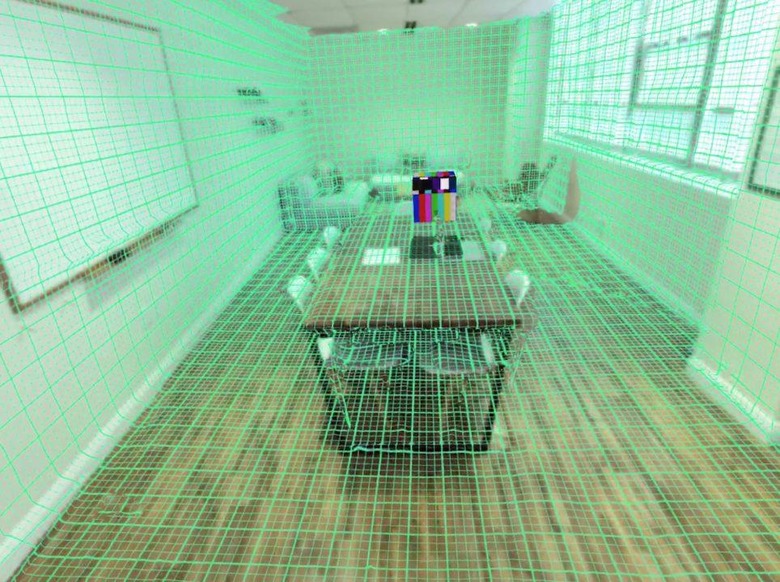

That was put to good use in the demo I tried. Bridge first needs to map the room, a quick process that involves turning the headset to look around at the walls, floor, and furniture. From that, it builds up a wireframe of the space, so that not only do you see what the iPhone camera sees, but the Bridge Engine understands what's a horizontal surface, what's vertical, and all angles in between.
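To give a rough sense of what telling horizontal from vertical means for a scanned mesh, here is a minimal Python sketch. It is not Occipital's code: it assumes each patch of the wireframe has a surface normal, that "up" is the +Y axis, and that a 10-degree tolerance is reasonable. The function name and all three assumptions are hypothetical.

```python
import math


def classify_surface(normal, up=(0.0, 1.0, 0.0), tolerance_deg=10.0):
    """Label a surface patch by the angle between its normal and 'up'.

    A normal pointing along the up axis (either way) means a flat surface
    such as a floor or table top; a normal at right angles to it means a
    wall. Everything else is treated as sloped.
    """
    n_len = math.sqrt(sum(c * c for c in normal))
    u_len = math.sqrt(sum(c * c for c in up))
    if n_len == 0 or u_len == 0:
        raise ValueError("vectors must be non-zero")

    dot = sum(n * u for n, u in zip(normal, up))
    # abs() so the direction the normal faces (up or down) doesn't matter
    cos_angle = min(1.0, abs(dot) / (n_len * u_len))
    angle = math.degrees(math.acos(cos_angle))

    if angle <= tolerance_deg:
        return "horizontal"
    if angle >= 90.0 - tolerance_deg:
        return "vertical"
    return "sloped"


if __name__ == "__main__":
    print(classify_surface((0, 1, 0)))   # table top or floor
    print(classify_surface((1, 0, 0)))   # wall
    print(classify_surface((0, 1, 1)))   # 45-degree ramp
```

A label like this is the sort of information an app would need to decide that a virtual ball should roll across a table but bounce off a wall.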

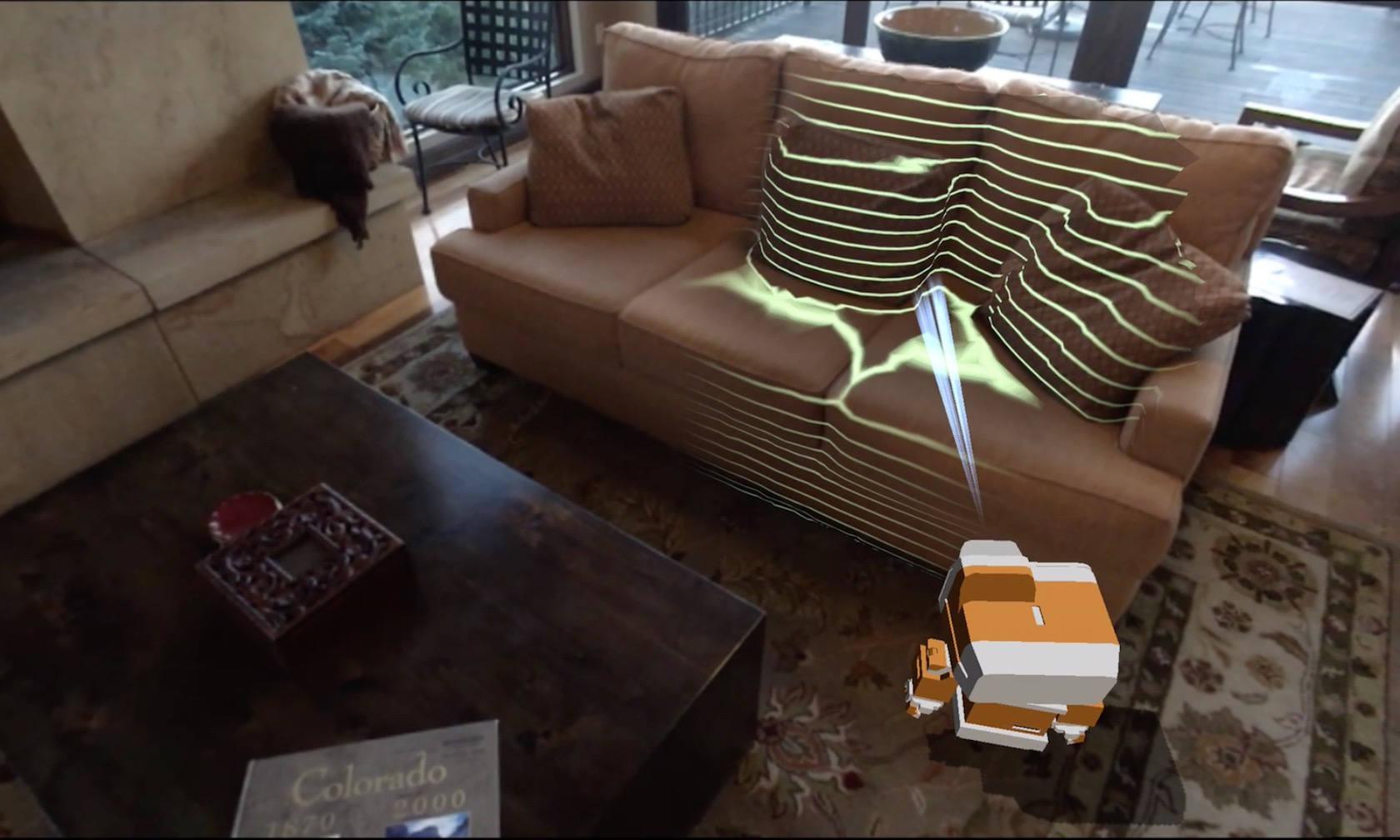

Occipital's demo app puts a small robot – Bridget – into the room, which you interact with using the Daydream-style controller. You can point and click to navigate it around, with the 'bot moving around furniture and other objects rather than just running straight through them. Dropping items into the space similarly acknowledges the real topography; at one point, I accidentally backed the robot into a corner by positioning a virtual chair in its way. You quickly stop differentiating between what's real and what's digitally created, especially when virtual balls start rolling across real tables and bouncing down under and behind things.

It's pushing the capabilities of a smartphone hard. There was a very small amount of lag visible when I moved my head quickly, though not enough to cause any discomfort. The iPhone's own screen resolution is another limiting factor; Occipital tells me that, even though some Android devices have higher-resolution displays, there are no current plans to support Google's OS since the design, connectivity, and camera vary from one phone to the next.

Google is probably Occipital's biggest competitor. Back at I/O last week, the company showed off its new WorldSense tracking system, built on the indoor visual mapping technology developed as part of Project Tango. WorldSense works by looking for visual anchors in the world, using those as impromptu markers from which it can figure out where the headset-wearer is looking, and how the different objects relate in 3D space. Early feedback suggests it works reasonably well, but Google is still apparently recommending that WorldSense systems – which will be standalone, rather than based on slotting in a phone – be used while sitting down or stationary.

In contrast, Occipital's setup allowed me to move around and, far from bumping into things, my biggest klutz moment was forgetting that you can't put down real objects like the Bridge controller on a virtual table in that space. Already being on the market is another tick in Occipital's column, as is existing Bridge Engine integration with popular platforms like SceneKit for VR and AR, and Unity for VR. I'm told developers have been able to get their existing mixed reality experiences up and running on Bridge in as little as ten minutes.

For now, Bridge remains a developer-focused device. After all, you can't really sell a platform to consumers without apps to use on it. Occipital isn't saying quite how many devices are in the wild, but tells me that the last preorder phase sold out in a matter of days. The demand, clearly, is there. Now we just need the compelling mixed reality experiences to sell it to the mass market.