NVIDIA Built An Epic AI Supercomputer From Four New A100 80GB GPUs

NVIDIA has revealed its newest workstation, and if you've always wanted a gleaming gold obelisk capable of putting petascale power on your desktop, the DGX Station A100 should do the trick. Aimed at those running serious machine learning and similar workloads, the new tower packs four NVIDIA A100 Tensor Core GPUs with a whopping 320 GB of GPU memory.

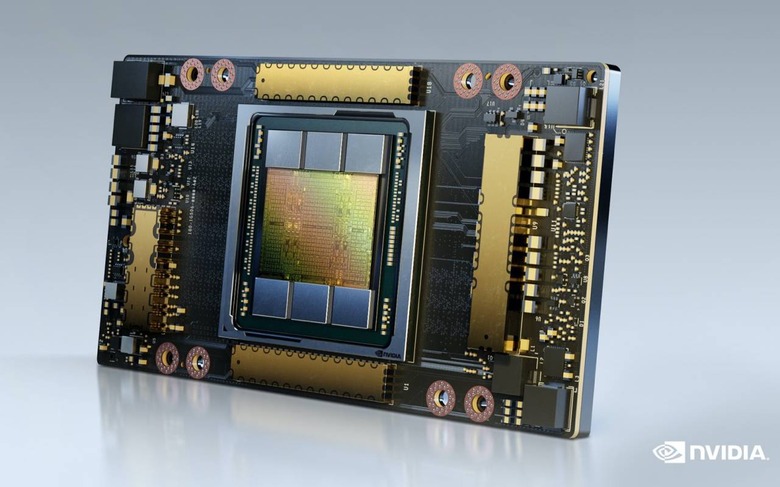

That's courtesy of NVIDIA's other big news today, the NVIDIA A100 80GB GPU. Now upgraded with HBM2e for 80GB of high-bandwidth memory – twice what the old model had – it can provide over 2 terabytes per second of memory bandwidth.

When working with huge models depends on how fast you can get data to the GPU, the wider bandwidth pipe is a big deal. It's the first GPU in NVIDIA's range to crack the 2TB per second milestone, and the extra memory also adds flexibility in how the A100 can be used. Rather than run as a single GPU instance, for example, it can be partitioned into up to seven, each with 10GB of its own memory.

The A100 80GB uses third-gen Tensor Cores, along with third-gen NVLink and NVSwitch technology. That doubles the GPU-to-GPU bandwidth compared with the previous generation, for up to 600 GB per second transfers.

The DGX Station A100 has a full four of those GPUs, all hooked up with NVLink. That means the ability to run up to 28 separate GPU instances – each with its data securely siloed from the others – for parallel jobs and multiple users. Compared to its predecessor, NVIDIA says, you're looking at over a fourfold speed improvement for things like complex conversational AI models.
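For anyone who wants to check the headline numbers, here is a minimal back-of-the-envelope sketch in Python. It uses only the figures quoted above (four GPUs, 80GB each, up to seven instances per GPU at 10GB apiece); the `GpuSpec` class and `station_totals` function are hypothetical names made up for illustration, not part of any NVIDIA tool or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Per-GPU figures as quoted in the article (hypothetical helper type)."""
    memory_gb: int            # total memory on one GPU
    max_instances: int        # maximum partitions per GPU
    instance_memory_gb: int   # memory given to each partition


# Figures for the A100 80GB, taken from the article text.
A100_80GB = GpuSpec(memory_gb=80, max_instances=7, instance_memory_gb=10)


def station_totals(gpu: GpuSpec, gpu_count: int) -> dict:
    """Scale the per-GPU figures up to a multi-GPU workstation."""
    return {
        "total_memory_gb": gpu.memory_gb * gpu_count,
        "max_instances": gpu.max_instances * gpu_count,
        # Memory actually handed to instances when fully partitioned.
        "partitioned_memory_gb": gpu.max_instances * gpu.instance_memory_gb * gpu_count,
    }


totals = station_totals(A100_80GB, gpu_count=4)
print(totals)
```

Four GPUs give the 320 GB and 28 instances NVIDIA quotes. Note that seven 10GB instances account for 70GB of each 80GB GPU; the article doesn't say how the remainder is used, so this sketch simply reports it as unallocated.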

Joining the GPUs is a 64-core AMD processor, along with 512 GB of system memory. There's PCIe Gen4 support, and a DGX Display Adapter with four DisplayPort outputs and support for up to 4K resolution. For storage, there's up to 7.68 TB of NVMe SSD – twice as fast, NVIDIA notes, as PCIe Gen3 SSDs – across two easy-access bays.

What makes it all particularly special is how straightforward it should be to deploy. Since the DGX Station A100 doesn't require special cooling or an above-average power supply, installation is about as simple as unpacking it from the box and plugging it in on your desk. It can be remotely managed, too, which is particularly useful now that a lot of teams are distributed. Sales will kick off this quarter, NVIDIA says, and there'll be an upgrade option for those who already have an NVIDIA DGX A100 320GB system.