Mobile Benchmarks Are Still Mostly Pointless - Here's Why

Huawei's recent misstep reopened a wound we thought had healed. And while Huawei did admit to cheating on benchmarks, its justification for doing so only serves to highlight a problem that was first raised half a decade ago. Mobile benchmarks, while not completely useless, are still nowhere near the maturity of their PC counterparts. Worse, they have even served to further the agenda of manufacturers instead of helping consumers make better choices.

Why Huawei, Why?

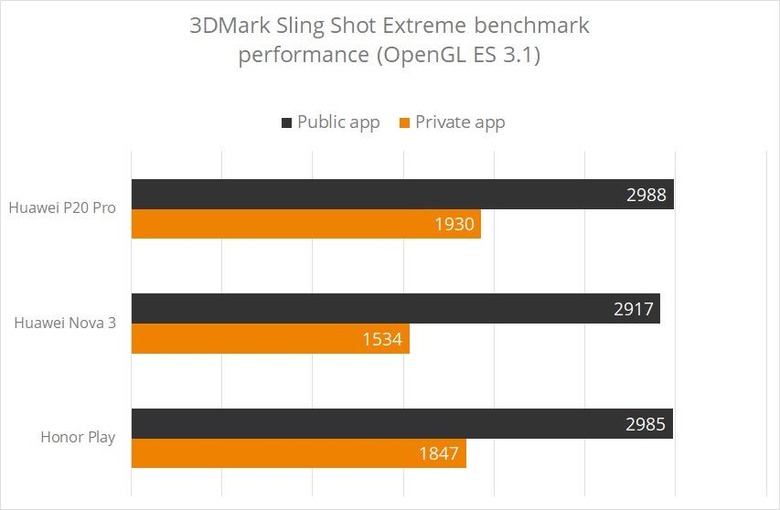

So here's what happened in the past few days. AnandTech broke the news that Huawei's latest smartphones that use its Kirin 970 chipset have a benchmark detection tool that cranks up the performance of the device (by raising the power limit) when a benchmark app is run. This makes the phone seem to perform better than it does when no benchmark is running or, as 3DMark developer Futuremark discovered, when a benchmarking app it doesn't recognize is used. In other words, it has a secret "Performance Mode" that AnandTech argues only serves to degrade the processor's performance in the long run.

Huawei doesn't deny what it did but tried to excuse itself by pointing out that its competitors were cheating, too, and that those competitors are using inflated scores to push Huawei out of its position in the market. In other words, it's suggesting that it has no choice but to play that unfair game by also being unfair. This isn't really new; it's the same reason smartphone makers cheated way back in 2013. A tool that should have been used to inform customers has become an advertising platform for companies.

Why Benchmarks?

Why do we still use benchmarking tools? What are they for anyway? At first glance, you might presume they're simply used to measure the performance of a product, be it a phone or computer or a part of it, the way you'd measure someone's height or the distance from your house to the nearest coffee shop. Yes, they do that, but only as part of their real purpose: to compare things.

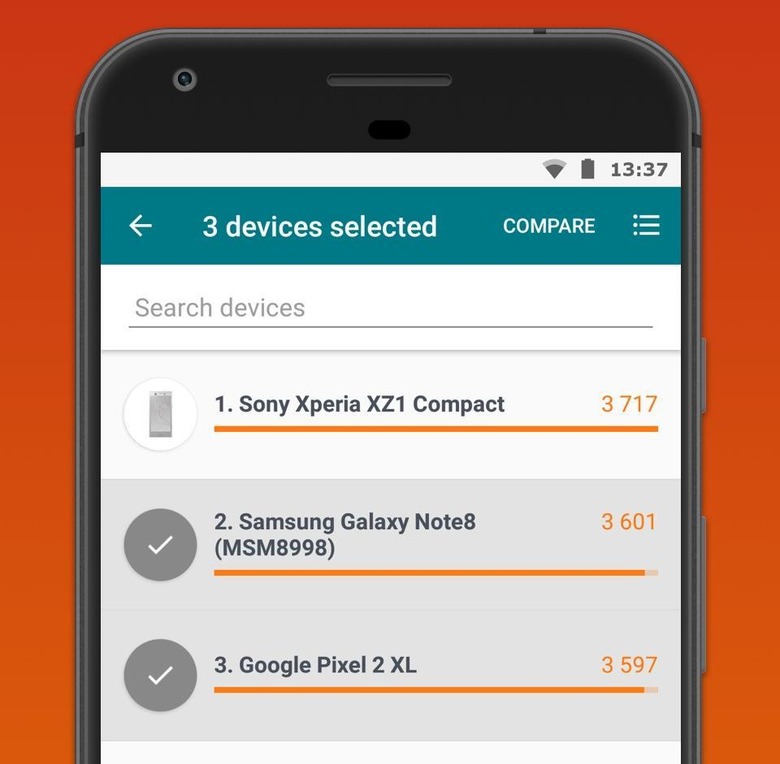

Benchmarks are ultimately tools for comparison. In theory, they establish a point of reference that serves as the basis of that comparison. In theory, they remove the need for subjective terms like "fast", "smooth", and "fluid", replacing them with hard numbers that can be ranked. Without a starting point and a standard, it's almost impossible to have an intelligible discussion when comparing products. The problem is that, in the tech field at least, those standards aren't exactly standard in the first place.

Non-standard Standards

If you thought that the Metric vs English measurement system split was bonkers, try looking at the measuring tools the computing and mobile industry has. While a few benchmarking tools have risen to prominence, each one is like a government of its own, with its own rules and its own standards. Obviously, you'll have to use the same tool across all the phones you want to compare.

But the differences go deeper than that. Benchmark tool creators don't even agree what they should be measuring. Some believe they should be testing the theoretical maximum performance of a device or component. Others try their hardest to simulate "real-world" use. The lack of a standard is something Huawei is also complaining about. Unfortunately, it decided to be part of the problem and wait for someone else to come up with a solution.

Black Boxes

If the situation with benchmarks were that bleak, we probably wouldn't be seeing benchmarks at all. After all, the mobile benchmarking market got its inspiration from the PC benchmarking ecosystem, where they have proven their worth. The problem with mobile benchmarks is two-fold. The first is maturity. The mobile market is much younger than the PC market, and its benchmarks haven't had nearly as much time to mature.

The second reason is perhaps more problematic and can't really be solved by the passing of time. Compared to PCs, desktop or laptop, smartphones are black boxes. Sure, you can know what's inside but, unless you're an OEM or component maker, you won't be privy to how the parts all work together. That is especially true of the system-on-chip, which is more than just the CPU. So while PC benchmark tools can focus on one component alone, like the CPU or the graphics card, a mobile benchmark has to take all of them as a whole because any one factor, from storage type to RAM type to GPU, could alter the results. Smartphones may be smaller, but it's precisely because of that that they are also a lot more complicated.

Image courtesy of iFixit.

Wrap-up: Starting Points

So are mobile benchmarks utterly useless? Hardly. As mentioned, they provide a necessary starting point for discussion. They do, however, need to be more standardized. Unfortunately, that's not going to happen any time soon since both benchmark tool makers and OEMs have a vested interest in keeping the status quo. The push will have to come from an outside party that sides with consumers.

But more importantly, consumers should also remember that benchmarks are just that: starting points for discussion. Considering the innumerable factors involved, the inconsistency of tools, and the lack of standards, they can never really be seen as completely objective measurements. And they definitely should never be used as a criterion for which smartphone to buy, especially when you never know if said smartphone is cheating on its grade.