IBM SyNAPSE: The Neuron-Inspired Future Of Computing

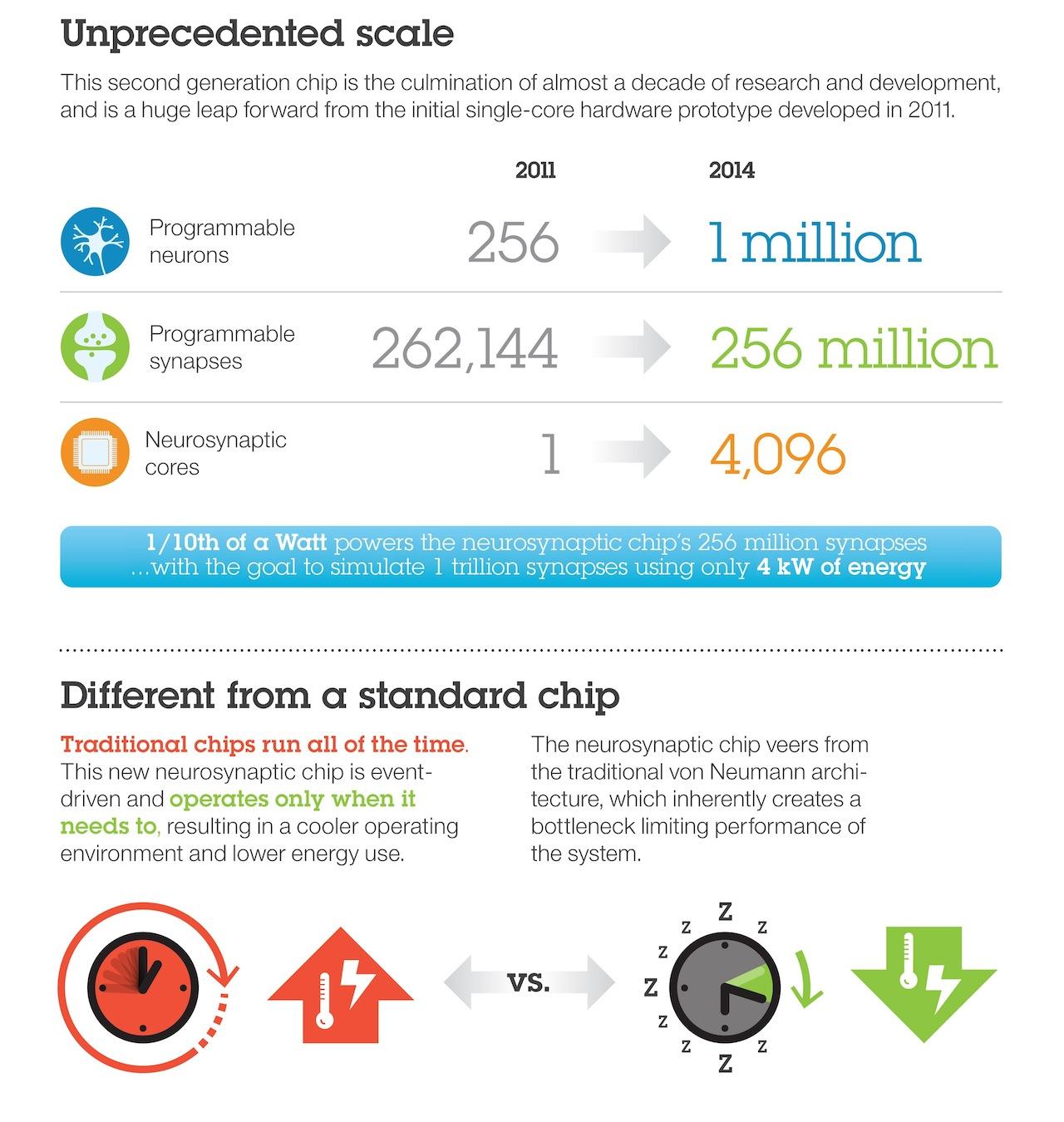

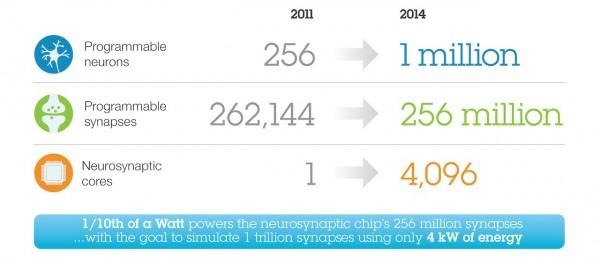

A computer chip that works like the neurons of the human brain while sipping a fraction of the power of traditional processors could finally open the door to cognitive computing, IBM researchers claim today. Dubbed IBM SyNAPSE, the groundbreaking chip squeezes a million "programmable neurons" and 256 million "programmable synapses" into something the size of a postage stamp, and could one day allow for advanced digital versions of human senses.

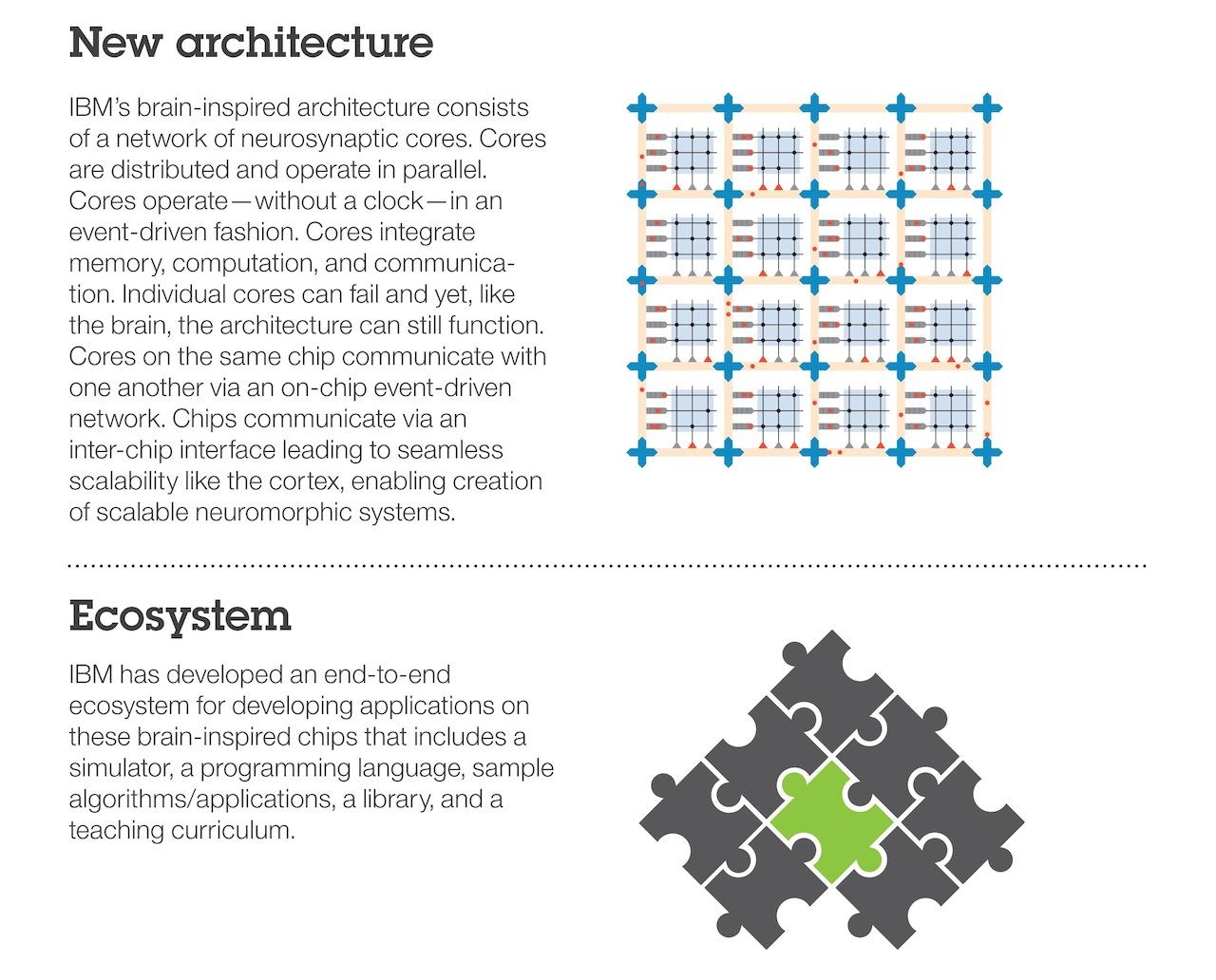

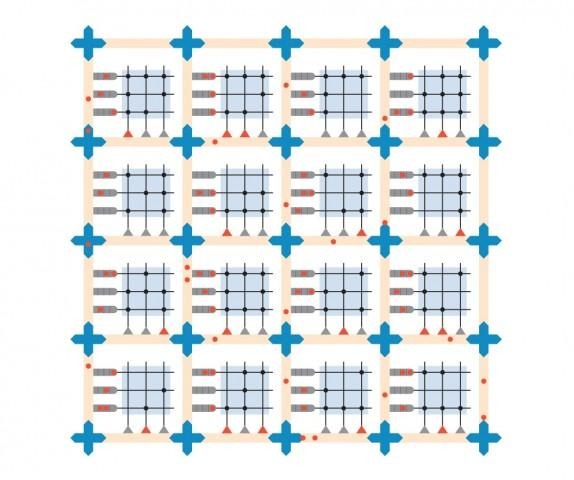

The chip, built by Samsung on a 28nm process, is capable of 46 billion synaptic operations per second per watt, and IBM says it's production-ready. It's made up of 4,096 digital neurosynaptic cores, each with its own memory, computation, and communication abilities, all of which can operate in parallel with one another.

More impressive, perhaps, is the ability of each SyNAPSE chip to connect seamlessly with a neighbor, meaning multiple chips can come together to form a huge digital brain. IBM has so far demonstrated an array of sixteen SyNAPSE chips that, combined, can bring 16 million programmable neurons and four billion programmable synapses to bear on tasks.
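To put those figures side by side, here is a quick back-of-the-envelope sketch in Python. It uses only the rounded numbers quoted in this article; the constant and function names are made up for illustration and are not part of any IBM tooling.

```python
# Rounded figures as quoted in the article (not exact hardware specs).
CORES_PER_CHIP = 4096
NEURONS_PER_CHIP = 1_000_000       # "a million programmable neurons"
SYNAPSES_PER_CHIP = 256_000_000    # "256 million programmable synapses"
CHIPS_IN_ARRAY = 16                # the demonstrated multi-chip array


def per_core(total_per_chip: int, cores: int = CORES_PER_CHIP) -> float:
    """Average share of a per-chip resource that falls to each core."""
    return total_per_chip / cores


def array_total(total_per_chip: int, chips: int = CHIPS_IN_ARRAY) -> int:
    """Total resource count when identical chips are tiled together."""
    return total_per_chip * chips


neurons_per_core = per_core(NEURONS_PER_CHIP)
synapses_per_core = per_core(SYNAPSES_PER_CHIP)
array_neurons = array_total(NEURONS_PER_CHIP)
array_synapses = array_total(SYNAPSES_PER_CHIP)

print(f"Neurons per core (approx.):  {neurons_per_core:,.0f}")
print(f"Synapses per core (approx.): {synapses_per_core:,.0f}")
print(f"Neurons in 16-chip array:    {array_neurons:,}")
print(f"Synapses in 16-chip array:   {array_synapses:,}")
```

With the rounded inputs, each core works out to roughly 244 neurons and 62,500 synapses, and the sixteen-chip array comes to 16 million neurons and about 4.1 billion synapses, in line with the "four billion" IBM cites.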

As for what sort of tasks that might actually include, IBM says the sky is effectively the limit. SyNAPSE could process sensory data in real-time and in new and unforeseen ways, integrating situational context with a far greater degree of fuzzy logic than traditional processors. So, facial- and object-recognition systems could hand off the job of identifying different aspects of a face or object to different parts of each chip, figuring out who and what is in the scene much faster than a regular computer might.

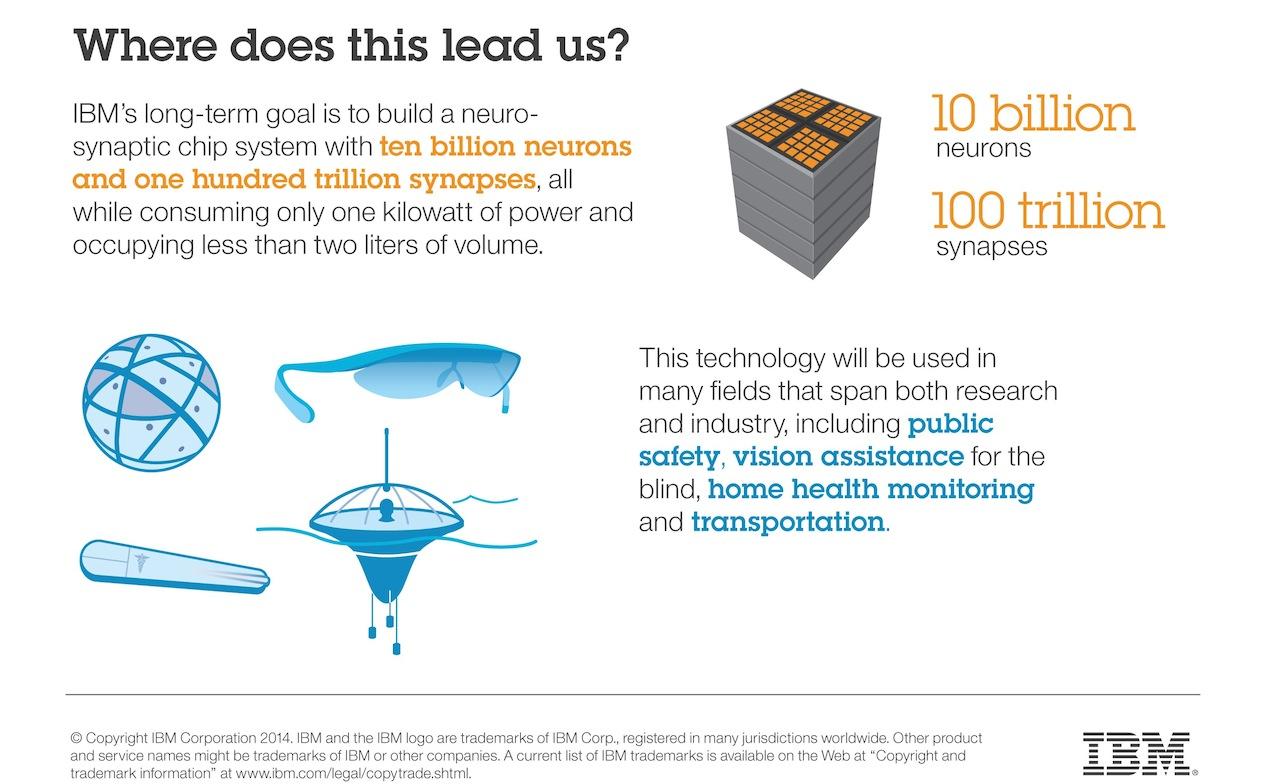

Vastly powerful supercomputers could one day be constructed from a platter of SyNAPSE chips, learning and adapting based on real-world events and settings.

Part of what makes SyNAPSE special is how it can achieve all that while still being frugal with its power consumption. IBM's researchers say that, while running at the equivalent of biological real-time, it would demand just 70mW, similar to the requirements of a hearing-aid.

That sub-tenth-of-a-watt consumption is achieved by running only when there's actually work to be done. IBM is aiming for a practical simulation of a trillion synapses with just 4 kW of power required.
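For a rough sense of what those power numbers imply, the sketch below combines the quoted efficiency figure with the quoted power draw. It assumes, purely for illustration, that the 46 billion operations-per-second-per-watt figure and the 70mW figure describe the same operating point, which the article does not confirm; the variable names are likewise hypothetical.

```python
# Figures quoted in the article.
SOPS_PER_WATT = 46e9        # synaptic operations per second per watt
CHIP_POWER_WATTS = 0.070    # 70mW at "biological real-time"
TARGET_SYNAPSES = 1e12      # IBM's trillion-synapse goal
TARGET_POWER_WATTS = 4000   # 4 kW budget for that goal

# Assumption: the efficiency and power figures apply at the same workload.
implied_sops = SOPS_PER_WATT * CHIP_POWER_WATTS

# Power budget per synapse in the trillion-synapse target system.
watts_per_synapse = TARGET_POWER_WATTS / TARGET_SYNAPSES

print(f"Implied throughput per chip: {implied_sops / 1e9:.2f} billion ops/sec")
print(f"Target budget per synapse:   {watts_per_synapse * 1e9:.1f} nanowatts")
```

Under that assumption a single chip would be handling a little over three billion synaptic operations per second, while the trillion-synapse target leaves a budget of around four nanowatts per synapse.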

As things like computational photography, contextually-aware image processing, and adaptive robotic systems living among – and interacting with – humans gain pace, chips like SyNAPSE look likely to proliferate. Last month, Movidius showed off its Myriad 2 co-processor, for instance, which it claims will enable smartphone photography with the range and quality traditionally associated with a DSLR, and potentially as soon as next year.

When SyNAPSE might start showing up in actual production hardware is unclear; for a start, programmers will need to get up to speed on coding for new types of processing so as to take advantage of it. IBM has a new language and is working on a full ecosystem including outreach to universities and existing customers to try to get them onboard.

SOURCE IBM

IMAGE Keoni Cabral