Google Built This EPIC Camera For Fast & Furious 6's Director

When Fast & Furious director Justin Lin comes calling, wanting to shoot a film for your 360-degree movie platform, you build him a kick-ass camera. That is, assuming you're the Google ATAP team, flush with the success of the first few Google Spotlight Stories and keen to give the platform its live-action debut. The problem is that the practicalities of capturing all-around video with real actors are very different from those of the animations Spotlight Stories has dealt with so far; that, ATAP lead Regina Dugan explained at I/O this week, meant some impressive new hardware.

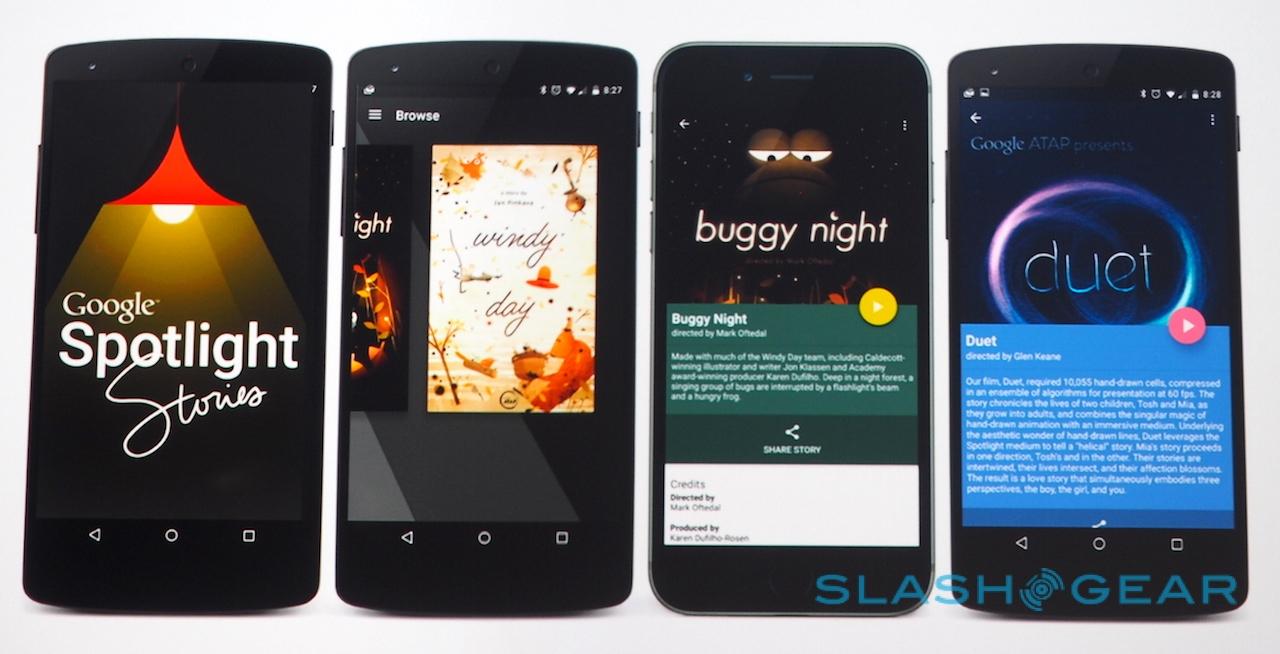

A Spotlight Story is, in effect, a movie told in the round. The viewer stands inside the action and can look around in all directions, watching it through a window represented by their smartphone display.

So, while you're physically facing forward you might be seeing one part of the scene, but turning in the opposite direction could reveal new action. Until now, the three releases – Windy Day, Buggy Night, and Duet – have all been animated, but for its fourth installment, Help, famous director Justin Lin wanted to use the same approach but with the high-action feel of hits like Fast & Furious 6.

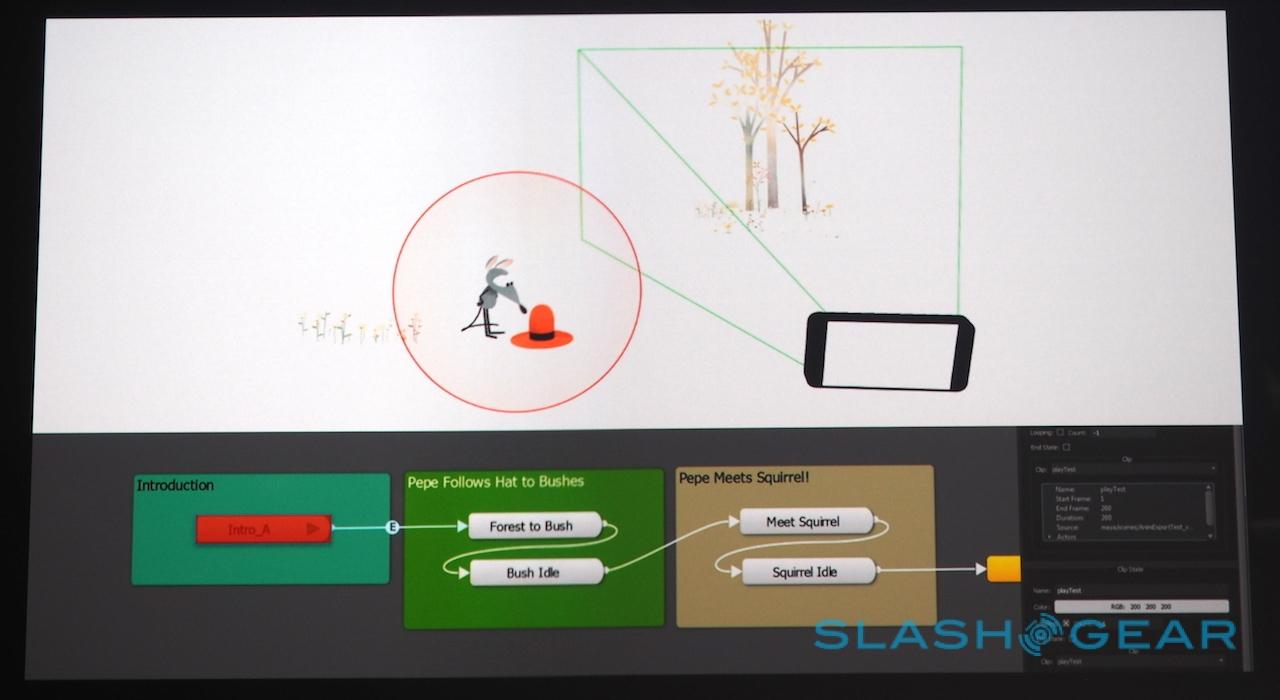

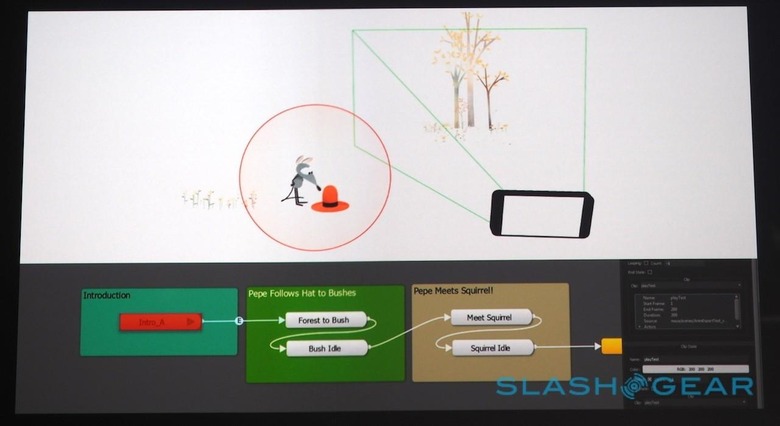

That demanded new hardware. Usually, animators rely on Google's 360 Storyboard tool, allowing them to "look around" temporary sketches with a phone as they build the final thing in 3D software.

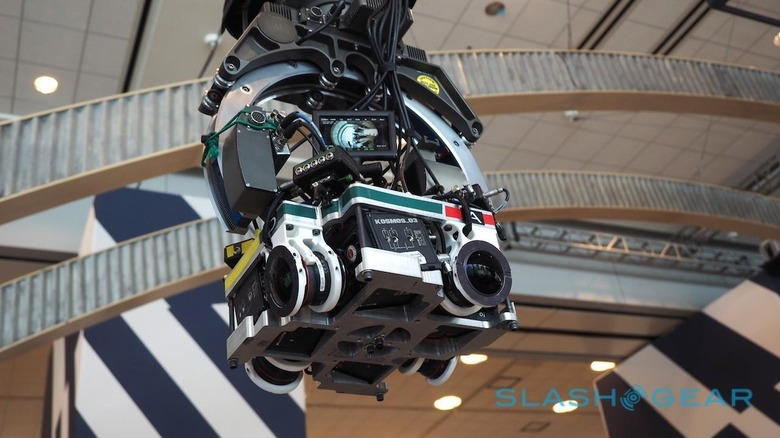

For Lin, it required a brand new camera. The ATAP team went hunting for an off-the-shelf 360-degree movie camera but, discovering nothing quite fit the bill, constructed one themselves from four RED EPIC cameras, wide-angle Canon lenses, and a remotely-controlled robotic arm.

The final result captures at 6K resolution and looks like something you might expect to find bolted to the Curiosity rover on Mars. In fact, the unusual aesthetic comes from ATAP stripping everything down to its bare minimum and designing a new casing, which reduced the overall bulk and cut the nodal point of the lenses.

With it, Lin wrote and created Help, a film in which live actors try to escape a monstrous beast in a frantic journey that takes them across crumbling cities and through ominous subway tunnels.

Since the director also needed to know exactly what he was capturing on set, ATAP provided him with two live preview devices: a four-monitor rig showing exactly what each camera sees, and a mobile viewer that could be swung around to replicate what the final phone app would show.

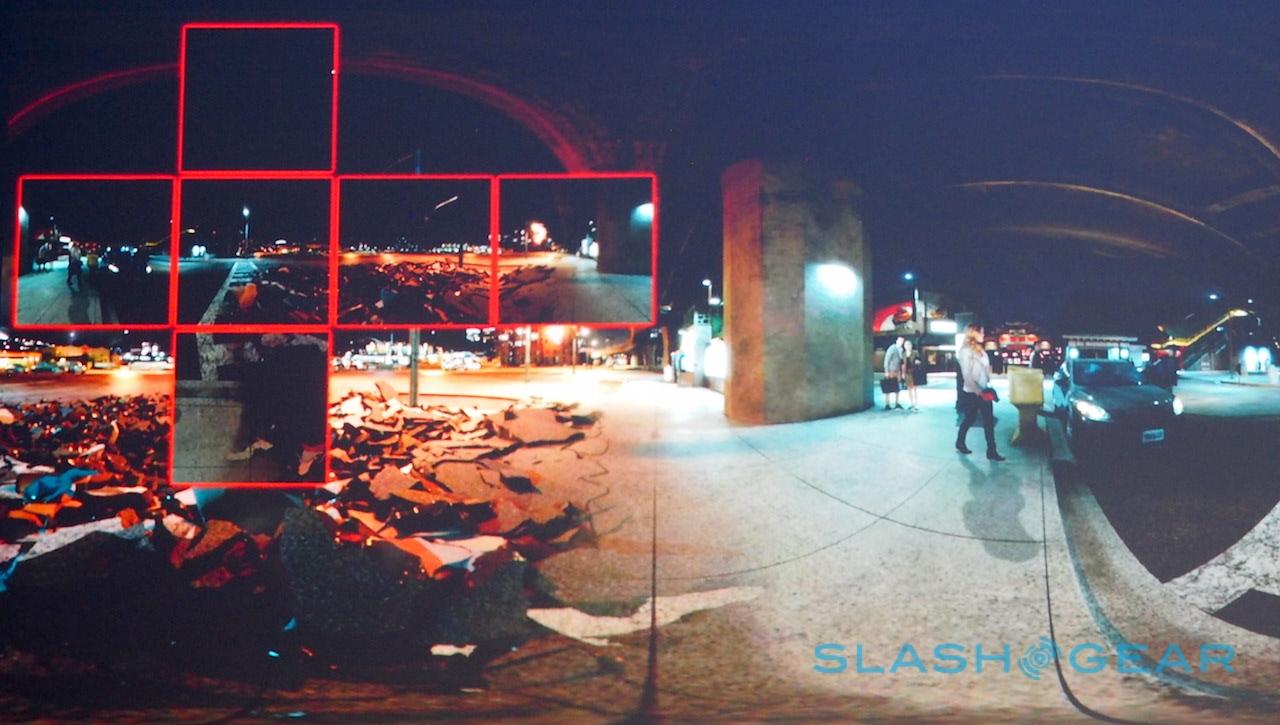

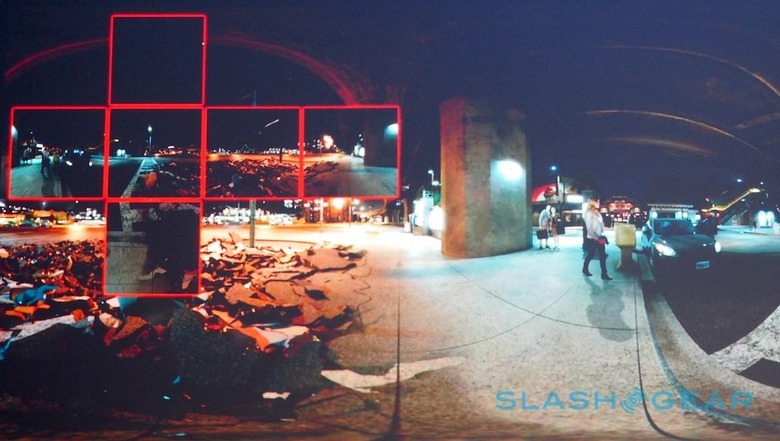

Some work also needed to be done on actually delivering Help to those devices. When you're pumping the equivalent of three 4K videos simultaneously into a phone, you quickly run the risk of bumping into the limits of its graphics processing power, so ATAP cooked up a rendering engine that only works on what is visible at any one time.

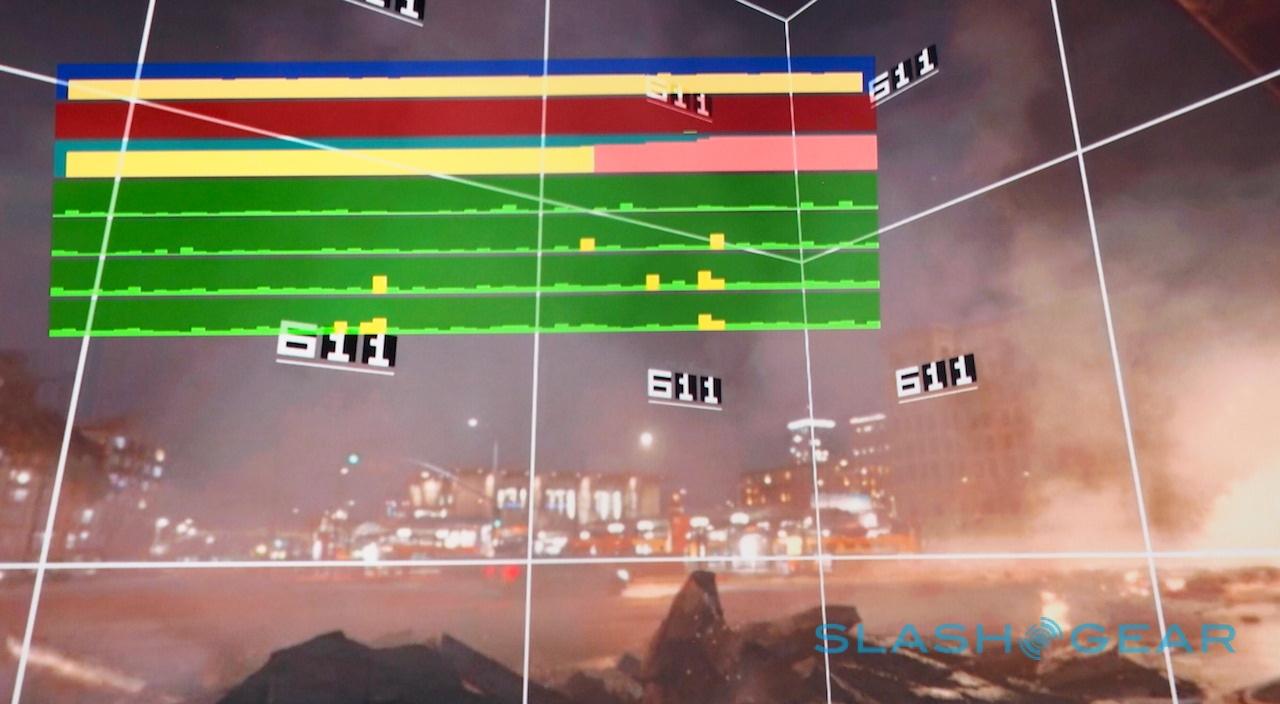

The overall scene is split into multiple tiles, and each is decoded at a resolution that varies depending on what's happening and what GPU performance is available. As a result, at most 25 percent of the data is actually required.
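To get a feel for why so little of the data is needed, here is a minimal sketch of view-dependent tile selection. It is not ATAP's engine: the 8x4 tile grid, the field-of-view numbers, and the function names are all assumptions made for illustration, and the overlap test is a flat angular approximation that ignores distortion near the top and bottom of the sphere.

```python
def angular_diff(a, b):
    """Smallest signed difference a - b in degrees, in [-180, 180)."""
    return (a - b + 180.0) % 360.0 - 180.0


def visible_tiles(view_yaw, view_pitch, h_fov, v_fov, cols=8, rows=4):
    """Return the (row, col) tiles that overlap the viewer's window.

    The panorama is assumed to be cut into a cols x rows grid covering
    360 degrees of yaw and 180 degrees of pitch (hypothetical layout).
    """
    tile_w = 360.0 / cols
    tile_h = 180.0 / rows
    visible = set()
    for row in range(rows):
        # Tile centres run from near +90 (top row) to near -90 (bottom row).
        centre_pitch = 90.0 - (row + 0.5) * tile_h
        if abs(centre_pitch - view_pitch) >= (v_fov + tile_h) / 2:
            continue
        for col in range(cols):
            centre_yaw = (col + 0.5) * tile_w
            # Yaw wraps around, so compare using the shortest angular distance.
            if abs(angular_diff(centre_yaw, view_yaw)) < (h_fov + tile_w) / 2:
                visible.add((row, col))
    return visible


def visible_fraction(tiles, cols=8, rows=4):
    """Share of the whole panorama that the visible tiles represent."""
    return len(tiles) / (cols * rows)


# Looking straight ahead with an assumed 60 x 40 degree window.
tiles = visible_tiles(view_yaw=0.0, view_pitch=0.0, h_fov=60.0, v_fov=40.0)
print(sorted(tiles), visible_fraction(tiles))
```

With these made-up numbers only four of the 32 tiles need decoding, which is in the same spirit as the "at most 25 percent" figure quoted above. A real player would presumably also fetch neighbouring tiles at lower resolution so that a quick turn of the phone does not reveal blank areas.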

How, though, do you make sure the viewer is actually looking at the right part of the scene when the action is happening? That required a new set of camera composition and direction techniques, which effectively guide the eye through the movie while still allowing you to look around and see whatever else is happening.

The film is paired with Dynamic Virtual Surround sound, which relies on a combination of ambisonics, binaural rendering, and smartphones' ability to do real-time 360 audio decoding to deliver just the right soundtrack through a regular set of stereo headphones.
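The general idea behind head-tracked ambisonic playback can be sketched in a few lines. This is a simplified illustration, not Google's implementation: it assumes horizontal-only first-order B-format in the traditional FuMa convention, and it stands in a pair of virtual cardioid microphones for true binaural rendering, which would normally filter the signal with head-related transfer functions. All function names are hypothetical.

```python
import math

SQRT2 = math.sqrt(2.0)


def encode_fuma(sample, azimuth_deg):
    """Encode a mono sample into horizontal B-format (W, X, Y).

    azimuth_deg is measured counterclockwise from straight ahead,
    so +90 is hard left. W carries the FuMa 1/sqrt(2) weighting.
    """
    a = math.radians(azimuth_deg)
    return (sample / SQRT2, sample * math.cos(a), sample * math.sin(a))


def rotate_for_head_yaw(w, x, y, head_yaw_deg):
    """Counter-rotate the sound field by the listener's head yaw.

    Sources then stay fixed in the world while the phone turns.
    """
    p = math.radians(head_yaw_deg)
    x_rot = x * math.cos(p) + y * math.sin(p)
    y_rot = y * math.cos(p) - x * math.sin(p)
    return (w, x_rot, y_rot)


def decode_stereo(w, x, y):
    """Decode with virtual cardioids aimed hard left and hard right.

    x is unused because both virtual microphones point along the Y axis.
    """
    left = 0.5 * (SQRT2 * w + y)
    right = 0.5 * (SQRT2 * w - y)
    return (left, right)


# A sound placed hard left, heard as the listener turns to face it.
field = encode_fuma(1.0, azimuth_deg=90.0)
for yaw in (0.0, 90.0, 180.0):
    left, right = decode_stereo(*rotate_for_head_yaw(*field, yaw))
    print(f"head yaw {yaw:5.1f}: L={left:.2f} R={right:.2f}")
```

As the head turns toward the source, the sound moves from the left channel to the centre and then over to the right, which is the effect that keeps the soundtrack anchored to the scene rather than to the phone.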

Help lands first on Android, but there'll be a new iOS version of the Spotlight Stories app for iPhone "very soon after," ATAP says. This summer, it'll be accessible natively in YouTube, at which point we're likely to see what has so far been a relatively niche project explode.
