Facebook's Fake News Fight Takes Publisher Check Nationwide

Facebook is expanding its fake-news-fighting publisher info system across the social network, offering readers background insight before they decide whether or not to trust what they read. The system was launched as a pilot last year as a way for Facebook to counter accusations that it allowed misleading or downright incorrect stories to proliferate across the site and influence, among other things, the 2016 US presidential election.

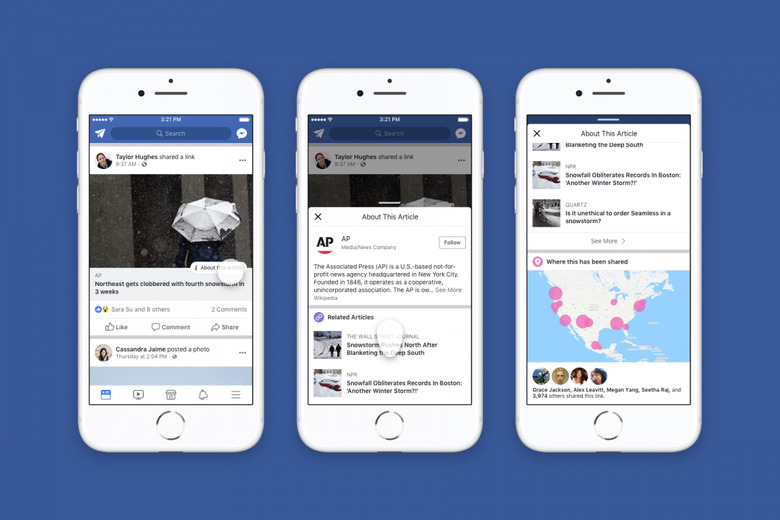

According to the site, its research suggests that a few key facts help stop misleading stories from spreading. Facebook shows a link to the publisher's Wikipedia entry, along with data on how many times the article has been shared on the site, and where. It also includes related articles on the same topic.

For this expansion, Facebook is adding two new features. "More From This Publisher" offers a snapshot of other recent articles from the same source, presumably as a way to flag any extremist views. "Shared By Friends" shows which members of a person's friends group have also shared that same article.

If the author of the article has set up their account in the right way, meanwhile, Facebook will also use the tool to link to information on who wrote the piece. That could include a Wikipedia article if available, other recent articles they've penned, and the option to follow their page or profile. That aspect, Facebook says, will only be a small test initially.

Still, Facebook's strategy hasn't been met with universal acclaim. There's a reasonable argument to be made that the background information system only attempts to shift the onus of fact-checking onto readers, rather than taking any responsibility for it. Facebook, after all, still gets the traffic and the content sharing, while washing its hands of whether the news article is actually based in truth or intentionally manipulative.

It's also heavily reliant on Wikipedia, a route that has already landed YouTube in hot water. Google's video streaming site announced last month that it would link to Wikipedia on more controversial videos, such as those that deal with conspiracy theories and UFOs. However, Wikipedia denied any prior knowledge of the system, leading to criticism that YouTube was taking advantage of the open-access online encyclopedia without paying for the privilege.

In addition, YouTube's move raised concerns that the new traffic to contentious Wikipedia pages could lead to an uptick in vandalism, as groups argue over topics. It's unclear whether Facebook is paying or donating to Wikipedia for the use of its data. The new feature goes live across the US today.