Apple MultiTouch Fusion Patent Mixes Touch With Voice & Biometrics

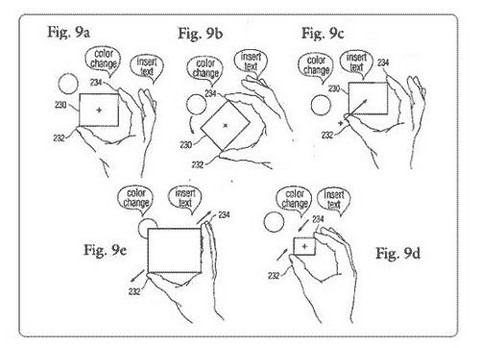

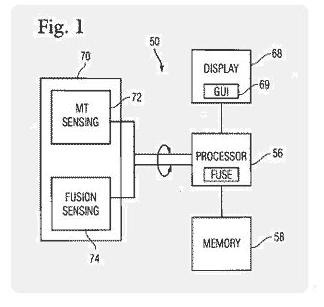

Apple has submitted a patent application describing a system in which multitouch input is augmented with input from other sources, for instance voice control, facial expression or even biometric data. Entitled "Multi-Touch Data Fusion", the application describes sensors that could be built into, or around, a multitouch panel to measure voice, temperature, vibration, light and more. One possible implementation would be manipulating an on-screen control (for instance a dial) while saying "color change"; the dial would then control the hue.

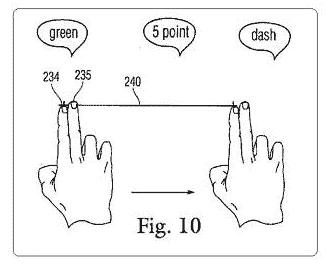

Alternatively, drawing or other media controls could be manipulated with touch and voice commands at the same time. In Fig. 10, the line (240) being drawn by the movement of the fingers has its properties (e.g. color, thickness and form) changed by voice commands issued while the fingers are in motion. The result is fewer interruptions to the multitouch input.
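To make the idea concrete, here is a minimal Python sketch of how touch and voice events might be fused during a drawing stroke. It is an illustration only, not the method described in the patent: the `TouchMove`, `VoiceCommand` and `fuse_stroke` names, the timestamp-based merge and the default style are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union


@dataclass
class TouchMove:
    t: float  # timestamp in seconds (hypothetical field)
    x: float
    y: float


@dataclass
class VoiceCommand:
    t: float  # timestamp in seconds (hypothetical field)
    prop: str  # property to change, e.g. "color" or "thickness"
    value: object


Event = Union[TouchMove, VoiceCommand]


def fuse_stroke(events: List[Event]) -> List[Tuple[float, float, dict]]:
    """Merge touch and voice events by time.

    Each drawn point is tagged with the style that was active when the
    finger reached it, so a voice command changes the line mid-stroke
    without interrupting the touch input.
    """
    style = {"color": "black", "thickness": 1}  # assumed defaults
    points = []
    for ev in sorted(events, key=lambda e: e.t):
        if isinstance(ev, VoiceCommand):
            # A voice command updates the style for all later points.
            style = {**style, ev.prop: ev.value}
        else:
            # A touch move adds a point carrying a copy of the current style.
            points.append((ev.x, ev.y, dict(style)))
    return points


events = [
    TouchMove(0.0, 0, 0),
    TouchMove(0.1, 1, 0),
    VoiceCommand(0.15, "color", "red"),
    TouchMove(0.2, 2, 0),
    VoiceCommand(0.25, "thickness", 3),
    TouchMove(0.3, 3, 0),
]
stroke = fuse_stroke(events)
for point in stroke:
    print(point)
```

In this sketch the finger never lifts: the first two points stay black, and later points pick up the red colour and the thicker line as each voice command arrives.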

Other aspects of the patent include a system for recognising hand profiles – that is, the angle of the hands and which finger is making contact with a multitouch surface – that is either built into a desk or makes use of the webcam in a MacBook screen bezel. The webcam could also be used to pick up gestures performed in front of the notebook, which would then be interpreted as multitouch commands.

Apple suggests that alternative sensors could include:

"Biometric sensors, audio sensors, optical sensors, sonar sensors, vibration sensors, motion sensors, location sensors, light sensors, image sensors, acoustic sensors, electric field sensors, shock sensors, environmental sensors, orientation sensors, pressure sensors, force sensors, temperature sensors and/or the like" (Apple patent)

[via MacNN]