Your Whole Life Documented Electronically

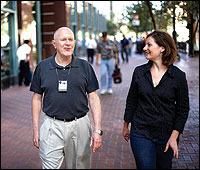

Having started a new job at the beginning of the year, I regularly run into people I recognise but whose name, workplace or connection to me I can't recall. It's frustrating and potentially embarrassing. It's not a problem likely to occur in Gordon Bell's life, however: everything he's seen, everything he's read, all the sites he's browsed, the IM and email conversations he's had, it's all stored electronically. That comes to 150GB over the past six years, in fact.

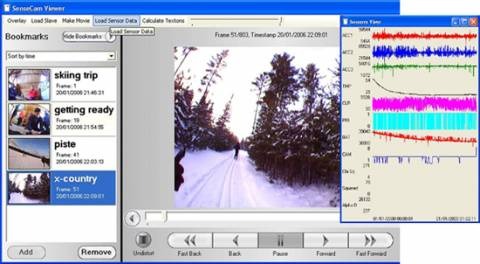

The subject of a Scientific American article on how technology is augmenting memory, Bell uses a system called MyLifeBits, which pulls together all the strands of his online and offline life and makes them searchable through tagging and contextual linking. SenseCam, a wearable camera developed by Microsoft Research, is studded with sensors that trigger shots when light, movement, temperature or proximity to another person is detected. GPS datalogging stores the location where each image was taken.

It's a continually evolving work in progress, of course; facial recognition capabilities are being developed so that not every photo has to be tagged by hand with who is in it. That would also allow the computer to map a timeline of your contact with an individual, whether through recorded phone calls, emails, calendar entries or physical meet-ups.

The article is well worth reading. Personally, I'm looking forward to a time when a minute display in my glasses overlays details of just who I'm talking to (and how I know them) onto my field of vision.

Scientific American [via Slashdot]