Wearable Projection Computer Project: Internet 'Sixth Sense'

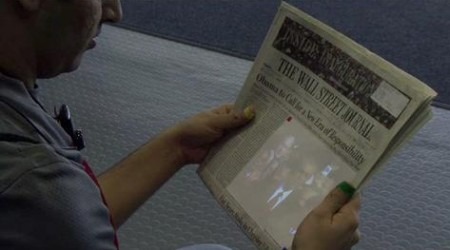

A group of MIT students have developed a wearable computer that projects its display onto any nearby surface and is controlled by hand gestures and voice recognition. A prototype was demonstrated at TED this week, capable of projecting a watch face onto the user's wrist after they trace a circle over it, capturing images framed by their fingers, and pulling up information about an individual and projecting it onto them during conversation. Video demos after the cut.

When he encounters someone at a party, the system projects a cloud of words on the person's body to provide more information about him: his blog URL, the name of his company, his likes and interests. "This is a more controversial [feature]," Maes said over the audience's laughter. In another frame, Mistry picks up a boarding pass while he's sitting in a car. He projects the current status of his flight and gate number, retrieved from the airline's flight-status page, onto the card.

The students, led by Pranav Mistry, are part of the Fluid Interfaces group at MIT Media Lab; the prototype they demonstrated consisted of a battery-powered 3M pico-projector, a cellphone and a webcam, together with four colored Magic Marker lids worn on the fingertips. The hardware, costing less than $350, can track the lids and interpret the movements according to predefined functions, such as pulling up internet reviews of a book picked up in a store, and projecting them onto the book itself.
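The article doesn't say how the lids are actually tracked, but the basic idea of picking a brightly colored marker out of a webcam frame can be sketched in a few lines. The following is only an illustration under stated assumptions: frames are plain lists of RGB tuples, and `find_marker`, its `tolerance` parameter and the synthetic test frame are all hypothetical names made up for this example, not anything from the MIT prototype.

```python
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0-255
Frame = List[List[Pixel]]      # frame[row][col]


def find_marker(frame: Frame, target: Pixel,
                tolerance: int = 40) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of all pixels whose R, G and B values
    are each within `tolerance` of `target`, or None if no pixel matches.

    Hypothetical helper: a naive color threshold, not the project's method.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, pixel in enumerate(row):
            if all(abs(c - t) <= tolerance for c, t in zip(pixel, target)):
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


# Tiny synthetic 4x4 "frame": black background with a 2x2 reddish patch
# standing in for a red marker lid.
BLACK = (0, 0, 0)
RED = (220, 30, 30)
frame = [[BLACK] * 4 for _ in range(4)]
for y in (1, 2):
    for x in (2, 3):
        frame[y][x] = RED

red_tip = find_marker(frame, target=(255, 0, 0), tolerance=60)
green_tip = find_marker(frame, target=(0, 255, 0), tolerance=60)
```

Running one such lookup per lid color on every frame would give four fingertip positions over time, which is the kind of input a gesture recognizer needs. A real system would have to cope with lighting changes and background clutter that this sketch ignores.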

The technology, which has been patented, also allows mobile access to email, phone and other communication methods. A menu can be pulled up via a preset gesture, and then shortcuts – such as tracing an @ symbol to call up email – used to access different functions. More information on the project here [pdf link].

[tip o' the hat to s_constantine]