Startling White Dwarf Study Joins Dots On Life's Building Block

A new theory could explain one of the biggest mysteries about how the Milky Way and other galaxies were created by exploring the role white dwarf stars play in creating the building blocks of the universe. Carbon is instrumental not only to galaxy formation but to life, yet its origins in the Milky Way are still unclear.

That's not to say there hasn't been plenty of speculation. Carbon atoms are created inside stars, as a product of helium fusion. How that carbon actually gets from there to flooding a galaxy, though, has been contentious.

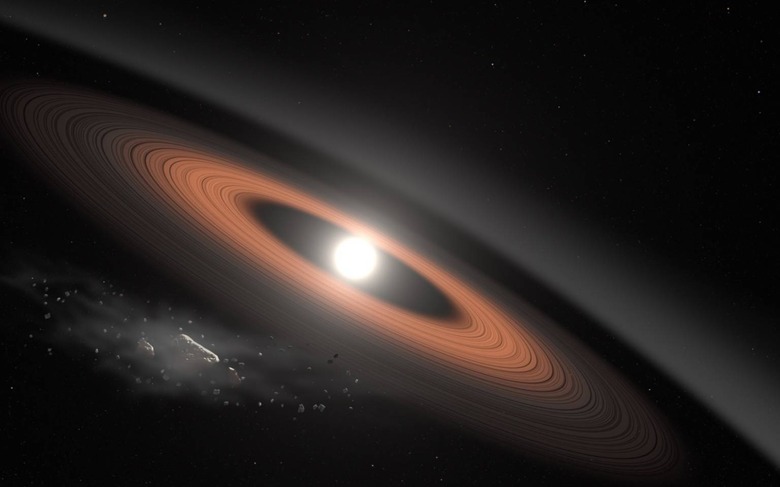

One possibility is that low-mass stars had their carbon-rich envelopes blown away by stellar winds as they matured into white dwarfs. In that picture, the stars shed carbon, among other elements, in the final stages of their lives, leaving behind dense remnants that cool over the course of billions of years. Another theory, though, suggests that much larger stars exploded, and the resulting supernovae spread the carbon that would go on to form part of the Milky Way, in which Earth is located.

Stepping back in time is obviously not something astronomers can do, but analyzing white dwarfs found in open star clusters in the galaxy gives them a much better idea of which explanation holds. Observations of such clusters, each consisting of several thousand stars of roughly the same age held together by mutual gravitational attraction, were carried out at the W. M. Keck Observatory in 2018.

Importantly, the stars in each cluster all formed from the same molecular cloud. By measuring the white dwarfs' masses, astronomers were able to derive the stars' masses at birth. That's because the link between the two is a well-studied quantity in astrophysics, known as the initial-final mass relation.

What wasn't expected, though, was that the open cluster white dwarfs didn't fit that relation. Instead, the old stars were more massive than they "should" have been.

The result is a new mass boundary, on either side of which stars behave differently in how carbon is stripped away as they evolve, and in how their own mass is affected. Stars more massive than 2 solar masses create new carbon atoms, which are transferred to the surface and then spread through the universe by stellar winds. Stars less massive than 1.5 solar masses, however, do not.

"In other words, 1.5 solar masses represents the minimum mass for a star to spread carbon-enriched ashes upon its death," the researchers, led by Paola Marigo at the University of Padua in Italy and Enrico Ramirez-Ruiz, professor of astronomy and astrophysics at UC Santa Cruz, conclude. "These findings place stringent constraints on how and when carbon, the element essential to life on Earth, was produced by the stars of our galaxy, eventually ending up trapped in the raw material from which the Sun and its planetary system were formed 4.6 billion years ago."

While the research clearly explains some of the history of our own Milky Way, there are implications for the broader universe, too. "The initial-to-final mass relation is also what sets the lower mass limit for supernovae, the gigantic explosions seen at large distances and that are really important to understand the nature of the universe," study coauthor Pier-Emmanuel Tremblay of the University of Warwick explains.

Bright stars that are close to death, similar to the progenitors of the white dwarfs being studied, are responsible for the vast majority of light emitted by very distant galaxies. It's that light which telescopes collect as astronomers attempt to understand how galaxies and other cosmic features develop.