Samsung Opens Bixby To Developers In Scalable AI Platform Play

Samsung isn't done with Bixby. The virtual assistant may not have reached the usefulness of Alexa or the Google Assistant, or the consumer awareness of Siri, but Samsung is betting that embracing third-party developers and expanding Bixby's availability will make the difference in the long term.

"We believe Bixby is more than just a voice assistant, and that is why we continue to invest in AI," Eui-Suk Chung, EVP and head of software and artificial intelligence at Samsung, said during the opening keynote at the company's developer conference in San Francisco, CA today.

Bixby is instrumental in fulfilling the promise Samsung made at CES 2018 in January. At the time, the company said that all of its devices – from washing machines, through TVs and refrigerators, to smart speakers and phones – would be connected and AI-enabled. However, it has taken Samsung some time to explain exactly how that will happen or, indeed, why you might want it to.

"AI will truly transform every experience we have with consumer electronics," Chung insisted. "With the new Bixby, users don't need to know how to do something; they just need to know what they want to do, and Bixby will take care of the rest. It will be all around you, all the time, working intelligently in the background to empower you to do more."

Samsung says there will be three elements to turning Bixby into a "scalable AI platform." "First, we are putting Bixby on more devices," Chung explained, "creating new user experiences and new AI experiences for consumers." That rollout will start with TVs, refrigerators, tablets, and speakers. "We also expect to see Bixby on more connected third-party devices in future."

Bixby will also support five new languages: German, French, Italian, UK English, and Spanish. That should significantly expand the number of people who can actually communicate with Bixby on a day-to-day basis.

Arguably most important, though, will be making Bixby open. "Now, for the first time, we are opening Bixby up to all of you developers," Chung said. "This means giving you a brand new set of developer tools – actually the same tools we use – so you can build your own Bixby experiences."

Those experiences will be distributed through a new Bixby Marketplace, a storefront for experiences built on the assistant. It'll give Samsung a platform to highlight just what its AI can do, which is vital if it is to explain why its vision of assistant technology is different from, and potentially better than, what Google, Apple, Amazon, and others are doing. Just as important, it'll allow developers to sell their Bixby experiences.

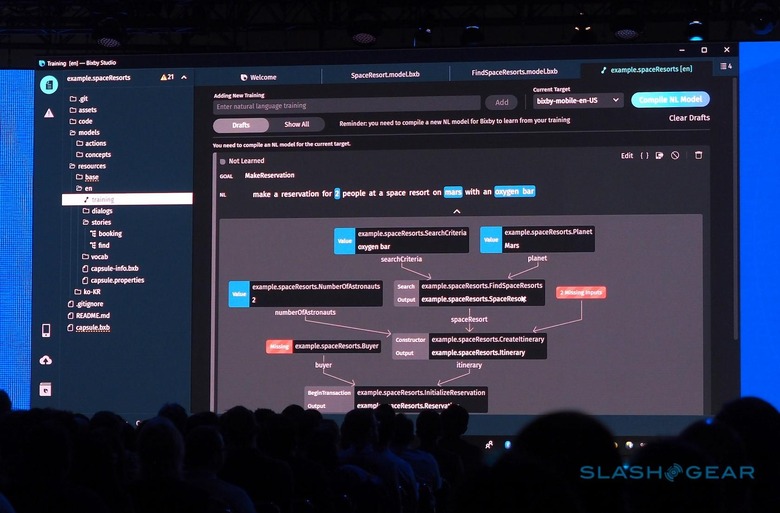

To bring all that to fruition, Samsung today launched the new Bixby Developer Studio. Created by the Viv Labs team, it uses what Samsung calls "dynamic program generation": a way to build new apps without the developer having to anticipate every possibility up front.

"For decades, the relationship between the developer and the computer has been a simple one," Adam Cheyer, CTO of Viv Labs, explained. "The developer tells the computer what to do, hand-coding every single process." The alternative so far has been to let AI work out the logic on its own, which leaves developers uncertain about how the artificial intelligence is actually operating.

The Bixby Developer Studio will take a different, more collaborative approach. "Developers describe the what," Cheyer said, "the AI then adds the how."

"As a developer, you extend Bixby's knowledge and capabilities by using a 'capsule'," Cheyer said. "Think of a 'capsule' as like a knowledge pack from the Matrix movies." For example, a "Space capsule" might allow a user to make a reservation at a space resort. Rather than the developer writing out every use case and interaction, the AI handles the basics of a request such as "make a reservation for two people at a space resort on Mars with an oxygen bar."

The developer would flag "Mars" as a location, "two people" as the number of astronauts, and "oxygen bar" as a facility. The AI then automatically generates the code required to build that app. Each stage in the app can use basic UI elements, like calendars, or be triggered by text input from the developer.
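Samsung didn't show code for this in the keynote, and real capsules are built with Samsung's own tooling and file formats, not Python. What follows is only a toy sketch of the idea Cheyer describes, under loudly stated assumptions: the developer declares concepts (location, number of astronauts, facility) and actions with typed inputs and outputs, and a simple planner chains those actions to satisfy the annotated request. Every class, function, and resort name here is hypothetical, and a plain backward-chaining search stands in for whatever Viv's "dynamic program generation" actually does.

```python
import typing
from dataclasses import dataclass

# Hypothetical concepts a "Space capsule" might declare (illustrative only,
# not Bixby's real capsule format).
@dataclass(frozen=True)
class Location:
    name: str

@dataclass(frozen=True)
class NumberOfAstronauts:
    count: int

@dataclass(frozen=True)
class Facility:
    name: str

@dataclass(frozen=True)
class Resort:
    name: str
    location: Location
    facilities: tuple

@dataclass(frozen=True)
class Reservation:
    resort: Resort
    guests: NumberOfAstronauts

# Made-up inventory for illustration.
RESORTS = [
    Resort("Olympus Lodge", Location("Mars"), (Facility("oxygen bar"),)),
    Resort("Tranquility Inn", Location("Moon"), (Facility("low-g pool"),)),
]

# Actions: the developer only states inputs and outputs ("the what").
def find_resort(location: Location, facility: Facility) -> Resort:
    for resort in RESORTS:
        if resort.location == location and facility in resort.facilities:
            return resort
    raise LookupError(f"no resort on {location.name} with {facility.name}")

def book(resort: Resort, guests: NumberOfAstronauts) -> Reservation:
    return Reservation(resort, guests)

ACTIONS = [find_resort, book]

# Planner: finds an action whose return type matches the goal, then
# recursively produces its inputs ("the how"). No cycle detection; toy only.
def plan(goal: type, known: dict) -> object:
    if goal in known:
        return known[goal]
    for action in ACTIONS:
        hints = typing.get_type_hints(action)
        if hints.get("return") is goal:
            args = {name: plan(t, known) for name, t in hints.items() if name != "return"}
            return action(**args)
    raise ValueError(f"no action produces {goal.__name__}")

# Developer annotations for the utterance
# "make a reservation for two people at a space resort on Mars with an oxygen bar"
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4}

def to_value(text: str, concept: type) -> object:
    if concept is NumberOfAstronauts:
        return NumberOfAstronauts(NUMBER_WORDS[text.split()[0]])
    return concept(text)

annotated = [
    ("two people", NumberOfAstronauts),
    ("Mars", Location),
    ("oxygen bar", Facility),
]
known = {concept: to_value(text, concept) for text, concept in annotated}
result = plan(Reservation, known)
print(result.resort.name, result.guests.count)  # Olympus Lodge 2
```

The developer never writes the find-then-book sequence here; the planner discovers it from the declared types, which is roughly the division of labor Cheyer describes.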

What Samsung hopes will set all this apart is just how much can happen within a Bixby conversation. There's no need to push users into a separate app, because transactions, natural language, and everything else can be enclosed in a "capsule" loaded within that conversation.

Certainly, making AI interactions both easier and more functional for users, and easier for developers to create, is vital if Samsung wants Bixby to gain traction. When your virtual assistant is known primarily for the frustration of not being able to remap the dedicated Bixby button on your flagship phones, that's a big hurdle to overcome. What remains to be seen is just how many of the developers at Samsung's Developer Conference actually go on to release meaningful Bixby experiences, and whether third-party device makers are similarly on board.