NVIDIA TITAN V Is Company's Most Powerful PC GPU Yet

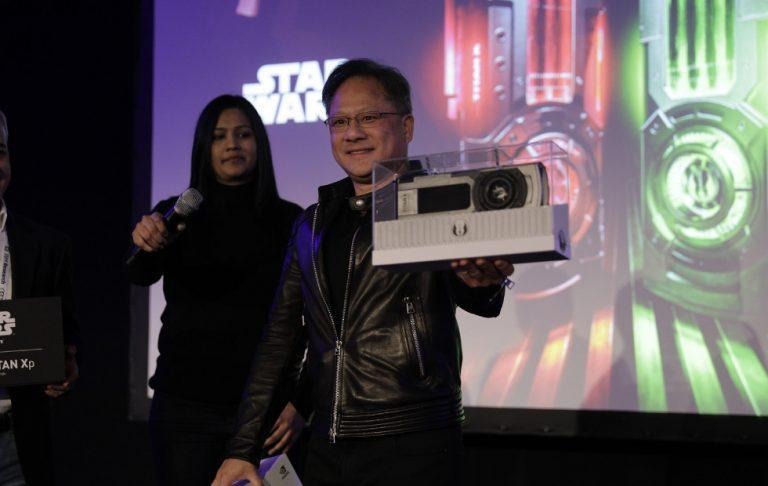

Last year, NVIDIA revived the TITAN X, dubbed the new TITAN X, to reclaim the crown from the GTX 1080. Early this year, it announced the TITAN Xp, dubbed its most powerful desktop GPU for Macs. So what follows it? Not the TITAN Xo or even the TITAN Y. Instead, it's the new TITAN V, which NVIDIA boasts is 9 times more powerful than its predecessor. And, naturally, that makes it the perfect fit for research and machine learning.

NVIDIA has found a new, profitable market to target, one that isn't at the mercy of the sometimes mercurial gaming market. As it turns out, its powerful GPUs are just as well suited to the number crunching needed in mathematical and scientific fields as they are to producing breathtaking graphics. It should be no surprise, then, that NVIDIA's marketing for its recent "most powerful GPUs" has a thing or two to say about research and AI.

The new TITAN V perfectly fits that purpose. It is based on NVIDIA's newest Volta GPU platform, which the company unveiled last May. The TITAN V boasts 110 teraflops of power, almost 10 times as much as the TITAN X before it. That alone should make those who need computing power in a small box, a.k.a. researchers, drool.

It isn't just about raw horsepower, either. The TITAN V uses a combined L1 data cache and shared memory unit to minimize the latency involved in moving data around. It also has 12 GB of High-Bandwidth Memory, or HBM2, touted to be the next evolution of graphics memory.

All that power naturally comes at a price. $2,999, to be exact. NVIDIA is, however, making the prospect a bit sweeter for researchers by making its GPU-optimized AI software available for free. Via its own NVIDIA GPU Cloud, of course.

SOURCE: NVIDIA