MIT Researchers Control Robots With Brainwaves And Gestures

Researchers are always looking for ways to make controlling robots more natural for human operators. MIT is making strides in controlling robots with brainwaves and hand gestures, which could mean that robots will one day be controlled with nothing more than a thought from a human operator.

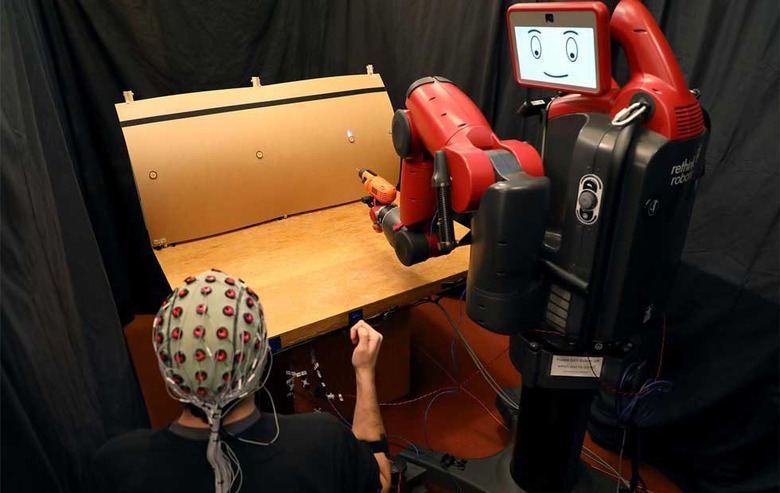

The system being developed at MIT's Computer Science and Artificial Intelligence Laboratory aims to let users instantly correct a robot's mistakes as easily as lifting a finger or thinking about the right action. The team has been working on this kind of control system for some time, and the new work extends the scope of its earlier research.

The system monitors brain activity and can detect in real time when a person notices an error as a robot performs a task. A second interface, which measures muscle activity, lets the person make hand gestures to scroll through and select options for the robot to execute. The team's earlier work could only recognize brain signals when people trained themselves to think in very specific, arbitrary ways.

That earlier system was trained to recognize the specific signals generated when the operator looked at different light displays corresponding to tasks the robot could perform. The new project uses Baxter, a robot from Rethink Robotics. With human supervision, the rate at which the robot chose the correct target rose from 70% to over 97%.

The team used an electroencephalography (EEG) machine to record brain activity and an electromyography (EMG) machine to detect muscle activity. By monitoring both brain and muscle signals, the new system can pick up natural gestures along with "snap" decisions about whether something is going wrong.

SOURCE: MIT