MIT Develops Systems For Autonomous Cars To Help Classify Drivers

MIT researchers have created a new system that might one day help autonomous cars operate safely alongside human drivers. The team has been exploring whether self-driving cars can be programmed to classify the social personalities of other drivers, allowing the autonomous car to better predict what other vehicles will do.

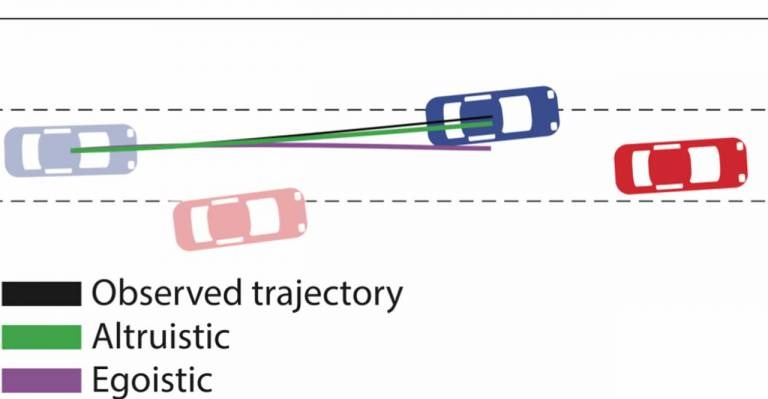

Better predictions would let the autonomous car drive more safely around other vehicles. The team published a paper that integrates tools from social psychology to classify driving behavior according to how selfish or selfless a particular driver is. The team used a measure called social value orientation (SVO), which represents the degree to which someone is selfish (egoistic) versus altruistic or cooperative (prosocial).
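To make the SVO idea concrete, here is a minimal sketch of one common way the social psychology literature expresses it: as an angle that trades off a person's own reward against someone else's. The function name `svo_utility`, the reward numbers, and the use of this exact cosine/sine weighting are illustrative assumptions, not details confirmed by the article.

```python
import math


def svo_utility(own_reward, other_reward, svo_angle_deg):
    """Blend own and other reward using an SVO angle (hypothetical helper).

    Assumption: 0 degrees means purely egoistic (only own reward counts),
    and larger angles put more weight on the other driver's reward.
    """
    phi = math.radians(svo_angle_deg)
    return math.cos(phi) * own_reward + math.sin(phi) * other_reward


# Made-up rewards for a driver deciding whether to let another car merge:
# yielding costs the driver a little and helps the merging car a lot.
yield_own, yield_other = -1.0, 3.0
push_own, push_other = 1.0, -3.0

for angle in (0.0, 45.0):
    u_yield = svo_utility(yield_own, yield_other, angle)
    u_push = svo_utility(push_own, push_other, angle)
    choice = "yield" if u_yield > u_push else "push ahead"
    print(f"SVO angle {angle:.0f} degrees: {choice}")
```

Under these made-up numbers, a driver with an angle near zero prefers to push ahead, while a driver with a larger angle prefers to yield, which is the kind of difference the classification is meant to capture.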

The system then estimated the drivers' SVOs to create a real-time driving trajectory for the autonomous car. The algorithm was tested on two tasks: merging lanes and making unprotected left turns. It allowed the team to predict the behavior of other cars 25% more accurately.

In left-turn simulations, the car with the new algorithm knew to wait when the approaching car had a more egoistic driver and to make the turn when the other driver was more prosocial. While not yet robust enough to be used on real roads, the new algorithm could help drivers in different ways.
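The left-turn behavior described above can be pictured as a simple decision rule. The sketch below is a toy illustration only: the function `should_turn_now`, the threshold values, and the gap-based fallback are all hypothetical and are not taken from the MIT system, which plans a full driving trajectory rather than applying a single cutoff.

```python
def should_turn_now(oncoming_svo_deg, gap_seconds,
                    yield_threshold_deg=30.0, safe_gap_seconds=6.0):
    """Decide whether to start an unprotected left turn (toy rule).

    All parameters are hypothetical:
    - oncoming_svo_deg: estimated SVO angle of the approaching driver,
      where a higher value means more prosocial.
    - gap_seconds: time until the approaching car reaches the intersection.
    """
    # A large enough gap is safe regardless of the other driver's style.
    if gap_seconds >= safe_gap_seconds:
        return True
    # With a tight gap, turn only if the other driver is likely to yield.
    return oncoming_svo_deg >= yield_threshold_deg


print(should_turn_now(oncoming_svo_deg=5.0, gap_seconds=3.0))
print(should_turn_now(oncoming_svo_deg=45.0, gap_seconds=3.0))
```

With a tight gap, the rule waits for the driver estimated as egoistic and turns in front of the driver estimated as prosocial, mirroring the simulated behavior reported in the article.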

The algorithm could be used to warn drivers, via the rear-view mirror, that a car in their blind spot has an aggressive driver, allowing the driver to adjust accordingly. It may also one day allow autonomous cars to become more human-like in their behavior. One issue with current autonomous cars is that they are programmed to assume all humans act the same way. That makes them conservative in decision-making at four-way stops and other intersections, which can anger other drivers.