Microsoft's Project Brainwave Brings Real-Time Artificial Intelligence

Microsoft has shared additional details about Project Brainwave, a deep-learning acceleration platform for real-time artificial intelligence. This isn't the first time we've heard about Project Brainwave, but there is more to go on this time around. Microsoft presented the project recently at Hot Chips 2017, explaining that it 'achieves a major leap forward in both performance and flexibility for cloud-based serving of deep learning models.'

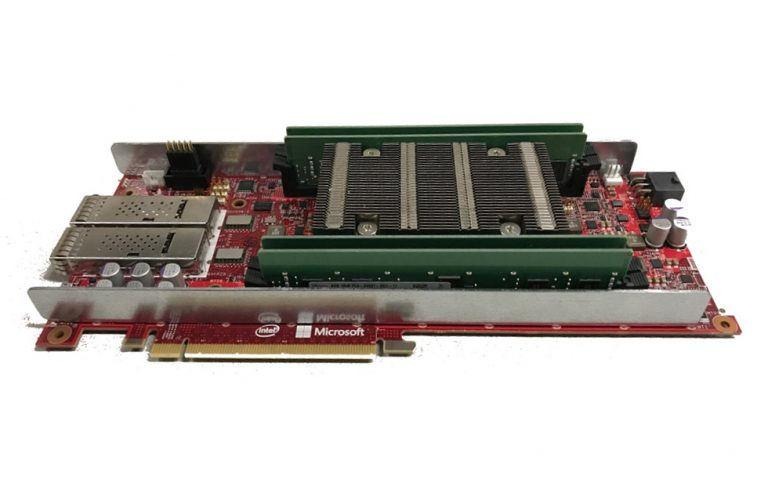

Microsoft touched on Project Brainwave a couple of times last year, but this is the first time it has provided any substantial details. The company explains that Project Brainwave leverages the FPGA infrastructure it has rolled out in recent years. The field-programmable gate arrays (FPGAs) are joined by deep neural network processing units.

Microsoft explains that the overall Project Brainwave system is composed of a distributed system architecture, FPGAs with synthesized DNN engines, and a compiler and runtime that handle trained models. Unlike some other companies that use hardened DNN processing units (DPUs) for similar technology, Microsoft uses what it calls 'soft' DPUs, which can be scaled based on data types.

Microsoft researchers recently demonstrated the technology in action. In a blog post about the demo, Microsoft explained that the sustained performance it achieved on Intel's Stratix 10 FPGA silicon was 'record-setting,' and that it achieved 'unprecedented levels of demonstrated real-time AI performance on extremely challenging models.'