Microsoft Soundscape Helps The Visually Impaired Hear The World

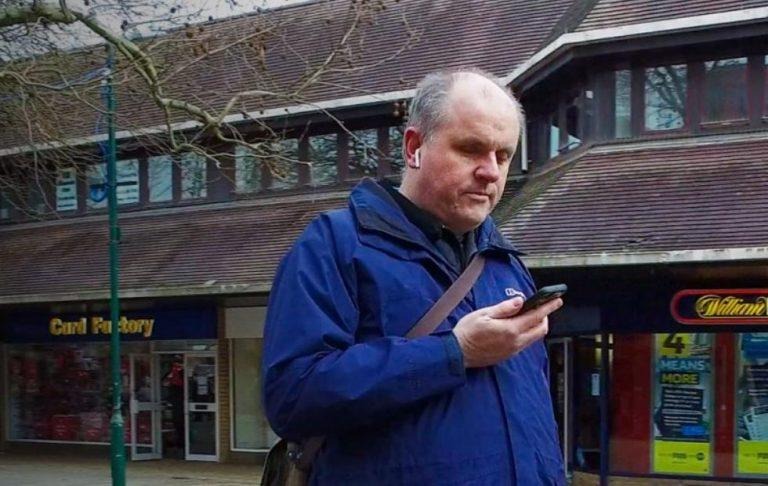

With so much importance placed on screens, apps, and user interfaces, smartphones have become visual devices first and communication devices second. That immediately puts a group of people at a disadvantage: those who have trouble seeing or cannot see at all. Mobile technology often seems to forget that this part of the population would benefit most from its advances. So it comes as a relief when developers, especially big companies, pay attention to accessibility, as Microsoft Research has with its new Soundscape iOS app, which lets people with visual impairments "see" the world around them using their ears.

Soundscape doesn't actually rely on any revolutionary new technology, machine learning, or hardware. OK, maybe a bit of machine learning is involved in handling locations, but on the whole it uses technologies and features already found in phones and all around us.

Soundscape is built around the concept of beacons. Instead of physical hardware beacons, however, it uses virtual pins that the user or others have placed at specific locations, much like pins in a maps app. Users who are blind or have low vision can then hear information about these beacons as they approach them, or manually search for nearby points of interest.
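To make the virtual-beacon idea more concrete, here is a minimal sketch of how an app might decide which pins are close enough to announce. It assumes pins are stored as latitude/longitude pairs, uses the haversine great-circle distance, and picks a hypothetical 50 m announcement radius. The `Beacon` class, `nearby_beacons` function, and radius are illustrative only; this is not Soundscape's actual code, API, or triggering logic.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


@dataclass
class Beacon:
    # Hypothetical virtual pin: a label plus latitude/longitude in degrees
    name: str
    lat: float
    lon: float


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def nearby_beacons(user_lat, user_lon, beacons, radius_m=50.0):
    """Return (beacon, distance_m) pairs within radius_m of the user, closest first.

    The 50 m default is an assumed value chosen for illustration.
    """
    hits = []
    for b in beacons:
        d = haversine_m(user_lat, user_lon, b.lat, b.lon)
        if d <= radius_m:
            hits.append((b, d))
    hits.sort(key=lambda pair: pair[1])
    return hits


if __name__ == "__main__":
    # Made-up example pins near a made-up user position
    pins = [
        Beacon("Coffee shop", 47.6205, -122.3493),
        Beacon("Bus stop", 47.6208, -122.3493),
        Beacon("Library", 47.6300, -122.3493),
    ]
    for beacon, dist in nearby_beacons(47.6205, -122.3490, pins):
        print(f"{beacon.name}: {dist:.0f} m")
```

A real app would also need to avoid repeating the same callout every time the location updates, for example by remembering which beacons were already announced.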

It's not simply reading map information aloud, though. It uses 3D audio to make the audio notes sound as if they are actually coming from the direction of the tagged location. This helps users build a better mental map of the area, something visually impaired people are particularly adept at.
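The directional effect can be roughly illustrated with much simpler math than full 3D audio. The sketch below assumes the phone supplies a compass heading and that beacons are lat/lon pins: it computes the initial great-circle bearing to a beacon, converts it to an angle relative to where the user is facing, and maps that angle to left/right gains with a constant-power stereo pan. Real spatial audio (for example HRTF-based rendering) also conveys front/back and elevation, which plain stereo panning cannot, so treat this as an illustrative stand-in rather than how Soundscape renders sound. All function names here are hypothetical.

```python
import math


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2, in degrees clockwise from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    x = math.sin(dlmb) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    return math.degrees(math.atan2(x, y)) % 360.0


def relative_angle_deg(bearing, heading):
    """Beacon angle relative to the user's facing direction, in (-180, 180]; positive means to the right."""
    rel = (bearing - heading) % 360.0
    return rel - 360.0 if rel > 180.0 else rel


def stereo_gains(rel_deg):
    """Constant-power pan: map a relative angle to (left, right) gains with left**2 + right**2 == 1.

    Plain stereo cannot tell front from back, so angles beyond +/-90 degrees
    are clamped to hard right or hard left.
    """
    clamped = max(-90.0, min(90.0, rel_deg))
    pan = (clamped + 90.0) / 180.0      # 0.0 = hard left, 1.0 = hard right
    theta = pan * math.pi / 2
    return math.cos(theta), math.sin(theta)


if __name__ == "__main__":
    user = (47.6205, -122.3490)
    beacon = (47.6205, -122.3480)       # a made-up pin roughly due east of the user
    b = bearing_deg(*user, *beacon)
    rel = relative_angle_deg(b, heading=0.0)   # user assumed to be facing north
    left, right = stereo_gains(rel)
    print(f"bearing {b:.1f} deg, relative {rel:.1f} deg, L={left:.2f} R={right:.2f}")
```

A constant-power law is used instead of a linear crossfade so the callout's perceived loudness stays roughly steady as it moves from one ear to the other.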

Soundscape is currently available only on iOS, though there doesn't seem to be any technical dependency that would prevent an Android version in the future. For best results, it requires a stereo headset so that audio is heard coming from the proper direction.