AR App-Makers Just Got A Huge Treat Ahead Of HoloLens 2

Microsoft is throwing open HoloLens' more advanced sensors, giving apps for the augmented reality headset access to its 3D mapping abilities and more. The wearable computer has been available to well-heeled developers since early 2016, but only with a recent update to Windows 10 has Microsoft unlocked its full sensor suite.

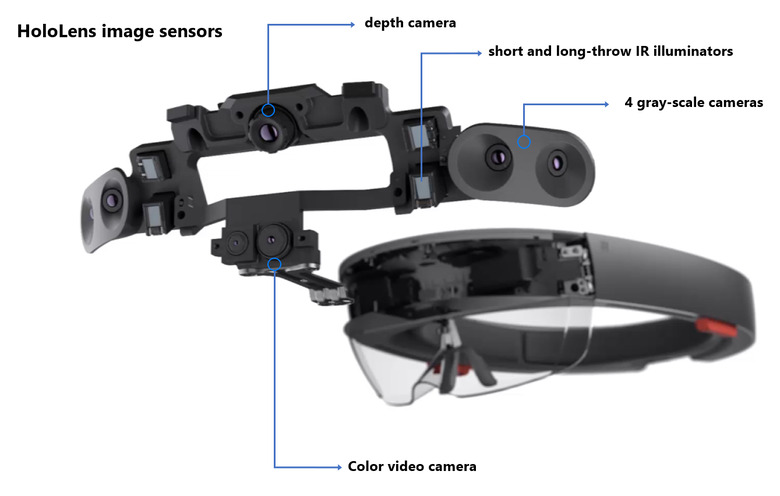

Until now, applications running on HoloLens (effectively a full Windows 10 PC in a wearable form-factor) could access only the video stream from the front-mounted color camera and the audio from its microphones. Those are just part of the headset's sensor array, however.

For instance, HoloLens uses four grayscale cameras to track the environment around it. Two of them face forward, and because they view the same scene from slightly different positions, apps can work out the absolute depth of tracked visual features through triangulation. The other two point further to the sides, widening the field of view. Although these cameras don't capture color, they're "significantly more light-sensitive" than the headset's color camera, Microsoft says.
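Microsoft doesn't spell out its math, but the textbook relation for two rectified cameras is Z = f·B/d: depth Z equals focal length f (in pixels) times the baseline B between the cameras, divided by the disparity d, the horizontal pixel shift of the same feature between the two images. The Python sketch below assumes idealized, rectified pinhole cameras; the function names and every number in it are hypothetical illustrations, not HoloLens specifications.

```python
def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in meters of a feature seen by two rectified cameras: Z = f * B / d.

    Assumes idealized pinhole cameras with parallel optical axes (hypothetical setup).
    """
    if disparity_px <= 0:
        # Zero or negative disparity means the feature is at infinity or the match is bad.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_uncertainty(focal_px: float, baseline_m: float, depth_m: float,
                      disparity_error_px: float = 1.0) -> float:
    """Approximate depth error from a disparity error: dZ ~= Z**2 / (f * B) * dd.

    Shows why stereo depth gets coarser with distance (error grows with Z squared).
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    # Hypothetical values: a feature at x=320 px in the left image, x=295 px in the right.
    x_left, x_right = 320.0, 295.0
    disparity = x_left - x_right  # 25 px
    z = stereo_depth(focal_px=500.0, baseline_m=0.1, disparity_px=disparity)
    err = depth_uncertainty(500.0, 0.1, z)
    print(f"depth ~ {z:.2f} m, +/- {err:.2f} m per pixel of disparity error")
```

The quadratic growth of the error term is one reason a separate active depth sensor remains useful alongside the stereo pair.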

The other key sensor is the depth camera, mounted at the top of the headset. It uses infrared rather than visible light and operates in two modes. One, running at 30fps, tracks nearby objects such as hands moving through HoloLens' field of view. A lower-frequency mode runs at 1-5fps for far-depth sensing, which HoloLens currently uses for spatial mapping. Because the camera works in IR and HoloLens has its own IR emitter, it can also capture actively illuminated images that are "reasonably unaffected" by ambient lighting conditions.

Now, with an update to Windows 10 for HoloLens, a new Research Mode lets developers tap into the raw image sensor streams. App-makers can still use the computer vision algorithms Microsoft builds into the wearable, but the raw data also lets them run their own. The data can be processed locally on the headset or, if its Atom CPU isn't powerful enough, transferred wirelessly to another PC or to the cloud.

The functionality is likely to make HoloLens far more appealing to researchers working on applications beyond AR wearables. For example, Microsoft suggests, robotics teams working on computer vision might experiment with HoloLens' sensors to better teach robots how to navigate different environments. Research Mode will matter all the more once the next-generation HoloLens arrives, which we're expecting to see in early 2019.

That headset is expected to use the Project Kinect for Azure sensor, which Microsoft announced at Build 2018 earlier this year. The sensor will provide greater resolution and more raw data, among other improvements, but it won't be limited to HoloLens: Microsoft will also offer the sensor bar to other developers who want to build its 3D mapping capabilities into their own devices.