Intel Server GPUs Target Android Cloud Gaming Servers

It may once have been the king of personal computing, but Intel now finds its kingdom under attack on almost all fronts. ARM-based laptops are becoming trendy, especially with the introduction of Macs running on Apple's M1 chip. Even on the server side, AMD and NVIDIA are closing in on the lucrative market, the latter especially with its GPU-based approach. Intel is now pushing back with its own new discrete GPU platform, whose latest incarnation is being put at the service of Android games in the cloud.

Intel recently announced its first-ever discrete GPUs based on its new Intel Iris Xe graphics architecture. But while the Intel Iris Xe MAX targets thin-and-light laptops, this next batch, based on Intel Iris Xe-LP, is aiming for servers instead, specifically servers that need the heavy number-crunching demanded by mobile games.

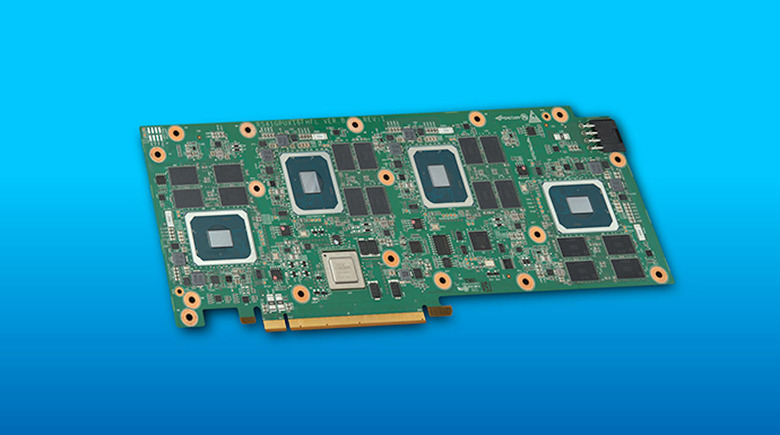

Intel's cloud gaming solution takes the form of an H3C x16 PCIe Gen 3.0 card that packs four of these Intel Server GPUs together. Each GPU comes with 8GB of dedicated LPDDR4 memory, for a total of 32GB per card. Each GPU is advertised as able to run 20 Android games, which works out to 80 games per card. A typical two-card system, Intel says, will be able to handle 100 to 160 users at the same time.
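The per-card and per-system figures follow from simple multiplication, which the short Python sketch below spells out. The constant and function names are made up for illustration, and the 20-games-per-GPU value is Intel's advertised figure, treated here as an upper bound. Two cards at that rate give 160 sessions, which matches the top of Intel's quoted range, so the lower figure of 100 presumably reflects heavier titles.

```python
# Figures quoted in the article; names are illustrative, not Intel's.
GPUS_PER_CARD = 4
MEMORY_PER_GPU_GB = 8
GAMES_PER_GPU = 20  # advertised figure, assumed here to be a best case


def system_capacity(cards: int) -> dict:
    """Return total GPUs, memory, and best-case game sessions for a system."""
    gpus = cards * GPUS_PER_CARD
    return {
        "gpus": gpus,
        "memory_gb": gpus * MEMORY_PER_GPU_GB,
        "max_games": gpus * GAMES_PER_GPU,
    }


if __name__ == "__main__":
    print(system_capacity(1))  # one card
    print(system_capacity(2))  # the "typical" two-card system
```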

It is definitely interesting that Intel chose this rather specific market as the first audience for its server GPUs. Mobile gaming is admittedly a lucrative business but, unlike actual game streaming, where games are rendered on a remote server, most mobile games offload only data processing and user management to the cloud. As a result, they don't really demonstrate the graphics capabilities of Intel's discrete GPUs.

That said, Intel isn't stopping there. After these Server GPUs based on Iris Xe-LP and the Iris Xe MAX for laptops, the chipmaker is looking to the high-performance computing segment next with its Xe-HP GPUs. These are already being made available to select developers, and we might finally be able to see Intel's high-performance graphics at work next year.