Google Reprimanded For YouTube Kids App Showing Inappropriate Content

Google's recent mobile app, YouTube Kids, a version of the popular video service that curates safe content for young children, has come under fire from two child and consumer advocacy groups, which claim that the app is deceptive. The Center for Digital Democracy and the Campaign for a Commercial-Free Childhood filed a complaint with the FTC, stating that "the app is rife with videos that would not meet anyone's definition of 'family friendly.'" The complaint included evidence in the form of video clips found on YouTube Kids that were described as disturbing or harmful to young children.

The video clips the groups submitted were said to have been found on the YouTube Kids app within a single day. Examples of the inappropriate content among the clips included the following:

- a commercial for Budweiser

- a cartoon with characters using explicit language

- a dance instructor showing how to perform Michael Jackson's "crotch grab" move

- a demonstration of how to set a pile of matches on fire

- adult discussions on the topics of drugs, violence, and suicide

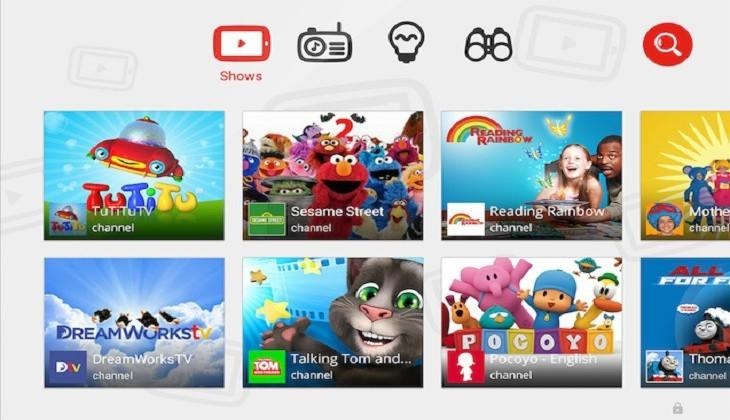

Google launched YouTube Kids in February, with the Android version described on the app marketplace as "kid-friendly" and intended for "curious little minds." Official screenshots for the app show the home screen displaying videos such as Wallace and Gromit, Sesame Street, and Teletubbies. There are also tabs for "music" and "learning," which highlight kid-safe music and educational topics like science and art.

The FTC has confirmed that it received the complaint from the advocacy groups and will review the evidence, but it has yet to decide whether to open a formal investigation. A Google spokesperson has issued statements reminding users of the YouTube Kids app's safety features, including the option to turn off the search function and the ability to flag any video as inappropriate. Google adds that it manually reviews flagged videos and removes anything deemed inappropriate.

SOURCE Wall Street Journal