Google Lens Is Coming To Android's Camera App With A Bunch Of New Features

Google Lens is a cool piece of technology as it is, but beginning next week, it's going to become a lot more accessible. No longer hidden away inside Google Photos and Assistant, Lens will soon be built into the camera app on a sizable number of Android devices. While we'd expect it to be added to the camera app on Google's own Pixel lineup, the full range of manufacturers supporting this rollout may surprise you.

At Google I/O today, the company unveiled all of the partners that will be adding Google Lens to the camera app on their phones. The list includes a good number of major Android manufacturers, but one that's notably (and unsurprisingly) missing is Samsung. Sorry, Galaxy adherents, you'll have to stick with Bixby Vision for now.

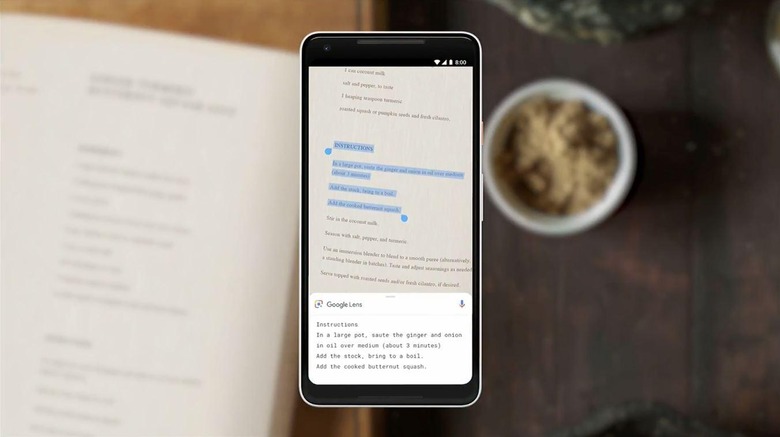

In any case, Google Lens is also getting some new features to go along with this launch. The first of these is called Smart Text Selection, and it's particularly impressive. Smart Text Selection will allow you to copy and paste text you encounter in the real world – for instance, in a book – removing the hassle of typing it all out. You can also use it to look up an unfamiliar term without having to type it into Google Search yourself.

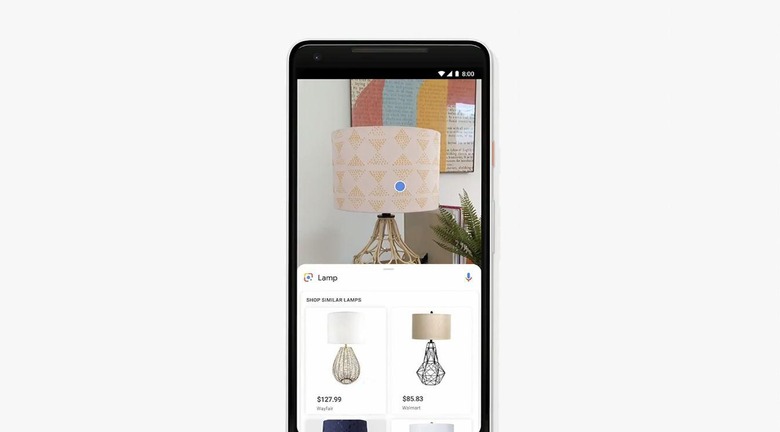

The next feature coming to Lens is called Style Match. Style Match is meant to help you find items similar to things that catch your eye in the real world. One of Google's examples involves spotting an interesting lamp and using Lens to find other lamps that are similar – not necessarily identical – in style, with shopping links included where appropriate.

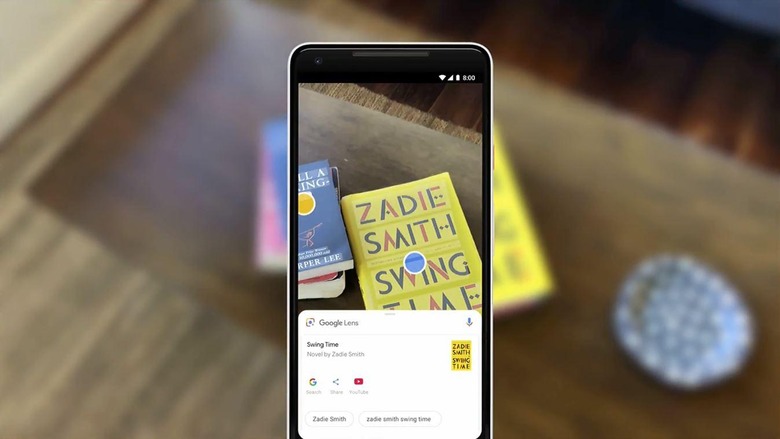

Finally, real-time results are coming to Lens. Essentially, this uses machine learning to surface information about whatever you're pointing your camera at, without requiring you to snap a photo first. You might open your camera app, point it at a poster for a musician, and have Lens surface one of their music videos in real time, with no need to take and analyze a photograph first.

The new features coming to Lens certainly sound pretty awesome, and as with most things based on machine learning, they should get better at what they do as more people use them. Lens will start arriving on flagship phones from the partner manufacturers shown above beginning next week, with these new features rolling out to all users over the next few weeks, so stay tuned.