Google Kinect-Style Android Motion Tracking Teased In Patent App

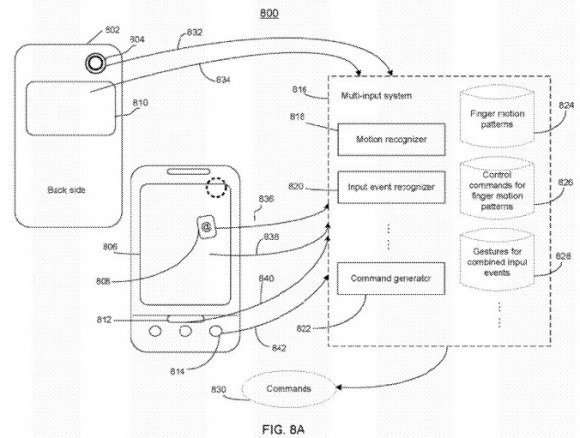

Google is exploring Kinect-style motion tracking as a way to add gesture control to mobile devices, a new patent application suggests. The technique could be adapted to future Android phones but also to wearables like Google Goggles. The submission, titled "Use camera to augment input for portable electronic device", describes using the front-facing camera in a phone, tablet or other gadget to identify and track the user's fingers in the space around it, recognizing "single tapping, double tapping, hovering, holding and swiping."

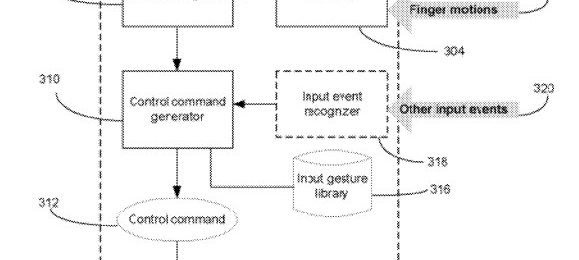

"Systems and methods are provided for controlling a portable electronic device. The device includes a built-in image capturing device. The system detects, through the image capturing device, motions of user finger over the image capturing device. The system determines a pattern of the detected motions using timing information related to the detected motions, and controls the portable electronic device based on the determined pattern. The system also receives inputs from other input devices associated with the portable electronic device, and controls the device based on combination of the determined pattern and the received inputs." Google patent application

As Google describes it, the gesture input could be used to trigger phone calls, navigate through multimedia and more, perhaps working in conjunction with other sensors such as the device's accelerometer, digital compass and microphone. A combination of light and timing would be used to differentiate between gestures and commands, such as hovering or virtual clicking. While Google suggests actual contact between the finger and the camera would be supported, movements within the camera's field of view would also be possible, presuming there was sufficient contrast between light and dark sections of the frame.
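To make the "light and timing" idea concrete, here is a minimal Python sketch of how a run of camera frames might be turned into a tap, double tap or hold. It is an illustration only, not the method in Google's application: the sample format, the function names and every threshold value are assumptions chosen for the example, and hovering and swiping are left out because they would need partial-dimming or positional data.

```python
from typing import List, Tuple

# Hypothetical input: (timestamp in seconds, mean frame brightness in 0..1).
Sample = Tuple[float, float]

# Illustrative thresholds only; none of these values come from the patent application.
DARK_THRESHOLD = 0.3     # brightness below this counts as "finger over the camera"
TAP_MAX_S = 0.3          # a covered period up to this long counts as a tap
DOUBLE_TAP_GAP_S = 0.4   # maximum pause between the two taps of a double tap


def dark_intervals(samples: List[Sample],
                   threshold: float = DARK_THRESHOLD) -> List[Tuple[float, float]]:
    """Return (start, end) times of each run of frames darker than the threshold."""
    intervals = []
    start = None
    last_t = None
    for t, brightness in samples:
        if brightness < threshold:
            if start is None:
                start = t          # the camera has just been covered
        elif start is not None:
            intervals.append((start, t))   # the camera has just been uncovered
            start = None
        last_t = t
    if start is not None and last_t is not None:
        intervals.append((start, last_t))  # still covered when the samples end
    return intervals


def classify(samples: List[Sample]) -> str:
    """Label a sample window using only the timing of its dark periods."""
    intervals = dark_intervals(samples)
    if not intervals:
        return "none"
    durations = [end - start for start, end in intervals]
    if len(intervals) == 1:
        return "single tap" if durations[0] <= TAP_MAX_S else "hold"
    if len(intervals) == 2:
        gap = intervals[1][0] - intervals[0][1]
        if all(d <= TAP_MAX_S for d in durations) and gap <= DOUBLE_TAP_GAP_S:
            return "double tap"
    return "unknown"


if __name__ == "__main__":
    double_tap = [(0.0, 0.8), (0.1, 0.1), (0.2, 0.8),
                  (0.3, 0.8), (0.4, 0.1), (0.5, 0.8)]
    print(classify(double_tap))
```

In a real device the brightness values would come from the camera feed, and the result could be combined with accelerometer or microphone input before triggering a command, as the application describes.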

"The portable electronic device may be a mobile device (e.g., a smart phone such as Google Nexus) that is capable of communicating with other mobile devices through network 90. The portable electronic device may also be a music player, or any type of multi-function portable user device that has a built-in camera." Google patent application

Although a phone is the obvious device to use such a system, since its display has to stay relatively small to remain pocketable, the persistently rumored wearable augmented-reality computer being developed in Google's X-Labs could also benefit. According to the leaks, the glasses would indeed use a forward-facing camera to track hand and finger movements, translating them into navigation and control of the "Google Glasses" Android-based OS.

It's not the first time we've seen camera-based tracking used in mobile devices. Sony Ericsson used such a system all the way back in 2009 for basic gaming on a cellphone, while other phones used wave gestures to skip back and forward through music tracks. Japanese researchers, meanwhile, took the idea considerably further in 2010, using a high-framerate camera to track finger input in 3D space in front of a cellphone for entering text on a virtual keyboard, among other things.

As with any patent application, of course, there's no telling whether a commercial implementation of the system will ever show up in the wild.

[via Slashdot]