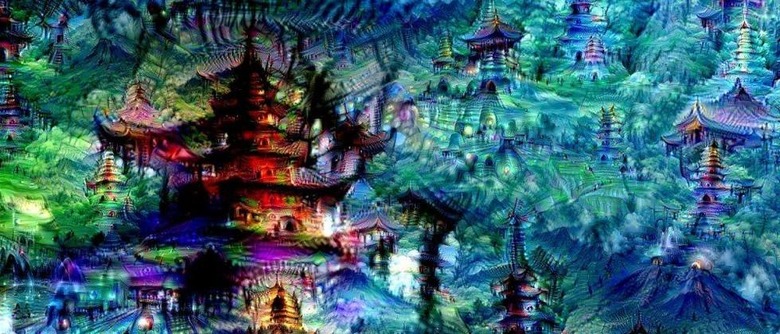

Google AI Creates Dreamy Images From Artificial Neural Networks

Artificial neural networks (ANNs) are a driving force behind speech recognition and image classification. ANNs process images by isolating the defining features of an object from background noise. It's one method Google's image search uses to tell similar images apart, such as forks and knives: the networks need to learn that forks have tines in order to distinguish them from knives. Google uses these networks to manage the vast array of images that flow through its database, and it is always tweaking the mathematical models to make image identification more precise. The result of its latest experiments with ANN parameters is a batch of images that are downright trippy.

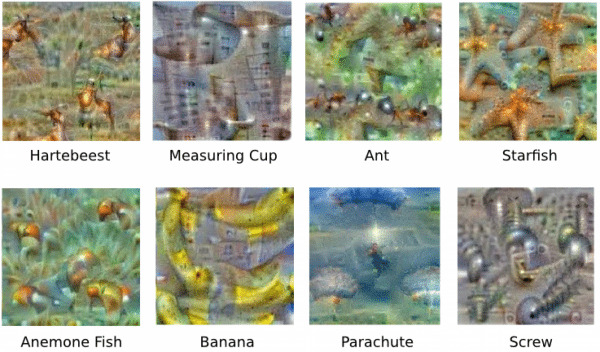

In 2012, Google's neural networks were just advanced enough to identify cats on the internet. The process has come a long way since then. Take a look below at what else Google's networks can identify.

Researchers can adjust the parameters to make sure the neural network understands that a banana, for example, is yellow and has a curved, oblong shape. The banana could be oriented in the image in a number of ways, but its essential features are preserved. The neural networks don't always get it right. Without the proper parameters, networks pick up various kinds of "noise," or irrelevant detail, along with the object itself. Because most pictures of a dumbbell show an arm pumping iron, one network deduced that dumbbells must have arms, and that no dumbbell image is complete without a flesh-toned appendage.

These hallucination-like images are the output of repeated feedback loops within the neural networks. Like a computational game of "telephone," the image you start with doesn't exactly match the final image. The ANN was tasked with finding patterns in white noise. As the image passed through each layer of the ANN and was fed back in, it became more distorted, with each neuron amplifying the features it had learned to recognize.
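For readers curious about the mechanics, the feedback loop can be sketched as gradient ascent on the image itself: the network's weights stay fixed, and the picture is nudged, step by step, so that a layer's activations grow stronger. This is a minimal toy sketch, not Google's code. It assumes a single hypothetical layer with random weights (`weights`), a ReLU activation, and a hypothetical `dream` function; real experiments use a large trained image classifier.

```python
import numpy as np

def dream(image, weights, steps=20, step_size=0.1):
    """Toy feedback loop: repeatedly nudge the image so that one layer's
    activations grow stronger (gradient ascent on the input, not the weights)."""
    img = image.copy()                      # leave the caller's image untouched
    for _ in range(steps):
        pre = weights @ img                 # layer pre-activations
        act = np.maximum(pre, 0.0)          # ReLU: only "firing" neurons count
        # Objective is 0.5 * ||act||^2; its gradient w.r.t. img is W^T act
        grad = weights.T @ act
        # Normalize the step so its size doesn't depend on the gradient's scale
        img += step_size * grad / (np.abs(grad).mean() + 1e-8)
    return img

rng = np.random.default_rng(0)
weights = rng.normal(size=(16, 64))         # hypothetical 16 "neurons" over a 64-pixel image
noise = rng.normal(size=64)                 # start from white noise, as in the experiments
dreamed = dream(noise, weights)

def energy(x):
    """How strongly the toy layer responds to image x."""
    return 0.5 * np.sum(np.maximum(weights @ x, 0.0) ** 2)

print(energy(noise), energy(dreamed))
```

Each pass feeds the altered image back in, so whatever the layer already "sees" in the noise is exaggerated a little more every round, which is the telephone-game effect described above.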

Google researchers continue to develop these neural networks. If these dreamy images are any indication, Google dreams of electric sheep.

Source: Google Research Blog