Gboard On Pixel Phones Gets Fast Offline Speech Recognition

Even before there was Google Assistant, Google was already playing with voice and speech recognition for various features, including Voice Search. Like most of Google's AI-powered functionality, however, those relied on an active, not to mention good, Internet connection. That's OK when you're trying to search for something online or place an order, not so much when you're dictating or translating words. Fortunately, Google's AI team has come up with a solution for the Gboard keyboard that will let you dictate text even when you're offline.

Speech recognition systems are actually composed of multiple components, each one a critical stage of the pipeline. There's a model that maps segments of input audio to distinct units of sound called phonemes, a model that connects phonemes to form words, and another model that tries to guess the most likely phrase. Given the complexity of these models, they have traditionally been stored on remote servers, where input audio recordings are sent to be processed.
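To make the three-stage pipeline concrete, here is a minimal sketch in Python. Everything in it is a made-up stand-in: the lookup tables, the frame labels, the scores, and the `recognize` helper are hypothetical and only illustrate how the stages hand results to each other, not how any real recognizer is built. It also assumes word boundaries are already known, which a real system has to work out for itself.

```python
from typing import Dict, List, Tuple

# Stage 1 (hypothetical): maps an audio frame label to a phoneme.
ACOUSTIC_MODEL: Dict[str, str] = {
    "frame_a": "HH", "frame_b": "AY",
    "frame_c": "DH", "frame_d": "EH", "frame_e": "R",
}

# Stage 2 (hypothetical): maps a phoneme sequence to candidate words.
PRONUNCIATION_MODEL: Dict[Tuple[str, ...], List[str]] = {
    ("HH", "AY"): ["hi", "high"],
    ("DH", "EH", "R"): ["there", "their"],
}

# Stage 3 (hypothetical): scores whole phrases; higher means more likely.
LANGUAGE_MODEL: Dict[Tuple[str, ...], float] = {
    ("hi", "there"): 0.90,
    ("high", "there"): 0.05,
    ("hi", "their"): 0.03,
    ("high", "their"): 0.02,
}


def recognize(segments: List[List[str]]) -> str:
    """Run the toy pipeline over pre-segmented frames, one list per word."""
    candidates: List[Tuple[str, ...]] = [()]
    for frames in segments:
        # Audio frames -> phonemes.
        phonemes = tuple(ACOUSTIC_MODEL[f] for f in frames)
        # Phonemes -> every word that matches that pronunciation.
        words = PRONUNCIATION_MODEL[phonemes]
        # Extend each partial phrase with each candidate word.
        candidates = [c + (w,) for c in candidates for w in words]
    # Pick the phrase the language model considers most likely.
    best = max(candidates, key=lambda c: LANGUAGE_MODEL.get(c, 0.0))
    return " ".join(best)


if __name__ == "__main__":
    print(recognize([["frame_a", "frame_b"], ["frame_c", "frame_d", "frame_e"]]))
```

Even in this toy form, the final answer depends on three separate tables, which hints at why real versions of these models have been large enough to live on servers.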

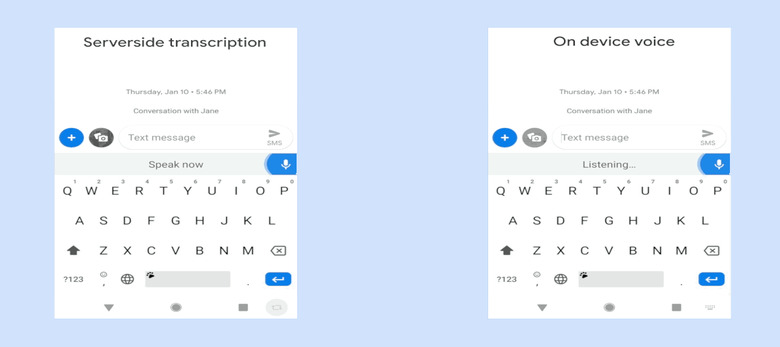

While that method often gives accurate results, the round trip to the server adds latency that pretty much kills any hope of real-time use for things like on-the-spot translation and dictation. Fortunately, a new type of neural network model has been developed, which Google calls a Recurrent Neural Network Transducer, or RNN-T. In a nutshell, instead of waiting for the entire input to be sent and then processing it, an RNN-T processes input samples as they arrive and streams output symbols.
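The difference between waiting for the whole input and streaming can be sketched in a few lines of Python. This is not an RNN-T: there is no neural network here, and the `decode_frame`, `batch_recognize`, and `streaming_recognize` names are hypothetical. Each "frame" is assumed to already carry either a character or a blank (`_`), so the sketch only shows when output becomes available, not how it is computed.

```python
from typing import Iterable, Iterator

BLANK = "_"  # hypothetical marker for a frame that produces no output symbol


def decode_frame(frame: str) -> str:
    """Toy decoder: a frame is either a blank or the character it stands for."""
    return "" if frame == BLANK else frame


def batch_recognize(frames: Iterable[str]) -> str:
    """Wait for the entire input, then decode it in one go."""
    buffered = list(frames)  # nothing is returned until every frame is in
    return "".join(decode_frame(f) for f in buffered)


def streaming_recognize(frames: Iterable[str]) -> Iterator[str]:
    """Decode each frame as it arrives and emit symbols immediately."""
    for frame in frames:
        symbol = decode_frame(frame)
        if symbol:
            yield symbol  # available to the caller before the input ends


if __name__ == "__main__":
    audio = "h_e_ll_o"
    print(batch_recognize(audio))
    for ch in streaming_recognize(audio):
        print(ch, end="", flush=True)
    print()
```

Both functions produce the same text, but the streaming version hands over each character as soon as its frame has been seen, which is the behavior that makes real-time dictation feel responsive.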

In Gboard's case, those output symbols are basically characters of the English alphabet. That is why the new Gboard speech recognition feature appears to spit out words one character at a time, something which looks more natural to humans.

More importantly, RNN-T models are small enough to fit on the phone. There is no network latency to speak of because all of the processing happens on the device, without the need for an Internet connection. This new all-neural, on-device Gboard speech recognizer will be available on all Google Pixel phones, but only in American English. The researchers hope the same techniques can be applied to more languages in the near future.