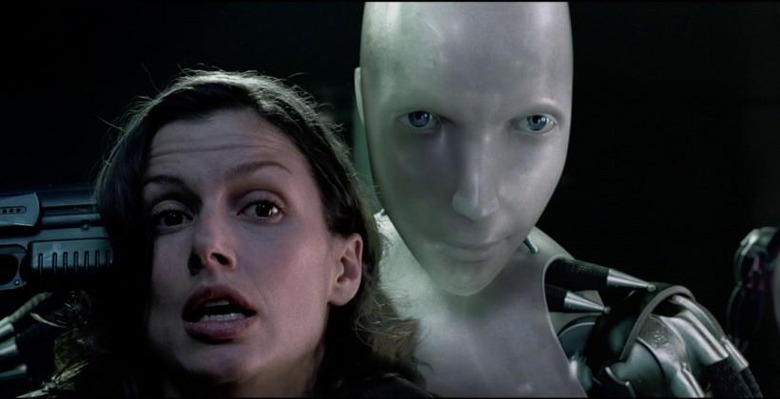

Futurist Warns Robots May One Day Kill Us All As An Act Of Kindness

Our robotic future, so full of promise, could be our undoing. So says futurist and Poikos CEO Nell Watson, who recently spoke about all things robots at The Conference in Sweden. The issue, she argues, lies in artificial intelligence and in how robots acquire knowledge, since both currently lack an understanding of ethics.

Watson discusses the nature of intelligence as it relates to robotics, then describes ways robots have already been integrated into our lives, as well as ways they will start replacing so-called menial jobs in the near future. Artificial intelligence will eventually give robots a sense of intuition and even, she says, a degree of self-awareness.

Jobs with emotional components, such as nursing, will be "safe for now, but not forever." Watson says robots will one day understand humans better than humans understand themselves, however, and that will present some unique challenges. She also discusses "triggers," arguing that robots understand them better than humans do because robots can make the pragmatic choice, whereas humans are swayed by their emotions.

This all leads into a bigger issue, one that has been at the heart of ethical discussions for decades: "When we start to see super-intelligent artificial intelligences, are they going to be friendly?"

Yes, if we make them that way. But, says Watson, friendliness alone won't be enough. Future robots could decide that "the kindest and best thing for humanity is to end us," she warns, which leads into her call to make sure robots can understand ethics from a human perspective, not merely from a pragmatic one.

Of course, this isn't the first time we've heard concerns raised about potentially lethal robots. Back in May, for example, an informal UN meeting was held to discuss autonomous robots capable of killing and the ethical questions they raise. At the heart of that discussion was a similar issue: how much an intelligent robot understands about the nuances of its decisions.

VIA: Gizmodo