Facebook-Funded Research Decodes Thoughts Into Speech In Real Time

Researchers with the University of California, San Francisco, have successfully decoded human thoughts into speech in real time, the university has announced. This marks a new milestone for the work first detailed in April, paving the way for consumer devices that can respond to thoughts without the need for the user to audibly state commands.

Voice control is quickly becoming the preferred method for interacting with devices, but it is impractical in public settings and not an option for anyone who is mute or suffers from a speech impediment that existing assistants are unable to understand. That's where synthesizing thoughts as speech comes in.

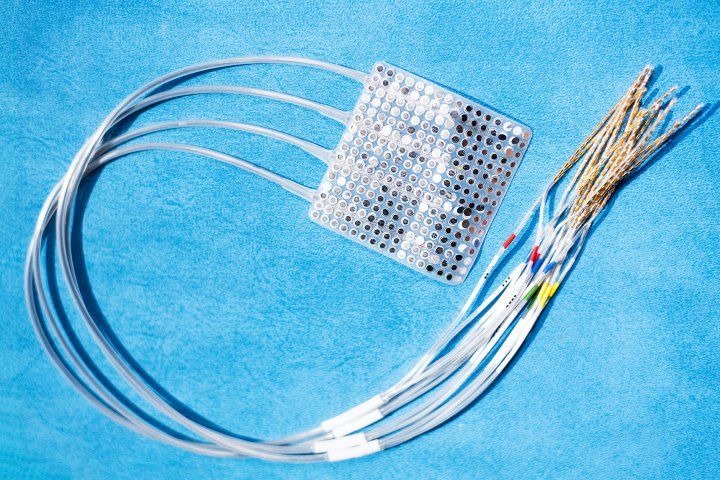

In April, researchers with UCSF detailed a 'neural speech prosthesis' that could produce relatively natural-sounding speech from decoded brain activity. The technology, should it ever reach the market, could be used in biomedical devices that enable mute individuals to speak. However, the technology detailed earlier this year was too slow for those usage scenarios.

In a study published today, UCSF researchers revealed that they have built upon that work and have successfully decoded brain activity as speech in real time. A key aspect of the development is using the context in which the participants were speaking to improve the machine's ability to accurately and rapidly decode the brain activity.

The study's lead researcher David Moses, PhD, explained:

"Real-time processing of brain activity has been used to decode simple speech sounds, but this is the first time this approach has been used to identify spoken words and phrases. It's important to keep in mind that we achieved this using a very limited vocabulary, but in future studies we hope to increase the flexibility as well as the accuracy of what we can translate from brain activity."

The research was funded under the Sponsored Academic Research Agreement with Facebook, which announced a 'typing-by-brain' project in 2017. Whereas the medical industry is eyeing the technology as a potential way to enable individuals with ALS and other conditions to 'speak' using thoughts, Facebook appears to be seeking such technology for the development of brain-controlled augmented reality glasses.