Facebook Ego4D Tracking Your When, How, What, And Who

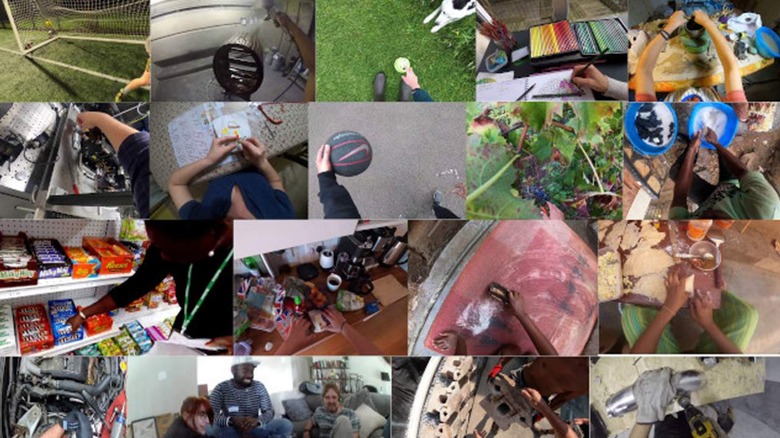

When Facebook releases a new pair of glasses with cameras, microphones, and proximity sensors aboard a few years from now, remember this article. Today we're taking a peek at research posted by Facebook AI called "Ego4D". The project brought together "a consortium of 13 universities and labs across nine countries" that together collected "2,200 hours of first person video in the wild, featuring over 700 participants going about their daily lives."

The researchers working with Facebook AI aim to develop artificial intelligence that "understands the world from this point of view"* so that they're able to "unlock a new era of immersive experiences." They're looking specifically at how augmented reality (AR) glasses and virtual reality (VR) headsets will "become as useful in everyday life as smartphones."

*They're referring to a first-person perspective here. They worked with video captured from a first-person point of view, as opposed to the third-person perspective from which AI is normally trained through video and photos.

Researchers listed five "benchmark challenges" for this project that effectively show what they're tracking. To be clear: Facebook isn't tracking this data through real, live devices for this project, at least not yet. All of it is being tracked via first-person perspective videos Facebook AI obtained for this project:

• Episodic memory: What happened when?

• Forecasting: What am I likely to do next?

• Hand and object manipulation: What am I doing?

• Audio-visual diarization: Who said what when?

• Social interaction: Who is interacting with whom?

According to Facebook AI, the data set used in this research is 20x greater than any other "in terms of hours of footage." This information was made public in the Facebook AI project announcement for Ego4D.

To learn more about this project, take a peek at the research paper "Ego4D: Around the World in 3,000 Hours of Egocentric Video," published on arXiv.