Bionic Hand Sees, Instinctively Reaches For Objects

Forget whatever fantasy you might have of artificial limbs. Reality is far more cruel than fiction. Although we do have high-tech prosthetics today, operating them requires no small amount of effort and time. Even the act of reaching out for a stick can take more than a few seconds, because the brain has to interpret what the eyes see and translate it into electrical signals, which the artificial limb must in turn interpret as movement. That is why biomedical engineers from Newcastle University are trying to cut down that time by giving the artificial arm its own eye and brain.

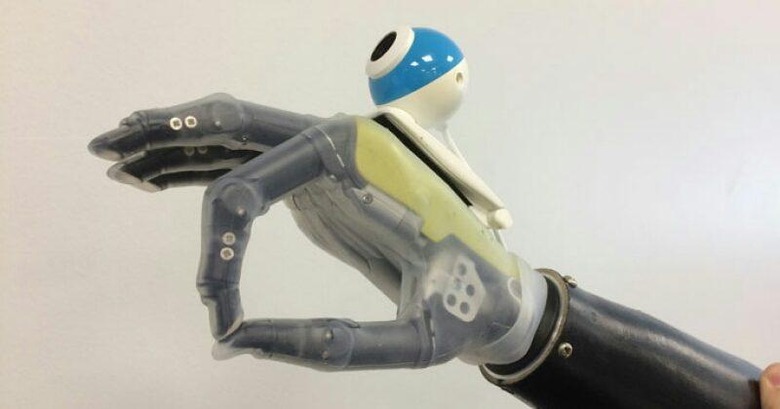

"The hand that sees" is almost like a title of horror flick, but it could very well be the beginnings of true futuristic prosthetics. The concept is simple really. The arm itself "sees" an object using a built-in camera, identifies what the object is, select an appropriate "grasping" type, and moves towards the object. All of these happen in just milliseconds and without the wearer's active thought.

Of course, that is easier said than done. Seeing is one thing, but recognition is the trickier part. Unsurprisingly, that bit involves a lot of computer vision, machine learning, and neural networks. In essence, the computer controlling the artificial arm is fed multiple images of objects from different angles so that it can learn to recognize them. But more than that, it also tries to determine the best way to grasp each object.
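To make the see, identify, grasp, move loop a little more concrete, here is a minimal Python sketch. It is not the researchers' code: the grasp names, the label-to-grasp table, the confidence threshold, and the `classifier` callable standing in for the trained neural network are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Grasp(Enum):
    # Hypothetical grasp categories, chosen only for this example.
    PINCH = "pinch"
    TRIPOD = "tripod"
    PALM = "palm"


@dataclass(frozen=True)
class Detection:
    label: str         # what the classifier thinks the object is
    confidence: float  # classifier score between 0 and 1


# Hypothetical lookup table from recognized object to grasp type.
GRASP_FOR_LABEL = {
    "pen": Grasp.PINCH,
    "ball": Grasp.TRIPOD,
    "cup": Grasp.PALM,
}


def choose_grasp(detection: Detection, min_confidence: float = 0.8) -> Optional[Grasp]:
    """Pick a grasp for a detection, or None if the hand should not act."""
    if detection.confidence < min_confidence:
        return None  # too unsure about what the camera saw
    return GRASP_FOR_LABEL.get(detection.label)  # None for unknown objects


def control_step(frame: object, classifier: Callable[[object], Detection]) -> str:
    """One pass of the loop: camera frame in, command for the hand out."""
    detection = classifier(frame)
    grasp = choose_grasp(detection)
    if grasp is None:
        return "hold"
    return f"reach:{grasp.value}"


def fake_classifier(frame: object) -> Detection:
    # Stand-in for a trained model; it always "sees" a cup.
    return Detection(label="cup", confidence=0.93)


print(control_step(frame=None, classifier=fake_classifier))
```

In this sketch the hand simply holds still whenever the classifier is unsure or the object is unfamiliar, which is one cautious design choice among many; a real prosthetic would presumably leave the final say to the wearer.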

Impressive as that might sound, for the researchers it is but a first step towards something grander and more ambitious. That would be a truly connected bionic hand that can not only see but also feel things like pressure and temperature.

SOURCE: Newcastle University