ARCore Depth API Is Now Ready To Hide AR Objects Behind Real Objects

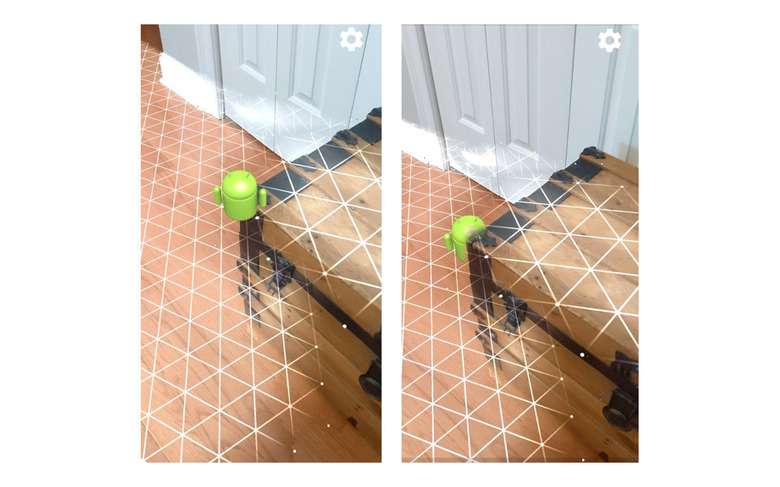

The very first iterations of phone-based augmented reality simply slapped floating "stickers" onto the camera viewfinder to give the semblance of a 3D object existing in the real world. That illusion, however, was easily shattered when a real-world object that should have passed in front of those virtual objects didn't. Google's answer is ARCore's new depth perception and, after half a year of baking in previews and betas, it is finally ready for all developers to create more believable AR illusions.

Depth perception is one of the key features of believable augmented reality experiences. It isn't exactly new, but most of the time it requires specialized hardware like a depth or 3D time-of-flight (ToF) sensor to pull off. Google created ARCore to enable AR experiences across smartphones that may have just one camera, like many of its own Pixel phones, and that includes enabling depth perception without additional imaging sensors.

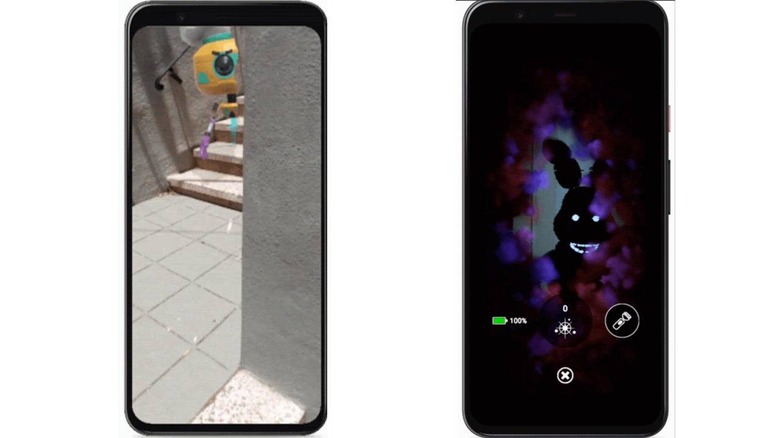

The results of this software-based solution are definitely impressive, at least going by the samples that Google is now showing off. It has partnered with a few big names in the app and mobile gaming market to put the API to the test. Illumix used it to give Five Nights at Freddy's AR: Special Delivery players a shocker, while Snap will be offering users interesting AR-based Lenses.

Hiding virtual objects behind real-world things isn't the only use for depth perception, of course. It is equally important to properly put AR objects in front of them, like how TeamViewer Pilot is using it to annotate live video feeds. There are also plenty of opportunities for more whimsical applications, like putting a miniature skating ramp in your living room.
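For the curious, the basic idea behind deciding whether an AR object sits behind or in front of a real one can be sketched as a per-pixel depth comparison. The snippet below is only an illustration of that idea and not ARCore's actual API: the function name, the nested-list "images", and the assumption that both depth maps hold distances from the camera in the same units are all made up for the example.

```python
def composite_with_occlusion(camera, virtual, real_depth, virtual_depth):
    """Blend a virtual layer over a camera frame using a per-pixel depth test.

    All four arguments are hypothetical 2D lists of the same shape.
    `virtual_depth` holds None wherever the virtual object does not
    cover the pixel; depths are distances from the camera.
    """
    output = []
    for cam_row, virt_row, rd_row, vd_row in zip(
        camera, virtual, real_depth, virtual_depth
    ):
        out_row = []
        for cam_px, virt_px, rd, vd in zip(cam_row, virt_row, rd_row, vd_row):
            # Draw the virtual pixel only if it is closer than the real surface.
            visible = vd is not None and vd < rd
            out_row.append(virt_px if visible else cam_px)
        output.append(out_row)
    return output


# A 1x3 "frame": the virtual object covers the first two pixels at 1.0 away.
camera = [["C", "C", "C"]]
virtual = [["V", "V", None]]
real_depth = [[2.0, 0.5, 2.0]]       # middle pixel: a real object at 0.5
virtual_depth = [[1.0, 1.0, None]]

frame = composite_with_occlusion(camera, virtual, real_depth, virtual_depth)
```

In this toy frame the virtual object shows up in the first pixel, is hidden by the closer real object in the second, and is simply absent in the third. Real renderers typically do this comparison on the GPU and soften the edges, but the underlying test is the same.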

ARCore's Depth API is now available in the latest version, 1.18, both for developers building directly for Android and for those using the Unity 3D game engine. While it doesn't require more than one RGB camera to work, Google does say that having a 3D ToF sensor will naturally improve the quality of the experience.