AR Experiment Uses AI To Cut And Paste Real-World Things Into Photoshop

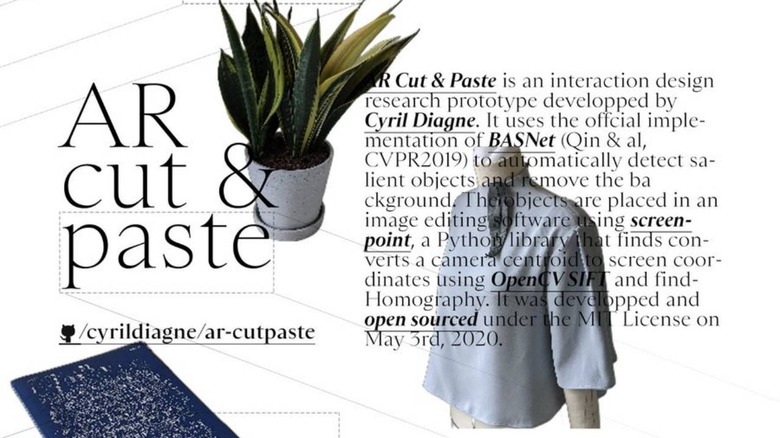

Augmented or mixed reality experiences often involve placing virtual objects alongside real-world things, as seen through glasses or on phone screens. Doing the reverse, placing real-world objects into a digital canvas in real time, is both less common and harder to pull off. That, however, is the almost magical feat that AI programmer and UX designer Cyril Diagne achieved, letting you "cut and paste" real-world things into a Photoshop canvas.

Without seeing the demo, you might scoff at the idea. After all, isn't it trivial to take a picture of, say, a flower vase and copy it to your computer, even if it involves a few steps? That may be true, but this AR Cut & Paste experiment is a lot more sophisticated than that.

What makes it special is that it takes a "picture" of only the object, without its surroundings, and then adds it to the Photoshop canvas when you move your phone over to the computer. It's almost like literally cutting the object out of its background and dragging it into a document, though, of course, you're actually "cloning" the item instead.

The secret to this experiment is, of course, AI, specifically "salient object detection and background removal". Many phones today use a similar but simpler technique to separate the foreground from the background and blur the latter, a.k.a. bokeh simulation or portrait mode. In this case, however, the AI model is still too demanding to run on the phone itself, so it runs on a computer instead.
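To make "background removal" a bit more concrete, here is a minimal sketch of what happens once a saliency map exists. It assumes some detection model (not shown, and not specified here) has already produced a per-pixel score between 0.0 (background) and 1.0 (object); the score is turned into an alpha channel, and the resulting cutout is alpha-blended onto a canvas. The functions `cut_out` and `paste` are hypothetical illustrations written for this article, not Diagne's code and not any Photoshop API.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]


def cut_out(image: List[List[RGB]], saliency: List[List[float]]) -> List[List[RGBA]]:
    """Hypothetical helper: use a saliency map (0.0 = background, 1.0 = object) as an alpha channel."""
    if len(image) != len(saliency) or any(len(a) != len(b) for a, b in zip(image, saliency)):
        raise ValueError("image and saliency map must have the same shape")
    cutout = []
    for img_row, sal_row in zip(image, saliency):
        row = []
        for (r, g, b), score in zip(img_row, sal_row):
            # Clamp the score to [0, 1] and scale it to an 8-bit alpha value.
            alpha = int(round(min(1.0, max(0.0, score)) * 255))
            row.append((r, g, b, alpha))
        cutout.append(row)
    return cutout


def paste(canvas: List[List[RGB]], cutout: List[List[RGBA]], top: int, left: int) -> List[List[RGB]]:
    """Hypothetical helper: alpha-blend `cutout` onto `canvas` in place, top-left corner at (top, left)."""
    for dy, row in enumerate(cutout):
        for dx, (r, g, b, a) in enumerate(row):
            y, x = top + dy, left + dx
            if not (0 <= y < len(canvas) and 0 <= x < len(canvas[y])):
                continue  # skip cutout pixels that fall outside the canvas
            bg_r, bg_g, bg_b = canvas[y][x]
            # Weighted mix: fully opaque pixels replace the canvas, transparent ones leave it as-is.
            canvas[y][x] = (
                (a * r + (255 - a) * bg_r) // 255,
                (a * g + (255 - a) * bg_g) // 255,
                (a * b + (255 - a) * bg_b) // 255,
            )
    return canvas


if __name__ == "__main__":
    photo = [[(200, 30, 30), (10, 10, 10)]]  # a red "object" pixel next to a dark background pixel
    mask = [[1.0, 0.0]]                      # pretend saliency scores from a model
    canvas = [[(255, 255, 255)] * 3 for _ in range(2)]  # small white canvas
    paste(canvas, cut_out(photo, mask), top=1, left=1)
    print(canvas[1])
```

Only the red pixel lands on the white canvas; the background pixel, with an alpha of 0, leaves the canvas untouched. A real system would work on full-resolution images with an array library and a soft, model-predicted mask, but the principle of "mask becomes alpha, alpha drives the blend" is the same.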

The experiment is far from perfect, given its very noticeable latency, but it's an impressive demonstration nonetheless. Diagne generously provides the code for the whole setup, both for the phone and for the server running on the computer, so that anyone with some technical skill can try it out or, better yet, improve it.