Android Live Transcribe Will Soon Let You Know When Dogs Are Barking

Smartphones are becoming more sophisticated and more powerful but, truth be told, almost all of their features are designed for users without disabilities. While those users may represent the majority of paying customers, that's no reason not to make life easier for everyone else as well. On Global Accessibility Awareness Day, Google is announcing an upcoming feature for Live Transcribe that will help users who are deaf or hard of hearing be more aware of their surroundings and keep a record of some critical conversations.

Announced last February, Live Transcribe is basically automatic captioning for everything your Android phone can hear. It's designed to transcribe speech to text in real time using cloud-based speech recognition and put it on the phone's screen, much like what Google already does with Google Assistant.

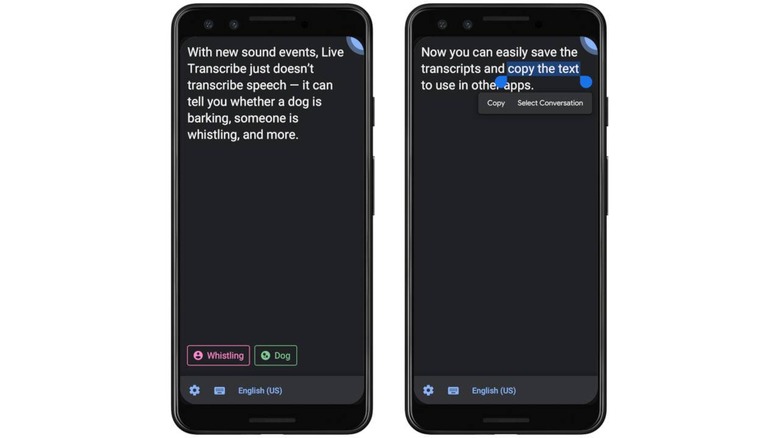

But spoken words aren't the only sounds that matter to people with hearing loss or deafness. Sometimes, it's even more critical that they know about background sounds. In an update due next month, Live Transcribe will use machine learning and speech recognition technologies to identify and transcribe sound events.

That means sounds like dogs barking, people clapping, or vehicles speeding by will be noted on the user's screen. Sometimes, those sound events offer important social cues that people need in order to react properly. Other times, they can be critical to their safety.

Live Transcribe will also allow users to copy and save those transcripts for up to three days. That's useful not just for those with hearing loss but also for learning languages or temporarily saving interviews for later processing. The funny thing about accessibility is that if you design experiences with accessibility in mind, you actually benefit everyone.