Android Camera Switches Uses Your Face To Control Your Phone

Android has quite a number of accessibility features and APIs, some of which have been used, misused, or even abused to open doors to power users and functionality Google wouldn't have normally allowed. Many of those accessibility features have focused on voice control or screen reading, but a new Camera Switches feature in the Android Accessibility Suite expands that functionality to include certain facial expressions for those who might not even be able to speak or hold an external device to control their smartphones.

Google had played around with using front-facing sensors to control the phone on the Pixel 4, but that was limited to hand gesture detection powered by Project Soli's radar system. It didn't exactly take off as Google had hoped, and it wasn't good for accessibility either. The beta version of the Android Accessibility Suite app, however, is taking a slightly different route, and it could prove to be more interesting and more useful for those with some physical disabilities.

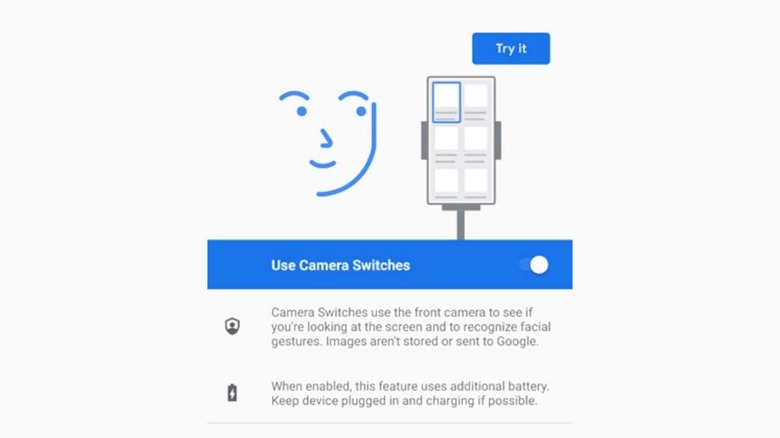

Simply called "Camera Switches," the feature would let users who can't speak or use their hands control their phones. It instead uses a fixed set of facial expressions that can be mapped to different actions. Rather than use a specialized sensor, Camera Switches uses the front-facing camera, meaning any phone can theoretically support this feature.

Camera Switches supports only a limited number of facial expressions, including open mouth, smile, raise eyebrows, look left, look right, and look up. Those, however, can be used to initiate quite a number of actions, like going Back or to the Home screen, scrolling forward or backward, or even performing a touch & hold gesture.
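To make the idea of mapping expressions to actions concrete, here is a minimal Python sketch of what such a configuration could look like. It is not Google's implementation and uses no Android API: the `Expression` and `Action` enums, the `bindings` table, and the `action_for` helper are all hypothetical names, and the specific pairings are made up for illustration. Only the expressions and actions themselves come from the lists above.

```python
from enum import Enum


class Expression(Enum):
    # The six facial expressions named in the article.
    OPEN_MOUTH = "open mouth"
    SMILE = "smile"
    RAISE_EYEBROWS = "raise eyebrows"
    LOOK_LEFT = "look left"
    LOOK_RIGHT = "look right"
    LOOK_UP = "look up"


class Action(Enum):
    # The actions named in the article.
    BACK = "back"
    HOME = "home"
    SCROLL_FORWARD = "scroll forward"
    SCROLL_BACKWARD = "scroll backward"
    TOUCH_AND_HOLD = "touch & hold"


# Hypothetical user configuration (assumption): each expression is bound
# to at most one action, and LOOK_UP is deliberately left unassigned.
bindings = {
    Expression.SMILE: Action.HOME,
    Expression.OPEN_MOUTH: Action.BACK,
    Expression.LOOK_LEFT: Action.SCROLL_BACKWARD,
    Expression.LOOK_RIGHT: Action.SCROLL_FORWARD,
    Expression.RAISE_EYEBROWS: Action.TOUCH_AND_HOLD,
}


def action_for(expression):
    """Return the action bound to an expression, or None if unassigned."""
    return bindings.get(expression)
```

In this sketch, a detected smile would resolve to the Home action, while an unassigned expression such as looking up would resolve to nothing and be ignored.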

XDA says that this Accessibility Suite feature might be heading for Android 12 first but seems to be compatible with at least Android 11 as well. In both cases, using Camera Switches will cause a notification to appear in the status bar, indicating that the camera is in use.