Wikipedia deploys AI to fight spam and troll edits

Wikipedia has revealed that it recently began using artificial intelligence (AI) to detect and eliminate article edits that contain spam or false information from trolls. The goal of the AI, dubbed Objective Revision Evaluation Service, or ORES, is not only to make life easier for Wikipedia veterans, who volunteer hours of their time to eliminate bogus updates, but also to attract and encourage new contributors.

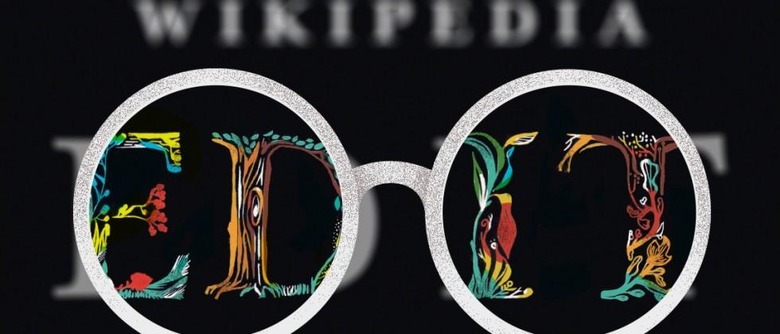

As the Wikimedia Foundation puts it, ORES is like a "pair of X-ray specs": it scans new revisions and uses machine learning to spot suspicious edits by looking for certain patterns. If an edit is flagged, it is passed to a human editor, who double-checks it and notifies the contributor if the revision is removed.

This approach will obviously cut down on spam, but it also makes it clearer to contributors who may not have malicious intent why their revision wasn't approved, as opposed to the current system of simply deleting edits without explanation.

Wikipedia explains that the main difference between ORES and the other AI tools it already uses is that ORES can tell someone's honest mistake apart from an edit intended to damage an article.

Source: Wikimedia Blog