Smartphone AI Is Still Useless: Here's How It Can Improve

LG has formally announced the name, though not the phone itself, of its next flagship: the LG G7 ThinQ. The ThinQ part of the name signals the company's AI push. LG isn't the first to tout AI capabilities in its phones. In fact, AI has lately become something of a badge of honor, alongside the notch. But the AI capabilities in our smartphones, whether delivered through assistants or otherwise, are still far from useful. OEMs seem obsessed with one or, at most, two aspects of AI, often neglecting the areas where we need a better brain even more.

Photos are good but calories are better

AI in smartphones has taken different, and sometimes inaccurate, forms, but most implementations focus on the camera. OEMs might have been "inspired" by how the Pixel became an unlikely superstar thanks to some machine learning magic in its camera software. In practice, however, most camera AI features have been limited to one job: scene recognition.

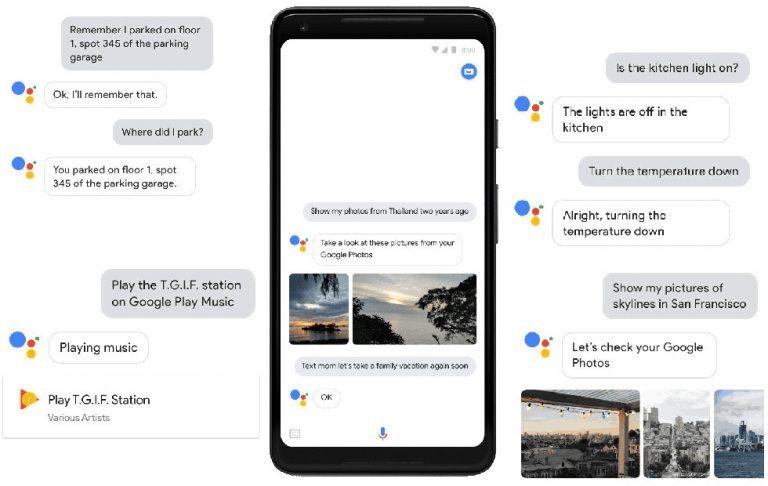

That's not to say scene recognition isn't important, but it's not the only use for AI applied to a camera. Computer vision and object recognition could be far more useful than just determining whether the camera is pointed at a face or at food. A camera pointed at food, for example, could identify the dish and estimate how many calories it has. Fortunately, some are catching on to the idea, such as Bixby Vision, Google Lens, and even the weird Amazon Echo Look. But there's so much more that can be done. A photo of a list could be turned into a digital checklist, product photos could be added to an inventory immediately, and selfies at landmarks could automatically go into an AI-generated travel album.

That weather update would have been useful an hour ago

We get a lot of notifications on our phones. Most of the time, they're not the notifications we want. Rarer still are the notifications we need at the moment we need them. Weather updates are great, except when they arrive too late to do us any good, such as after we've already left the house for an appointment.

Our phones hold a vast range of information about our lives, from our appointments to our contacts to our shopping lists. It shouldn't take much work to link them together. Some AI assistants already advise you when to leave to avoid traffic. They could also tell you, right then and there, what kind of weather you might face in the next few hours. LG once had something like that with the Smart Notice feature on the LG G3, but it didn't really speak up or volunteer information at the right time.

Remember the milk when I can actually buy it

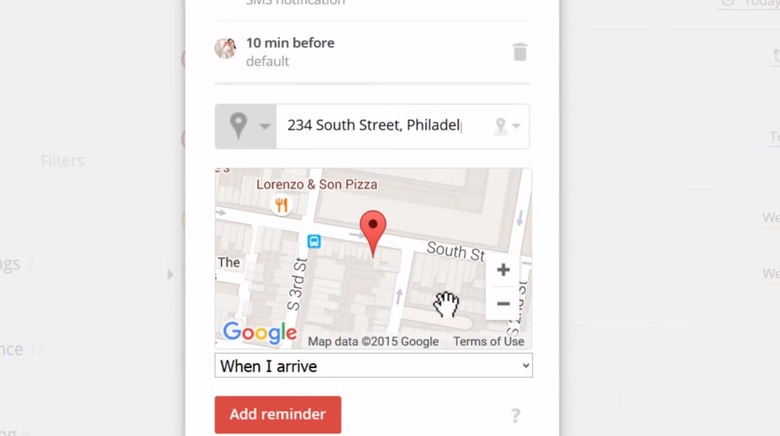

It's easy to tell your phone to remind you to buy something later. Sometimes it will even be smart enough to ask when that "later" is. It's not as easy, however, to tell it to remind you when you're actually near a place where you can buy it. AI in phones seems to have a problem with context. Or rather, it has trouble determining context without you having to spell it out first.

Curiously enough, our phones do seem to be aware of their context, specifically their location, and therefore yours. They offer up information related to a place, just not the information you actually need, where you need it. Some to-do apps do have location-based reminders, but it's not a common feature. And, of course, you have to remember to add that reminder in the first place. Wouldn't it be nice if your smartphone were smart enough to do it for you?
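To make the idea concrete, here is a minimal sketch of a location-triggered reminder, assuming the phone already knows its own coordinates and has a list of nearby places tagged by category (for instance, from a maps database). The `Place` and `Reminder` types, the `due_reminders` function, and the 200-meter radius are all hypothetical, chosen for illustration. The check itself is just a great-circle (haversine) distance test against each matching place.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters

@dataclass
class Place:
    name: str
    category: str  # hypothetical tag, e.g. "grocery", assumed to come from a maps database
    lat: float
    lon: float

@dataclass
class Reminder:
    text: str
    category: str  # the kind of place where this reminder can be fulfilled

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/lon points, in meters."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def due_reminders(lat, lon, reminders, places, radius_m=200):
    """Return (reminder text, place name) pairs for reminders that can be
    fulfilled at a place of the matching category within radius_m."""
    hits = []
    for r in reminders:
        for p in places:
            if p.category == r.category and haversine_m(lat, lon, p.lat, p.lon) <= radius_m:
                hits.append((r.text, p.name))
                break  # one nearby match is enough to fire this reminder
    return hits

# Usage with made-up coordinates: a "Buy milk" reminder fires near a grocery store.
places = [Place("Corner Grocery", "grocery", 40.0, -75.0)]
reminders = [Reminder("Buy milk", "grocery")]
print(due_reminders(40.0005, -75.0, reminders, places))
```

Note that the reminder is tied to a kind of place rather than a specific address, which is exactly what lets the phone "remember the milk" at whichever store you happen to pass. A real app would more likely hand the location checks to the platform's geofencing services than poll distances itself.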

Predicting the future

A big part of artificial intelligence and machine learning revolves around prediction. That is, a model takes a set of data, learns from it, and predicts the next likely step or what an object might be. That's extremely useful in a game of Go, but it could also be used to fake smartphone responsiveness. What if your phone knew which app you were going to launch next and preloaded it in the background, so that the moment you tapped the icon, the app launched instantly with no loading time?
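One simple way to guess the next app is a first-order Markov model: count which app tends to follow which, and predict the most frequent successor of the app you just opened. The sketch below is only an illustration of that technique, not how Huawei, Xiaomi, or anyone else actually does it. The `NextAppPredictor` class and the app names are hypothetical, and the actual preloading step is left out.

```python
from collections import Counter, defaultdict

class NextAppPredictor:
    """First-order Markov model: counts which app tends to follow which."""

    def __init__(self):
        self.transitions = defaultdict(Counter)  # previous app -> Counter of next apps
        self.last_app = None

    def record_launch(self, app):
        """Log an app launch and update the transition counts."""
        if self.last_app is not None:
            self.transitions[self.last_app][app] += 1
        self.last_app = app

    def predict(self, k=1):
        """Return up to k apps most likely to be launched next, best first."""
        if self.last_app is None:
            return []
        followers = self.transitions.get(self.last_app, Counter())
        return [app for app, _ in followers.most_common(k)]

# Usage with a made-up launch history.
p = NextAppPredictor()
for app in ["alarm", "email", "news", "alarm", "email", "chat", "alarm", "email", "news"]:
    p.record_launch(app)
print(p.predict())  # candidates the system could preload in the background
```

A model this small can run entirely on the device, and it captures the "creatures of habit" pattern directly: if you always check email right after dismissing the alarm, email is the app worth warming up.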

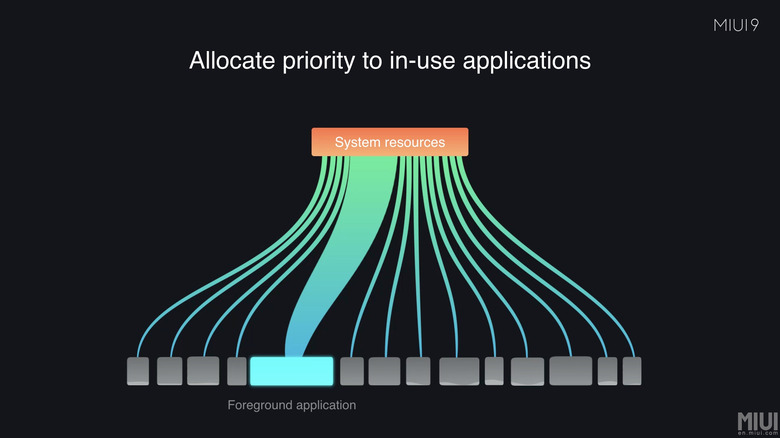

Huawei and Xiaomi have actually been working on that kind of "predictive" AI but have suddenly gone quiet about it. It seems to have taken a back seat to AI-assisted camera scene recognition and face recognition, which appear to be the most popular AI use cases. So why waste resources on something that would actually be useful to all kinds of users? Humans are creatures of habit, so it's sometimes easy to predict what a user will do next. But even without that kind of advanced prediction, an AI-assisted performance tune-up could help make smartphones feel, well, smarter.

(Xiaomi MIUI 9)

Why do you need to explicitly turn on a game mode when the system can detect that you're playing a game and reallocate resources, while still keeping your Twitter feed up to date? Why do you have to manually set a Do Not Disturb schedule when your phone already knows when you usually sleep and wake up, and could block all but the most important notifications from disturbing your sleep? These are the things we could use help with every day, not just deciding what ISO sensitivity to use when taking a photo outdoors.

Knowing too much

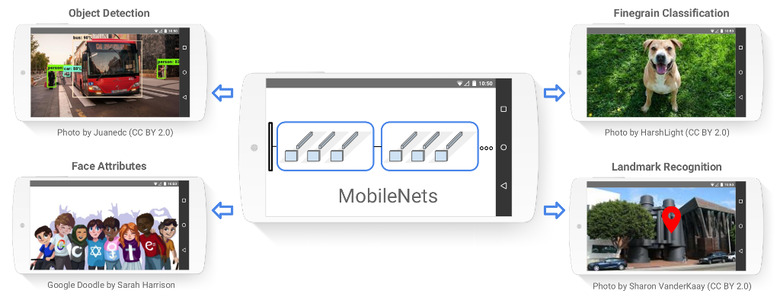

An AI-empowered smartphone might be a dream for tech lovers, but it could be a nightmare for privacy-conscious users. AI has become almost synonymous with the cloud, though there's little reason it should be. In the examples above, only those that actually have to search for information need to go online. The others can use data already stored on your device or, at most, the phone's GPS hardware.

Many voice-controlled AI assistants communicate with remote servers, at the very least to offload language processing. That may be an acceptable excuse, within limits, but not all AI has to be voice-activated. Our hardware is also becoming powerful enough that basic machine learning and processing can be done on the device itself. Unfortunately, companies won't make money that way.

(TensorFlow MobileNets)

Wrap-up

AI has pretty much become a marketing buzzword and is sometimes inaccurately applied to products and features. While there's a smidgen of machine learning in scene and object recognition, it's only a small part of what AI can do. Other common applications are basically automation that a human has to set up first. It's an underwhelming and underutilized application of AI, one that almost gives the science a bad name while masking the fact that our smartphones haven't really become any smarter.