NVIDIA GauGAN Neural Network Makes Masterpieces Out Of Doodles

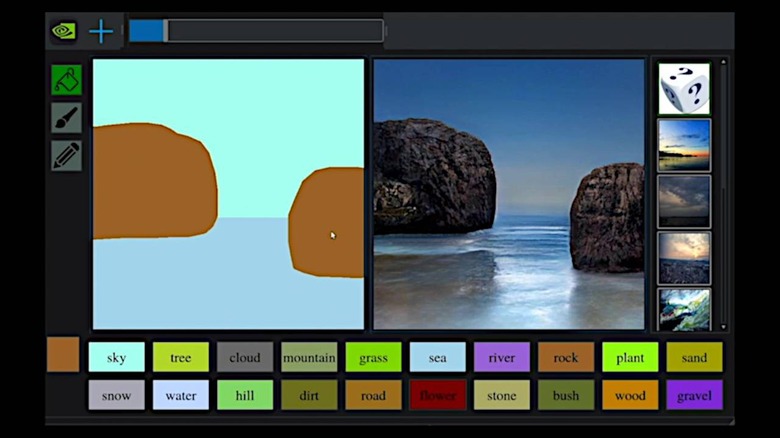

Soon, not being able to draw may no longer be an excuse for not expressing yourself or your ideas in beautiful, photorealistic images. If you can scribble a shape and click a few buttons, a shaky line can turn into a mountain range, a lopsided circle can become a lake, and incomprehensible doodles can transform into a masterpiece. That is essentially what NVIDIA researchers have accomplished with GauGAN, a deep learning model that can turn almost any collection of lines into a work of art.

The neural network isn't simply replacing doodles and shapes with photorealistic images of rocks, mountains, skies, or water. Beyond following the original shape of the drawing, GauGAN also accounts for the other objects in the scene. Turn a patch of grass into a pond and it will create reflections on the surface based on what surrounds the new body of water.

To accomplish this, NVIDIA researchers employed generative adversarial networks, the "GAN" in GauGAN's name. A generator network creates images that it presents to a discriminator network. The discriminator, which has learned from real images what real-world objects look like, guides the generator with pixel-by-pixel feedback.
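The tug-of-war between the two networks can be illustrated with a toy calculation. The sketch below is not GauGAN's actual training code; it assumes the textbook cross-entropy form of the GAN objective and uses hypothetical function names and made-up scores, where each score is the discriminator's estimate (between 0 and 1) that a pixel came from a real photo.

```python
import math

EPS = 1e-12  # guards against log(0)

def discriminator_loss(real_scores, fake_scores):
    """Cross-entropy loss for the discriminator (illustrative only).

    It is low when real pixels score near 1 and generated pixels near 0.
    """
    real_terms = [-math.log(s + EPS) for s in real_scores]
    fake_terms = [-math.log(1.0 - s + EPS) for s in fake_scores]
    return (sum(real_terms) + sum(fake_terms)) / (len(real_terms) + len(fake_terms))

def generator_loss(fake_scores):
    """Loss for the generator (illustrative only).

    It is low when the discriminator rates generated pixels as real,
    so each per-pixel score acts as feedback on that pixel.
    """
    return sum(-math.log(s + EPS) for s in fake_scores) / len(fake_scores)

# Hypothetical per-pixel scores for a tiny four-pixel image.
convincing_fake = [0.9, 0.8, 0.9, 0.85]
obvious_fake = [0.1, 0.2, 0.1, 0.15]

# The generator is penalized less when its pixels fool the discriminator.
print(generator_loss(convincing_fake) < generator_loss(obvious_fake))
```

In a real system both networks are deep neural networks trained in alternation, with each one's loss driving its own weight updates; only the scoring idea is shown here.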

So neither the developers nor the painter have to say "create a reflection on this body of water." GauGAN does so automatically because, by studying real photos, it has learned that water creates reflections. Even more impressive, when you change green grass into snow, the neural network automatically adjusts the sky color and surrounding foliage to suit a winter scene.

While GauGAN is impressive and entertaining by itself, the researchers envision that it could be used to create tools that will, in turn, empower users to create their own virtual worlds. Users will no longer have to rely on painstaking processes or call on artists to put their thoughts into images. They just have to pick up a mouse, or a stylus, and doodle away.