Neural Network Used To Transform Flat Images Into High-Res 3D Models

Researchers with Berkeley have detailed a new technology that can take a flat image and transform it into a 3D model. While that alone isn't new, the technology detailed in a new paper is notable because it can create high-quality 3D models from a single image, making it a viable foundation for potentially turning any single image into a detailed and usable three-dimensional model.

The researchers explain that 3D reconstruction typically uses convolutional neural networks (CNN) to anticipate the shape of any given object as it would exist in 3D space, a process that requires the neural network to be trained using CAD model datasets. Various classes of objects can be learned by the CNN, but the output is often 'coarse' due to certain limitations in predicting volumes.

The researchers helped solve this issue by essentially building upon the low-resolution models to generate higher-resolution models, which itself is ultimately based on a flat image. "We exploit the two dimensional nature of surfaces by hierarchically predicting fine resolution voxels only where a surface is expected judging from the low resolution prediction," the paper explains.

The team calls their method 'hierarchical surface prediction,' HSP for short, and it starts by predicting the low-resolution voxels (that is, volume elements) for a given object. The method deviates from typical CNN approaches, though, by classifying each voxel based on three classes — free space, boundary, and occupied space — rather than two classes. By doing so, the system is able to predict higher-resolution elements, the end result being high-res voxel grids.

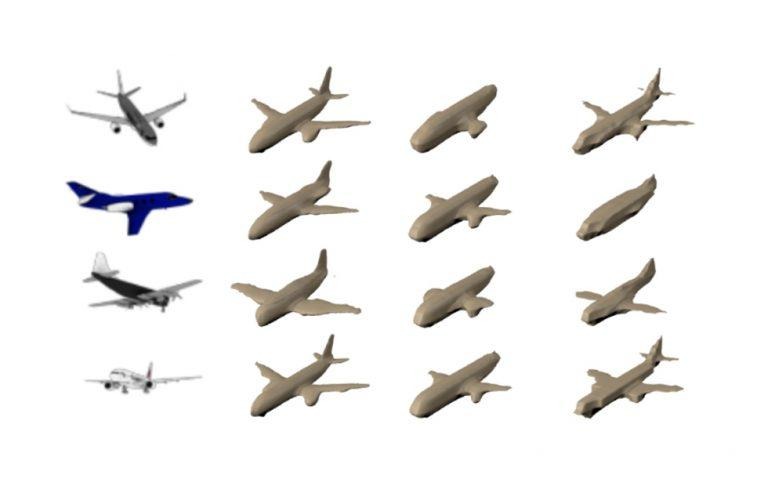

The images above show this 3D model generation and then refinement over the course of analysis, starting with the flat image — an airplane, in this case — and refining it into a more detailed end product. Rather than ending up with a bulbous 3D model that shows only a rudimentary airplane shape, the end result is detailed enough to include engines and wing shapes.

SOURCE: Berkeley