MIT Conduct-A-Bot System Controls A Drone Using Muscle Signals

Researchers at MIT have created a system that they believe brings seamless human-robot collaboration a step closer. Called "Conduct-A-Bot," it uses muscle signals picked up by wearable sensors to pilot a drone.

The system places electromyography (EMG) and motion sensors on the biceps, triceps, and forearms of the person piloting the drone. The sensors measure muscle signals and arm movement, and algorithms process those signals to detect gestures in real time. No offline calibration or per-user training data is required.
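The article does not describe Conduct-A-Bot's detection algorithm. As a rough illustration of how a gesture can be detected in real time without an offline calibration step, the sketch below assumes a single EMG channel, computes the root-mean-square (RMS) amplitude over a sliding window, and flags a "tensed" arm when that amplitude rises well above a per-user baseline learned on the fly. The class `OnlineTenseDetector` and its parameters `window`, `k`, `alpha`, and `rel_floor` are hypothetical; this is a generic adaptive-threshold technique, not MIT's method.

```python
import math
from collections import deque


class OnlineTenseDetector:
    """Hypothetical detector: flags muscle tension when the RMS of a sliding
    EMG window rises well above a per-user baseline learned online.
    This is an illustrative assumption, not Conduct-A-Bot's algorithm."""

    def __init__(self, window=50, k=4.0, alpha=0.01, rel_floor=0.1):
        self.window = deque(maxlen=window)  # most recent raw EMG samples
        self.k = k                          # threshold width in baseline std devs (assumed)
        self.alpha = alpha                  # adaptation rate of the moving baseline (assumed)
        self.rel_floor = rel_floor          # minimum spread as a fraction of the mean (assumed)
        self.mean = None                    # running baseline RMS
        self.var = 0.0                      # running baseline variance

    def update(self, sample):
        """Consume one EMG sample; return True if the arm looks tensed."""
        self.window.append(sample)
        if len(self.window) < self.window.maxlen:
            return False  # not enough data yet for a stable RMS
        rms = math.sqrt(sum(x * x for x in self.window) / len(self.window))
        if self.mean is None:
            self.mean = rms  # the first full window seeds the baseline
            return False
        # Floor the spread so a near-constant baseline cannot make the
        # threshold so tight that the detector never adapts again.
        spread = max(math.sqrt(self.var), self.rel_floor * self.mean)
        tensed = rms > self.mean + self.k * spread
        if not tensed:
            # Update the exponentially weighted mean and variance only on
            # "rest" windows, so the baseline follows this wearer without
            # a separate training phase.
            diff = rms - self.mean
            self.mean += self.alpha * diff
            self.var = (1 - self.alpha) * (self.var + self.alpha * diff * diff)
        return tensed
```

Feeding the detector low-amplitude samples keeps its baseline tracking the wearer; a burst of larger samples then flips the output to `True` shortly after it begins. Adapting only on non-tensed windows is the design choice that lets the threshold calibrate itself during use rather than in an offline session.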

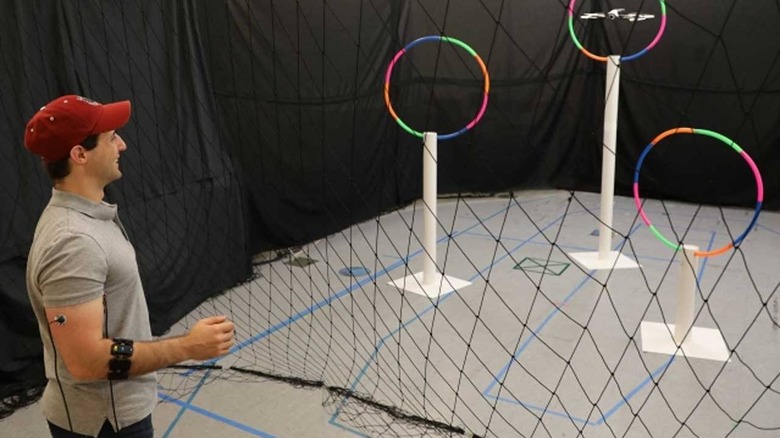

MIT says the system needs only two or three wearable sensors and no equipment in the surrounding environment. Beyond drones, it could be used for tasks such as navigating menus on electronic devices or supervising autonomous robots. The team tested Conduct-A-Bot with a Parrot Bebop 2 drone but says any commercial drone could be used.

The system can detect gestures such as rotational gestures, clenched fists, tensed arms, and activated forearms. Conduct-A-Bot interprets these signals as commands that move the drone left, right, up, down, or forward, or make it rotate or stop.
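The article lists the detected gestures and the resulting drone motions but not which gesture triggers which motion. The following sketch shows one way such a mapping could be structured: a lookup table from gesture labels to velocity setpoints. The `Gesture` names, the `DroneCommand` fields, the specific pairings, and the `speed` factor are all hypothetical, and the code does not talk to any real drone SDK.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Gesture(Enum):
    # Hypothetical gesture labels loosely based on the article's list.
    WRIST_ROTATE_LEFT = auto()
    WRIST_ROTATE_RIGHT = auto()
    FIST_CLENCH = auto()
    ARM_TENSE = auto()
    FOREARM_UP = auto()
    FOREARM_DOWN = auto()


@dataclass(frozen=True)
class DroneCommand:
    """Hypothetical velocity setpoint in normalized units."""
    forward: float = 0.0
    lateral: float = 0.0   # positive = right
    vertical: float = 0.0  # positive = up


# Illustrative pairings only; the article does not say which gesture
# drives which motion, so this table is an assumption.
GESTURE_TO_COMMAND = {
    Gesture.WRIST_ROTATE_LEFT: DroneCommand(lateral=-1.0),
    Gesture.WRIST_ROTATE_RIGHT: DroneCommand(lateral=1.0),
    Gesture.FIST_CLENCH: DroneCommand(forward=1.0),
    Gesture.FOREARM_UP: DroneCommand(vertical=1.0),
    Gesture.FOREARM_DOWN: DroneCommand(vertical=-1.0),
    Gesture.ARM_TENSE: DroneCommand(),  # all zeros: stop and hover
}


def command_for(gesture, speed=0.5):
    """Scale the unit command for a detected gesture by a speed factor.
    Unrecognized gestures fall back to a zero command (hover)."""
    base = GESTURE_TO_COMMAND.get(gesture, DroneCommand())
    return DroneCommand(
        forward=base.forward * speed,
        lateral=base.lateral * speed,
        vertical=base.vertical * speed,
    )
```

Keeping the mapping in a table makes it easy to remap gestures per user or per task; a rotate command, for example, would be one more field and one more entry. Falling back to a zero command so the drone hovers is a conservative default when a gesture is not recognized.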

During testing, the drone responded correctly to 82% of more than 1,500 gestures while it was flown remotely through hoops. When the drone was not being controlled, the system correctly identified about 94% of cued gestures.

The team says the system could eventually support a range of human-robot collaboration applications, including remote exploration, assistive personal robots, and manufacturing tasks. Controls of this type could also open new avenues for contactless work. Because the system calibrates itself to each person's signals while they make the gestures that control the robot, it is faster and easier for casual users to pick up.