IBM Sets New Records In Quantum Computing

IBM scientists have set three new records in quantum computing, the company announced today at the American Physical Society meeting in Boston. The team made significant advances toward a quantum computing system that is stable enough to be scalable, bringing quantum computing one step closer to practical use.

We're certainly not all quantum physicists here, so we won't get into the nitty-gritty, but essentially quantum computing promises to solve certain mathematical problems at speeds not currently possible by any other means. It is based on quantum physics and starts with the qubit rather than the bit used in traditional computing. A bit can exist in only one of two states, "0" or "1". A qubit, however, can also be in a superposition, a combination of both states at once.
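For the curious, here is a minimal sketch of that idea in Python. It assumes the standard textbook picture in which a qubit is described by two amplitudes, one for "0" and one for "1", and the chance of reading each value is the squared size of its amplitude. The function name `measurement_probabilities` and the example states are ours for illustration and have nothing to do with IBM's hardware.

```python
import math

def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for a qubit with amplitudes alpha and beta.

    Illustrative helper: the amplitudes are normalized first, so any
    pair that is not both zero is accepted.
    """
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm

# A classical bit corresponds to one of the first two states.
zero = (1.0, 0.0)
one = (0.0, 1.0)
# An equal superposition: both outcomes are equally likely when measured.
equal_superposition = (1 / math.sqrt(2), 1 / math.sqrt(2))

for name, state in [("zero", zero), ("one", one), ("superposition", equal_superposition)]:
    p0, p1 = measurement_probabilities(*state)
    print(f"{name}: P(0)={p0:.2f}, P(1)={p1:.2f}")
```

A bit is always fully "0" or fully "1", while the superposition carries weight on both values until it is measured.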

This may not seem like a huge deal, but mathematically it makes a big difference in processing power. Quantum computers can in principle work on millions of computations at once, many times faster than even today's fastest supercomputers. This is especially helpful for encoding or decoding large amounts of sensitive data, searching databases of unstructured information, and solving mathematical problems that were previously out of reach.
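To get a feel for the math, here is a rough sketch, assuming the textbook rule that describing n qubits takes 2^n amplitudes. The helper name `state_space_size` is ours, and the count measures how quickly the description grows, not how fast any particular machine runs.

```python
def state_space_size(num_qubits):
    """Number of amplitudes needed to describe num_qubits qubits (2**n)."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return 2 ** num_qubits

# Each added qubit doubles the count, so it grows very quickly.
for n in (1, 10, 100, 1000):
    size = state_space_size(n)
    print(f"{n:>4} qubits -> a number with {len(str(size))} digits")
```

Ten qubits already need 1,024 amplitudes, and every additional qubit doubles that figure.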

However, qubits have always suffered from decoherence: while a qubit is in superposition, interference from other parts of the computer can cause it to switch to state 0. For quantum computing to become useful and scalable, methods must be devised to keep qubits coherent long enough for error-correction techniques to prevent the inaccurate computation that decoherence causes.

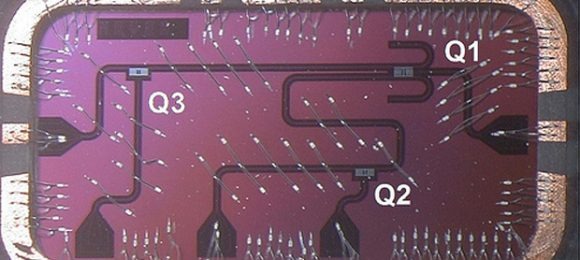

The IBM team has developed a "three-dimensional" superconducting qubit (a 3D qubit) that can sustain coherence for up to 100 microseconds, which is two to four times longer than previous records and approaches the threshold for effective error correction. This more stable 3D qubit could potentially be scaled up to hundreds or thousands of qubits, exponentially increasing the processing power.

[via Forbes]