Here's Facebook's Plan For Fighting Fake News

It isn't exactly a secret that Facebook has a fake news problem. The company came under fire during and after this year's US election for the amount of fake news that spread across the site, and it soon announced plans to combat such stories. Today we're getting our first look at what Facebook will do to curb the spread of fake news on its site.

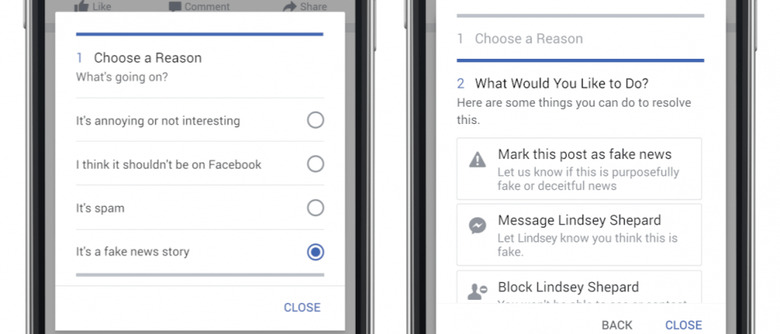

The first step in identifying fake news will rest with users. Facebook has made its reporting tools easier to use, so if you see a fake news story shared on the site, you can report it as such. Just use the drop-down menu on the post to report it as a fake news story, and you'll be presented with a number of options: you can flag the story as fake, send a message to the person who shared it to let them know it's made up, or block them altogether.

From there, Facebook says it will use reports from users and "other signals" to send stories that are suspected to be false to third-party fact checkers. The fact checkers are signatories of Poynter's International Fact Checking Code of Principles, and include organizations like Snopes, ABC News, and the Associated Press. If they determine that the story is false, the link will be flagged as "disputed" on Facebook.

At this point, the fake news story doesn't actually get removed, but that disputed flag will be visible to everyone. Disputed stories will appear lower in News Feeds, and users will get an alert when they try to share a disputed link. Essentially, it's a way for Facebook to allow users to share whatever they want while designating that some links may be less trustworthy than others.

These efforts only confront end-users sharing fake news, but Facebook is also trying to remove incentives for creating fake news in the first place. If a news story is labeled as disputed, it can no longer be turned into an ad or a promoted post, potentially reducing the amount of income purveyors of fake news pull in. In my opinion, that sounds like an excellent start to making fake news a little less attractive, not just to users, but also to publishers.

Beyond that, Facebook says it has eliminated the ability for publishers to spoof domains, which it hopes will "reduce the prevalence of sites that pretend to be real publications." It also says that it will be "analyzing publisher sites to detect where policy enforcement actions might be necessary."

At the end of it all, it sounds like Facebook is headed in the right direction when it comes to combating fake news. While it won't take measures to remove fake news stories that are posted by users, you'd hope that the "disputed" label would be enough for most rational people. Many of these features are currently in testing, and you should be able to try Facebook's new fake news reporting tool right now.

SOURCE: Facebook